On Path to Multimodal Generalist: General-Level and General-Bench

[📖 Project] [🏆 Leaderboard] [📄 Paper] [🤗 Paper-HF] [🤗 Dataset-HF (Close-Set)] [🤗 Dataset-HF (Open-Set)] [📝 Github]

Open Set of General-Bench

We divide our General-Bench into two settings: Open and Close.

This is the Open Set, in which we release the full ground-truth annotations for all datasets, so that models can be trained and evaluated for open research purposes.

If you wish to rank on our 🏆 leaderboard, please use the 👉 Close Set.

📕 Table of Contents

- ✨ File Organization Structure

- 🍟 Usage

- 🌐 General-Bench

- 🖼️ Image Task Taxonomy

- 📽️ Video Task Taxonomy

- 📞 Audio Task Taxonomy

- 💎 3D Task Taxonomy

- 📚 Language Task Taxonomy

✨✨✨ File Organization Structure

Here is the organization structure of the file system:

General-Bench
├── Image
│   ├── comprehension
│   │   ├── Bird-Detection
│   │   │   ├── annotation.json
│   │   │   └── images
│   │   │       └── Acadian_Flycatcher_0070_29150.jpg
│   │   ├── Bottle-Anomaly-Detection
│   │   │   ├── annotation.json
│   │   │   └── images
│   │   └── ...
│   └── generation
│       └── Layout-to-Face-Image-Generation
│           ├── annotation.json
│           └── images
│               └── ...
├── Video
│   ├── comprehension
│   │   └── Human-Object-Interaction-Video-Captioning
│   │       ├── annotation.json
│   │       └── videos
│   │           └── ...
│   └── generation
│       └── Scene-Image-to-Video-Generation
│           ├── annotation.json
│           └── videos
│               └── ...
├── 3d
│   ├── comprehension
│   │   └── 3D-Furniture-Classification
│   │       ├── annotation.json
│   │       └── pointclouds
│   │           └── ...
│   └── generation
│       └── Text-to-3D-Living-and-Arts-Point-Cloud-Generation
│           ├── annotation.json
│           └── pointclouds
│               └── ...
├── Audio
│   ├── comprehension
│   │   └── Accent-Classification
│   │       ├── annotation.json
│   │       └── audios
│   │           └── ...
│   └── generation
│       └── Video-To-Audio
│           ├── annotation.json
│           └── audios
│               └── ...
├── NLP
│   ├── History-Question-Answering
│   │   └── annotation.json
│   ├── Abstractive-Summarization
│   │   └── annotation.json
│   └── ...
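The directory layout above can also be walked programmatically, for example to group tasks by modality or by comprehension versus generation. This is a minimal sketch, assuming every task folder contains one annotation.json and that the depths shown in the tree hold throughout (modality/category/task for Image, Video, 3d and Audio; modality/task for NLP). The helper name `list_tasks` is hypothetical and not part of the release.

```python
from pathlib import Path


def list_tasks(root):
    """Return sorted (modality, category, task) triples for every folder under
    `root` that contains an annotation.json.

    Assumption: non-NLP modalities use <modality>/<category>/<task>/ and NLP
    uses <modality>/<task>/, in which case category is reported as None.
    """
    root = Path(root)
    tasks = []
    for ann in sorted(root.rglob("annotation.json")):
        # Path components of the task folder relative to the benchmark root.
        rel = ann.parent.relative_to(root).parts
        if len(rel) == 3:
            modality, category, task = rel
        elif len(rel) == 2:
            modality, task = rel
            category = None
        else:
            # Unexpected depth: skip it rather than guess its meaning.
            continue
        tasks.append((modality, category, task))
    return tasks
```

On a local copy laid out as in the tree, `list_tasks("General-Bench")` would include triples such as `("Image", "comprehension", "Bird-Detection")` and `("NLP", None, "History-Question-Answering")`. Deriving the structure from the location of annotation.json avoids hard-coding modality or task names.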

An illustrative example of file formats:

🍟🍟🍟 Usage

Please download all files in this repository. We also provide overview.json as an example of the dataset format.

For more instructions, please go to the document page.
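Because the exact fields of overview.json and of each annotation.json are not listed here, a quick way to get oriented after downloading is to print a schema-agnostic summary of a file. The sketch below is a hypothetical helper (`summarize_json` is not part of the release) that assumes only that the file is valid JSON; it reports the top-level type, the number of entries, and a few key names, without relying on any particular field.

```python
import json


def summarize_json(path, max_keys=10):
    """Summarize a JSON file without assuming its schema.

    Returns the top-level type name, its length (for objects and arrays),
    up to `max_keys` top-level keys for an object, or the keys of the first
    element when the top level is an array of objects.
    """
    with open(path, encoding="utf-8") as f:
        data = json.load(f)
    summary = {"type": type(data).__name__}
    if isinstance(data, dict):
        summary["size"] = len(data)
        summary["keys"] = list(data)[:max_keys]
    elif isinstance(data, list):
        summary["size"] = len(data)
        if data and isinstance(data[0], dict):
            summary["first_item_keys"] = list(data[0])[:max_keys]
    return summary
```

Running it on overview.json first, then on a task's annotation.json, shows whether a file is a list of samples or a keyed object before writing any task-specific loader.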

🌐🌐🌐 General-Bench

General-Bench is a companion massive multimodal benchmark dataset that encompasses a broader spectrum of skills, modalities, formats, and capabilities, including over 700 tasks and 325K instances.

Overview of General-Bench, which covers 145 skills across more than 700 tasks with over 325,800 samples, spanning comprehension and generation categories in various modalities.

🍕🍕🍕 Capabilities and Domains Distribution

Distribution of various capabilities evaluated in General-Bench.

Distribution of various domains and disciplines covered by General-Bench.

🖼️ Image Task Taxonomy

Taxonomy and hierarchy of data in terms of Image modality.

📽️ Video Task Taxonomy

Taxonomy and hierarchy of data in terms of Video modality.

📞 Audio Task Taxonomy

Taxonomy and hierarchy of data in terms of Audio modality.

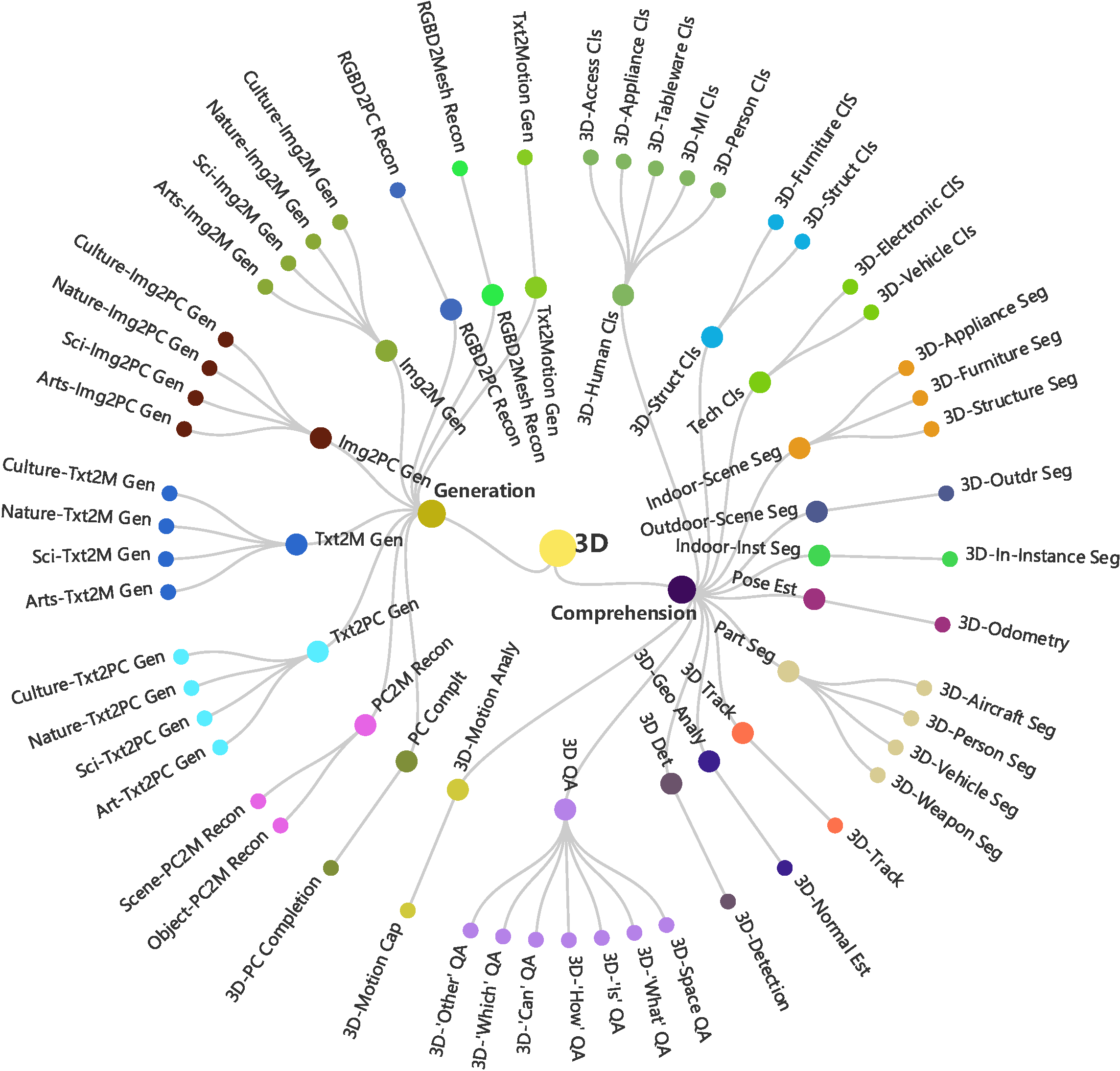

💎 3D Task Taxonomy

Taxonomy and hierarchy of data in terms of 3D modality.

📚 Language Task Taxonomy

Taxonomy and hierarchy of data in terms of Language modality.

🚩🚩🚩 Citation

If you find this project useful for your research, please cite our paper:

@article{fei2025pathmultimodalgeneralistgenerallevel,
  title={On Path to Multimodal Generalist: General-Level and General-Bench},
  author={Hao Fei and Yuan Zhou and Juncheng Li and Xiangtai Li and Qingshan Xu and Bobo Li and Shengqiong Wu and Yaoting Wang and Junbao Zhou and Jiahao Meng and Qingyu Shi and Zhiyuan Zhou and Liangtao Shi and Minghe Gao and Daoan Zhang and Zhiqi Ge and Weiming Wu and Siliang Tang and Kaihang Pan and Yaobo Ye and Haobo Yuan and Tao Zhang and Tianjie Ju and Zixiang Meng and Shilin Xu and Liyu Jia and Wentao Hu and Meng Luo and Jiebo Luo and Tat-Seng Chua and Shuicheng Yan and Hanwang Zhang},
  eprint={2505.04620},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2505.04620},
}