diff --git a/.gitattributes b/.gitattributes

index a6344aac8c09253b3b630fb776ae94478aa0275b..3e15efd719e55ebabe8454de9919507fc0c87be9 100644

--- a/.gitattributes

+++ b/.gitattributes

@@ -25,11 +25,12 @@

*.safetensors filter=lfs diff=lfs merge=lfs -text

saved_model/**/* filter=lfs diff=lfs merge=lfs -text

*.tar.* filter=lfs diff=lfs merge=lfs -text

-*.tar filter=lfs diff=lfs merge=lfs -text

*.tflite filter=lfs diff=lfs merge=lfs -text

*.tgz filter=lfs diff=lfs merge=lfs -text

*.wasm filter=lfs diff=lfs merge=lfs -text

*.xz filter=lfs diff=lfs merge=lfs -text

-*.zip filter=lfs diff=lfs merge=lfs -text

*.zst filter=lfs diff=lfs merge=lfs -text

*tfevents* filter=lfs diff=lfs merge=lfs -text

+*zip filter=lfs diff=lfs merge=lfs -text

+SuperResolutionAnimeDiffusion.zip filter=lfs diff=lfs merge=lfs -text

+random_examples.zip filter=lfs diff=lfs merge=lfs -text
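
The pattern changes in this hunk are easy to misread, so here is a small sketch of what they match. It uses Python's `fnmatch` module as an approximation of Git's own pattern matcher; that substitution is an assumption, and it is only reasonable for slash-free patterns like these. The file names are made up for illustration.

```python
from fnmatch import fnmatchcase


def matches(pattern: str, filename: str) -> bool:
    """Approximate a slash-free .gitattributes pattern against a file's basename."""
    return fnmatchcase(filename, pattern)


# Hypothetical file names, chosen to show where the patterns differ.
names = ["random_examples.zip", "notes.gzip", "archive.tar", "archive.tar.gz"]

for name in names:
    print(
        name,
        "| *.zip:", matches("*.zip", name),
        "| *zip:", matches("*zip", name),
        "| *.tar.*:", matches("*.tar.*", name),
    )
```

Under this approximation, `*zip` is broader than the removed `*.zip` (it also catches names such as `notes.gzip`), the two explicit `.zip` entries are already covered by `*zip`, and a plain `archive.tar` is no longer matched once `*.tar` is removed.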

diff --git a/.gitignore b/.gitignore

new file mode 100644

index 0000000000000000000000000000000000000000..98c2d2047b6d29e3897102709df995a10b1bea8b

--- /dev/null

+++ b/.gitignore

@@ -0,0 +1,161 @@

+# dev files

+*.cache

+*.dev.py

+*.mv

+state_dict/

+integrated_datasets/

+*.results

+*.tokenizer

+*.model

+*.state_dict

+*.config

+*.args

+*.gz

+*.bin

+*.result.txt

+*.DS_Store

+*.tmp

+*.args.txt

+*.summary.txt

+*.dat

+*.graph

+# Byte-compiled / optimized / DLL files

+__pycache__/

+*.py[cod]

+*$py.class

+*.pyc

+experiments/

+tests/

+*.result.json

+.idea/

+imgs/

+

+# Embedding

+glove.840B.300d.txt

+glove.42B.300d.txt

+glove.twitter.27B.txt

+

+# project main files

+release_note.json

+

+# C extensions

+*.so

+

+# Distribution / packaging

+.Python

+build/

+develop-eggs/

+dist/

+downloads/

+eggs/

+.eggs/

+lib64/

+parts/

+sdist/

+var/

+wheels/

+pip-wheel-metadata/

+share/python-wheels/

+*.egg-info/

+.installed.cfg

+*.egg

+MANIFEST

+

+# PyInstaller

+# Usually these files are written by a python script from a template

+# before PyInstaller builds the exe, so as to inject date/other infos into it.

+*.manifest

+*.spec

+

+# Installer logs

+pip-log.txt

+pip-delete-this-directory.txt

+

+# Unit test / coverage reports

+htmlcov/

+.tox/

+.nox/

+.coverage

+.coverage.*

+.cache

+nosetests.xml

+coverage.xml

+*.cover

+*.py,cover

+.hypothesis/

+.pytest_cache/

+

+# Translations

+*.mo

+*.pot

+

+# Django stuff:

+*.log

+local_settings.py

+db.sqlite3

+db.sqlite3-journal

+

+# Flask stuff:

+instance/

+.webassets-cache

+

+# Scrapy stuff:

+.scrapy

+

+# Sphinx documentation

+docs/_build/

+

+# PyBuilder

+target/

+

+# Jupyter Notebook

+.ipynb_checkpoints

+

+# IPython

+profile_default/

+ipython_config.py

+

+# pyenv

+.python-version

+

+# pipenv

+# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

+# However, in case of collaboration, if having platform-specific dependencies or dependencies

+# having no cross-platform support, pipenv may install dependencies that don't work, or not

+# install all needed dependencies.

+#Pipfile.lock

+

+# celery beat schedule file

+celerybeat-schedule

+

+# SageMath parsed files

+*.sage.py

+

+# Environments

+.env

+.venv

+env/

+venv/

+ENV/

+env.bak/

+venv.bak/

+

+# Spyder project settings

+.spyderproject

+.spyproject

+

+# Rope project settings

+.ropeproject

+

+# mkdocs documentation

+/site

+

+# mypy

+.mypy_cache/

+.dmypy.json

+dmypy.json

+

+# Pyre type checker

+.pyre/

+.DS_Store

+examples/.DS_Store

diff --git a/README.md b/README.md

index beba3fc4986cd0059b4d6a37297907ed41dff53c..a8c0364b05ee13759f0ef3d3e4b911de523d5414 100644

--- a/README.md

+++ b/README.md

@@ -9,4 +9,126 @@ app_file: app.py

pinned: false

---

-Check out the configuration reference at https://huggingface.co/docs/hub/spaces-config-reference

+# If you have a GPU, try the [Stable Diffusion WebUI](https://github.com/yangheng95/stable-diffusion-webui)

+

+

+# [Online Web Demo](https://huggingface.co/spaces/yangheng/Super-Resolution-Anime-Diffusion)

+

+This is a demo forked from https://huggingface.co/Linaqruf/anything-v3.0.

+

+## Super Resolution Anime Diffusion

+Currently, many diffusion models can only generate images smaller than 1024 pixels in width and height.

+I integrated super resolution with the [Anything diffusion model](https://huggingface.co/Linaqruf/anything-v3.0) to produce high-resolution images.

+Thanks to the open-source project: https://github.com/yu45020/Waifu2x

+

+

+## Modifications

+1. Disable the safety checker to save time and memory. You still need to abide by the original rules of the model.

+2. Add a super-resolution function to the model.

+3. Add a batch generation function to the model (see inference.py).

+

+## Install

+1. Install [Anaconda](https://www.anaconda.com/products/distribution) or [Miniconda](https://docs.conda.io/en/latest/miniconda.html)

+2. Create a conda environment:

+```bash

+conda create -n diffusion python=3.9

+conda activate diffusion

+```

+3. Install requirements:

+```bash

+conda install pytorch pytorch-cuda=11.7 -c pytorch -c nvidia

+pip install -r requirements.txt

+```

+4. Run web demo:

+```

+python app.py

+```

+5. Or run batch anime generation:

+```

+python inference.py

+```

+See the source code for details. You can set the scale factor to magnify pictures.
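
To make the effect of the scale factor concrete, here is a minimal sketch of the arithmetic. The function name `upscaled_size` is hypothetical and is not taken from `inference.py`; it only assumes that an integer scale factor multiplies both dimensions.

```python
def upscaled_size(width: int, height: int, scale: int) -> tuple:
    """Return the (width, height) of an image after integer upscaling."""
    if scale <= 0:
        raise ValueError("scale must be a positive integer")
    return width * scale, height * scale


# A 512x768 generation upscaled with a x4 scale factor.
print(upscaled_size(512, 768, 4))
```

With these inputs, a 512x768 picture becomes 2048x3072, well beyond the 1024-pixel limit mentioned earlier.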

+

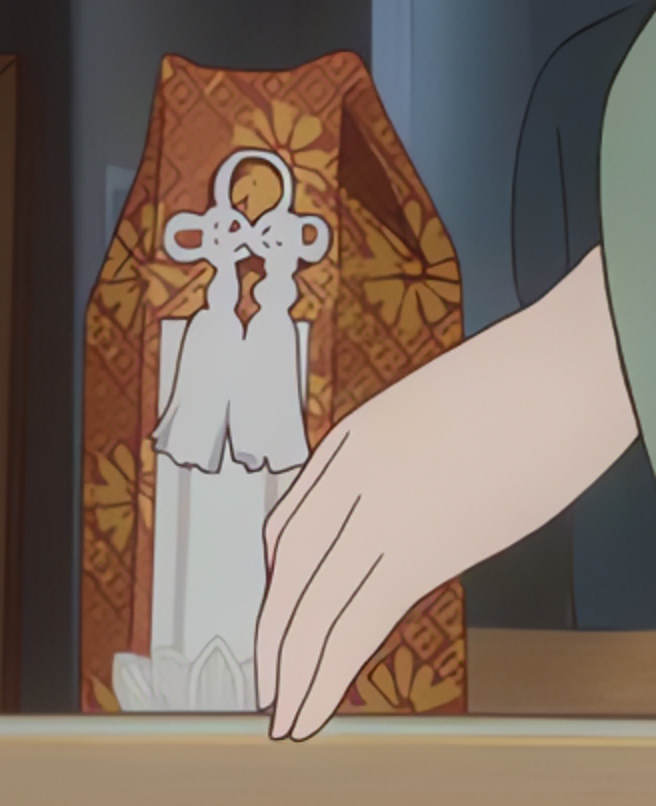

+## Random Examples (512*768) x4 scale factor

+

+

+# Origin README

+---

+language:

+- en

+license: creativeml-openrail-m

+tags:

+- stable-diffusion

+- stable-diffusion-diffusers

+- text-to-image

+- diffusers

+inference: true

+---

+

+# Anything V3

+

+Welcome to Anything V3 - a latent diffusion model for weebs. This model is intended to produce high-quality, highly detailed anime style with just a few prompts. Like other anime-style Stable Diffusion models, it also supports danbooru tags to generate images.

+

+e.g. **_1girl, white hair, golden eyes, beautiful eyes, detail, flower meadow, cumulonimbus clouds, lighting, detailed sky, garden_**

+

+## Gradio

+

+We support a [Gradio](https://github.com/gradio-app/gradio) Web UI to run Anything-V3.0:

+

+[Open in Spaces](https://huggingface.co/spaces/akhaliq/anything-v3.0)

+

+

+

+## 🧨 Diffusers

+

+This model can be used just like any other Stable Diffusion model. For more information,

+please have a look at the [Stable Diffusion](https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion).

+

+You can also export the model to [ONNX](https://huggingface.co/docs/diffusers/optimization/onnx), [MPS](https://huggingface.co/docs/diffusers/optimization/mps) and/or [FLAX/JAX]().

+

+```python

+from diffusers import StableDiffusionPipeline

+import torch

+

+model_id = "Linaqruf/anything-v3.0"

+pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16)

+pipe = pipe.to("cuda")

+

+prompt = "pikachu"

+image = pipe(prompt).images[0]

+

+image.save("./pikachu.png")

+```

+

+## Examples

+

+Below are some examples of images generated using this model:

+

+**Anime Girl:**

+

+```

+1girl, brown hair, green eyes, colorful, autumn, cumulonimbus clouds, lighting, blue sky, falling leaves, garden

+Steps: 50, Sampler: DDIM, CFG scale: 12

+```

+**Anime Boy:**

+

+```

+1boy, medium hair, blonde hair, blue eyes, bishounen, colorful, autumn, cumulonimbus clouds, lighting, blue sky, falling leaves, garden

+Steps: 50, Sampler: DDIM, CFG scale: 12

+```

+**Scenery:**

+

+```

+scenery, shibuya tokyo, post-apocalypse, ruins, rust, sky, skyscraper, abandoned, blue sky, broken window, building, cloud, crane machine, outdoors, overgrown, pillar, sunset

+Steps: 50, Sampler: DDIM, CFG scale: 12

+```
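
The settings line under each prompt can be mapped onto pipeline arguments. The sketch below parses such a line and builds keyword arguments using the `num_inference_steps` and `guidance_scale` parameter names of the diffusers Stable Diffusion pipeline call; the helper names `parse_settings` and `to_pipeline_kwargs` are hypothetical. The sampler is not a call argument, so it is left out here; in diffusers it would normally be chosen by assigning a scheduler such as `DDIMScheduler` to the pipeline.

```python
def parse_settings(line: str) -> dict:
    """Parse 'Steps: 50, Sampler: DDIM, CFG scale: 12' into a dict of strings."""
    settings = {}
    for part in line.split(","):
        key, _, value = part.partition(":")
        settings[key.strip()] = value.strip()
    return settings


def to_pipeline_kwargs(settings: dict) -> dict:
    """Map the parsed settings onto keyword arguments for the pipeline call."""
    return {
        "num_inference_steps": int(settings["Steps"]),
        "guidance_scale": float(settings["CFG scale"]),
    }


settings = parse_settings("Steps: 50, Sampler: DDIM, CFG scale: 12")
kwargs = to_pipeline_kwargs(settings)
print(settings["Sampler"], kwargs)
# Assumed usage with the pipeline from the Diffusers section:
# image = pipe(prompt, **kwargs).images[0]
```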

+

+## License

+

+This model is open access and available to all, with a CreativeML OpenRAIL-M license further specifying rights and usage.

+The CreativeML OpenRAIL License specifies:

+

+1. You can't use the model to deliberately produce nor share illegal or harmful outputs or content

+2. The authors claim no rights on the outputs you generate, you are free to use them and are accountable for their use which must not go against the provisions set in the license

+3. You may re-distribute the weights and use the model commercially and/or as a service. If you do, please be aware you have to include the same use restrictions as the ones in the license and share a copy of the CreativeML OpenRAIL-M to all your users (please read the license entirely and carefully)

+[Please read the full license here](https://huggingface.co/spaces/CompVis/stable-diffusion-license)

diff --git a/README_HG.md b/README_HG.md

new file mode 100644

index 0000000000000000000000000000000000000000..99a0776d1a4669fa8387cc77e162c60084100a92

--- /dev/null

+++ b/README_HG.md

@@ -0,0 +1,12 @@

+---

+title: Anything V3.0

+emoji: 🏃

+colorFrom: gray

+colorTo: yellow

+sdk: gradio

+sdk_version: 3.10.1

+app_file: app.py

+pinned: false

+---

+

+Check out the configuration reference at https://huggingface.co/docs/hub/spaces-config-reference

diff --git a/RealESRGANv030/.github/workflows/no-response.yml b/RealESRGANv030/.github/workflows/no-response.yml

new file mode 100644

index 0000000000000000000000000000000000000000..fa702eeacff13fe8475b0e102a8b8c37602f3963

--- /dev/null

+++ b/RealESRGANv030/.github/workflows/no-response.yml

@@ -0,0 +1,33 @@

+name: No Response

+

+# TODO: it seems not to work

+# Modified from: https://raw.githubusercontent.com/github/docs/main/.github/workflows/no-response.yaml

+

+# **What it does**: Closes issues that don't have enough information to be actionable.

+# **Why we have it**: To remove the need for maintainers to remember to check back on issues periodically

+# to see if contributors have responded.

+# **Who does it impact**: Everyone that works on docs or docs-internal.

+

+on:

+  issue_comment:

+    types: [created]

+

+  schedule:

+    # Schedule for five minutes after the hour every hour

+    - cron: '5 * * * *'

+

+jobs:

+  noResponse:

+    runs-on: ubuntu-latest

+    steps:

+      - uses: lee-dohm/no-response@v0.5.0

+        with:

+          token: ${{ github.token }}

+          closeComment: >

+            This issue has been automatically closed because there has been no response

+            to our request for more information from the original author. With only the

+            information that is currently in the issue, we don't have enough information

+            to take action. Please reach out if you have or find the answers we need so

+            that we can investigate further.

+            If you still have questions, please improve your description and re-open it.

+            Thanks :-)
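
The cron expression `5 * * * *` in this workflow fires at minute 5 of every hour. The sketch below lists the next few trigger times for that schedule; `next_runs` is a hypothetical helper written for illustration and is not a general cron parser. Scheduled workflows on GitHub are evaluated in UTC and can start later than the nominal time.

```python
from datetime import datetime, timedelta


def next_runs(start: datetime, count: int, minute: int = 5) -> list:
    """Return the next `count` times strictly after `start` that fall on `minute` past the hour."""
    candidate = start.replace(minute=minute, second=0, microsecond=0)
    if candidate <= start:
        # This hour's slot has already passed (or is exactly now), so move to the next hour.
        candidate += timedelta(hours=1)
    runs = []
    for _ in range(count):
        runs.append(candidate)
        candidate += timedelta(hours=1)
    return runs


for run in next_runs(datetime(2023, 1, 1, 10, 7), 3):
    print(run.isoformat())
```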

diff --git a/RealESRGANv030/.github/workflows/publish-pip.yml b/RealESRGANv030/.github/workflows/publish-pip.yml

new file mode 100644

index 0000000000000000000000000000000000000000..f3c8e574fd59fa9a4f3925eee9ee590dbdca965a

--- /dev/null

+++ b/RealESRGANv030/.github/workflows/publish-pip.yml

@@ -0,0 +1,33 @@

+name: PyPI Publish

+

+on: push

+

+jobs:

+  build-n-publish:

+    runs-on: ubuntu-latest

+    if: startsWith(github.event.ref, 'refs/tags')

+

+    steps:

+      - uses: actions/checkout@v2

+      - name: Set up Python 3.8

+        uses: actions/setup-python@v1

+        with:

+          python-version: 3.8

+      - name: Upgrade pip

+        run: pip install pip --upgrade

+      - name: Install PyTorch (cpu)

+        run: pip install torch==1.7.0+cpu torchvision==0.8.1+cpu -f https://download.pytorch.org/whl/torch_stable.html

+      - name: Install dependencies

+        run: |

+          pip install basicsr

+          pip install facexlib

+          pip install gfpgan

+          pip install -r requirements.txt

+      - name: Build and install

+        run: rm -rf .eggs && pip install -e .

+      - name: Build for distribution

+        run: python setup.py sdist bdist_wheel

+      - name: Publish distribution to PyPI

+        uses: pypa/gh-action-pypi-publish@master

+        with:

+          password: ${{ secrets.PYPI_API_TOKEN }}

diff --git a/RealESRGANv030/.github/workflows/pylint.yml b/RealESRGANv030/.github/workflows/pylint.yml

new file mode 100644

index 0000000000000000000000000000000000000000..2084d1aa236b948d8734b6762d3e01054580001a

--- /dev/null

+++ b/RealESRGANv030/.github/workflows/pylint.yml

@@ -0,0 +1,31 @@

+name: PyLint

+

+on: [push, pull_request]

+

+jobs:

+  build:

+

+    runs-on: ubuntu-latest

+    strategy:

+      matrix:

+        python-version: [3.8]

+

+    steps:

+      - uses: actions/checkout@v2

+      - name: Set up Python ${{ matrix.python-version }}

+        uses: actions/setup-python@v2

+        with:

+          python-version: ${{ matrix.python-version }}

+

+      - name: Install dependencies

+        run: |

+          python -m pip install --upgrade pip

+          pip install codespell flake8 isort yapf

+

+      # modify the folders accordingly

+      - name: Lint

+        run: |

+          codespell

+          flake8 .

+          isort --check-only --diff realesrgan/ scripts/ inference_realesrgan.py setup.py

+          yapf -r -d realesrgan/ scripts/ inference_realesrgan.py setup.py

diff --git a/RealESRGANv030/.github/workflows/release.yml b/RealESRGANv030/.github/workflows/release.yml

new file mode 100644

index 0000000000000000000000000000000000000000..18be9e5c31768ab3be3e1075500a35bcb5783434

--- /dev/null

+++ b/RealESRGANv030/.github/workflows/release.yml

@@ -0,0 +1,41 @@

+name: release

+on:

+  push:

+    tags:

+      - '*'

+

+jobs:

+  build:

+    permissions: write-all

+    name: Create Release

+    runs-on: ubuntu-latest

+    steps:

+      - name: Checkout code

+        uses: actions/checkout@v2

+      - name: Create Release

+        id: create_release

+        uses: actions/create-release@v1

+        env:

+          GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

+        with:

+          tag_name: ${{ github.ref }}

+          release_name: Real-ESRGAN ${{ github.ref }} Release Note

+          body: |

+            🚀 See you again 😸

+            🚀Have a nice day 😸 and happy everyday 😃

+            🚀 Long time no see ☄️

+

+            ✨ **Highlights**

+            ✅ [Features] Support ...

+

+            🐛 **Bug Fixes**

+

+            🌴 **Improvements**

+

+            📢📢📢

+

+

+

+          draft: true

+          prerelease: false

diff --git a/RealESRGANv030/.gitignore b/RealESRGANv030/.gitignore

new file mode 100644

index 0000000000000000000000000000000000000000..bb86ed0fd8a71305c7d8cc794bfa4591a5ccbc99

--- /dev/null

+++ b/RealESRGANv030/.gitignore

@@ -0,0 +1,140 @@

+# ignored folders

+datasets/*

+experiments/*

+results/*

+tb_logger/*

+wandb/*

+tmp/*

+weights/*

+

+version.py

+

+# Byte-compiled / optimized / DLL files

+__pycache__/

+*.py[cod]

+*$py.class

+

+# C extensions

+*.so

+

+# Distribution / packaging

+.Python

+build/

+develop-eggs/

+dist/

+downloads/

+eggs/

+.eggs/

+lib/

+lib64/

+parts/

+sdist/

+var/

+wheels/

+pip-wheel-metadata/

+share/python-wheels/

+*.egg-info/

+.installed.cfg

+*.egg

+MANIFEST

+

+# PyInstaller

+# Usually these files are written by a python script from a template

+# before PyInstaller builds the exe, so as to inject date/other infos into it.

+*.manifest

+*.spec

+

+# Installer logs

+pip-log.txt

+pip-delete-this-directory.txt

+

+# Unit test / coverage reports

+htmlcov/

+.tox/

+.nox/

+.coverage

+.coverage.*

+.cache

+nosetests.xml

+coverage.xml

+*.cover

+*.py,cover

+.hypothesis/

+.pytest_cache/

+

+# Translations

+*.mo

+*.pot

+

+# Django stuff:

+*.log

+local_settings.py

+db.sqlite3

+db.sqlite3-journal

+

+# Flask stuff:

+instance/

+.webassets-cache

+

+# Scrapy stuff:

+.scrapy

+

+# Sphinx documentation

+docs/_build/

+

+# PyBuilder

+target/

+

+# Jupyter Notebook

+.ipynb_checkpoints

+

+# IPython

+profile_default/

+ipython_config.py

+

+# pyenv

+.python-version

+

+# pipenv

+# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

+# However, in case of collaboration, if having platform-specific dependencies or dependencies

+# having no cross-platform support, pipenv may install dependencies that don't work, or not

+# install all needed dependencies.

+#Pipfile.lock

+

+# PEP 582; used by e.g. github.com/David-OConnor/pyflow

+__pypackages__/

+

+# Celery stuff

+celerybeat-schedule

+celerybeat.pid

+

+# SageMath parsed files

+*.sage.py

+

+# Environments

+.env

+.venv

+env/

+venv/

+ENV/

+env.bak/

+venv.bak/

+

+# Spyder project settings

+.spyderproject

+.spyproject

+

+# Rope project settings

+.ropeproject

+

+# mkdocs documentation

+/site

+

+# mypy

+.mypy_cache/

+.dmypy.json

+dmypy.json

+

+# Pyre type checker

+.pyre/

diff --git a/RealESRGANv030/.pre-commit-config.yaml b/RealESRGANv030/.pre-commit-config.yaml

new file mode 100644

index 0000000000000000000000000000000000000000..d221d29fbaac74bef1c0cd910ce8d8b6526181b8

--- /dev/null

+++ b/RealESRGANv030/.pre-commit-config.yaml

@@ -0,0 +1,46 @@

+repos:

+  # flake8

+  - repo: https://github.com/PyCQA/flake8

+    rev: 3.8.3

+    hooks:

+      - id: flake8

+        args: ["--config=setup.cfg", "--ignore=W504, W503"]

+

+  # modify known_third_party

+  - repo: https://github.com/asottile/seed-isort-config

+    rev: v2.2.0

+    hooks:

+      - id: seed-isort-config

+

+  # isort

+  - repo: https://github.com/timothycrosley/isort

+    rev: 5.2.2

+    hooks:

+      - id: isort

+

+  # yapf

+  - repo: https://github.com/pre-commit/mirrors-yapf

+    rev: v0.30.0

+    hooks:

+      - id: yapf

+

+  # codespell

+  - repo: https://github.com/codespell-project/codespell

+    rev: v2.1.0

+    hooks:

+      - id: codespell

+

+  # pre-commit-hooks

+  - repo: https://github.com/pre-commit/pre-commit-hooks

+    rev: v3.2.0

+    hooks:

+      - id: trailing-whitespace  # Trim trailing whitespace

+      - id: check-yaml  # Attempt to load all yaml files to verify syntax

+      - id: check-merge-conflict  # Check for files that contain merge conflict strings

+      - id: double-quote-string-fixer  # Replace double quoted strings with single quoted strings

+      - id: end-of-file-fixer  # Make sure files end in a newline and only a newline

+      - id: requirements-txt-fixer  # Sort entries in requirements.txt and remove incorrect entry for pkg-resources==0.0.0

+      - id: fix-encoding-pragma  # Remove the coding pragma: # -*- coding: utf-8 -*-

+        args: ["--remove"]

+      - id: mixed-line-ending  # Replace or check mixed line ending

+        args: ["--fix=lf"]

diff --git a/RealESRGANv030/.vscode/settings.json b/RealESRGANv030/.vscode/settings.json

new file mode 100644

index 0000000000000000000000000000000000000000..b12635534688a8a8c69033d81fad96ef734ea6bb

--- /dev/null

+++ b/RealESRGANv030/.vscode/settings.json

@@ -0,0 +1,19 @@

+{

+ "files.trimTrailingWhitespace": true,

+ "editor.wordWrap": "on",

+ "editor.rulers": [

+ 80,

+ 120

+ ],

+ "editor.renderWhitespace": "all",

+ "editor.renderControlCharacters": true,

+ "python.formatting.provider": "yapf",

+ "python.formatting.yapfArgs": [

+ "--style",

+ "{BASED_ON_STYLE = pep8, BLANK_LINE_BEFORE_NESTED_CLASS_OR_DEF = true, SPLIT_BEFORE_EXPRESSION_AFTER_OPENING_PAREN = true, COLUMN_LIMIT = 120}"

+ ],

+ "python.linting.flake8Enabled": true,

+ "python.linting.flake8Args": [

+ "max-line-length=120"

+ ],

+}

diff --git a/RealESRGANv030/CODE_OF_CONDUCT.md b/RealESRGANv030/CODE_OF_CONDUCT.md

new file mode 100644

index 0000000000000000000000000000000000000000..e8cc4daa4345590464314889b187d6a2d7a8e20f

--- /dev/null

+++ b/RealESRGANv030/CODE_OF_CONDUCT.md

@@ -0,0 +1,128 @@

+# Contributor Covenant Code of Conduct

+

+## Our Pledge

+

+We as members, contributors, and leaders pledge to make participation in our

+community a harassment-free experience for everyone, regardless of age, body

+size, visible or invisible disability, ethnicity, sex characteristics, gender

+identity and expression, level of experience, education, socio-economic status,

+nationality, personal appearance, race, religion, or sexual identity

+and orientation.

+

+We pledge to act and interact in ways that contribute to an open, welcoming,

+diverse, inclusive, and healthy community.

+

+## Our Standards

+

+Examples of behavior that contributes to a positive environment for our

+community include:

+

+* Demonstrating empathy and kindness toward other people

+* Being respectful of differing opinions, viewpoints, and experiences

+* Giving and gracefully accepting constructive feedback

+* Accepting responsibility and apologizing to those affected by our mistakes,

+ and learning from the experience

+* Focusing on what is best not just for us as individuals, but for the

+ overall community

+

+Examples of unacceptable behavior include:

+

+* The use of sexualized language or imagery, and sexual attention or

+ advances of any kind

+* Trolling, insulting or derogatory comments, and personal or political attacks

+* Public or private harassment

+* Publishing others' private information, such as a physical or email

+ address, without their explicit permission

+* Other conduct which could reasonably be considered inappropriate in a

+ professional setting

+

+## Enforcement Responsibilities

+

+Community leaders are responsible for clarifying and enforcing our standards of

+acceptable behavior and will take appropriate and fair corrective action in

+response to any behavior that they deem inappropriate, threatening, offensive,

+or harmful.

+

+Community leaders have the right and responsibility to remove, edit, or reject

+comments, commits, code, wiki edits, issues, and other contributions that are

+not aligned to this Code of Conduct, and will communicate reasons for moderation

+decisions when appropriate.

+

+## Scope

+

+This Code of Conduct applies within all community spaces, and also applies when

+an individual is officially representing the community in public spaces.

+Examples of representing our community include using an official e-mail address,

+posting via an official social media account, or acting as an appointed

+representative at an online or offline event.

+

+## Enforcement

+

+Instances of abusive, harassing, or otherwise unacceptable behavior may be

+reported to the community leaders responsible for enforcement at

+xintao.wang@outlook.com or xintaowang@tencent.com.

+All complaints will be reviewed and investigated promptly and fairly.

+

+All community leaders are obligated to respect the privacy and security of the

+reporter of any incident.

+

+## Enforcement Guidelines

+

+Community leaders will follow these Community Impact Guidelines in determining

+the consequences for any action they deem in violation of this Code of Conduct:

+

+### 1. Correction

+

+**Community Impact**: Use of inappropriate language or other behavior deemed

+unprofessional or unwelcome in the community.

+

+**Consequence**: A private, written warning from community leaders, providing

+clarity around the nature of the violation and an explanation of why the

+behavior was inappropriate. A public apology may be requested.

+

+### 2. Warning

+

+**Community Impact**: A violation through a single incident or series

+of actions.

+

+**Consequence**: A warning with consequences for continued behavior. No

+interaction with the people involved, including unsolicited interaction with

+those enforcing the Code of Conduct, for a specified period of time. This

+includes avoiding interactions in community spaces as well as external channels

+like social media. Violating these terms may lead to a temporary or

+permanent ban.

+

+### 3. Temporary Ban

+

+**Community Impact**: A serious violation of community standards, including

+sustained inappropriate behavior.

+

+**Consequence**: A temporary ban from any sort of interaction or public

+communication with the community for a specified period of time. No public or

+private interaction with the people involved, including unsolicited interaction

+with those enforcing the Code of Conduct, is allowed during this period.

+Violating these terms may lead to a permanent ban.

+

+### 4. Permanent Ban

+

+**Community Impact**: Demonstrating a pattern of violation of community

+standards, including sustained inappropriate behavior, harassment of an

+individual, or aggression toward or disparagement of classes of individuals.

+

+**Consequence**: A permanent ban from any sort of public interaction within

+the community.

+

+## Attribution

+

+This Code of Conduct is adapted from the [Contributor Covenant][homepage],

+version 2.0, available at

+https://www.contributor-covenant.org/version/2/0/code_of_conduct.html.

+

+Community Impact Guidelines were inspired by [Mozilla's code of conduct

+enforcement ladder](https://github.com/mozilla/diversity).

+

+[homepage]: https://www.contributor-covenant.org

+

+For answers to common questions about this code of conduct, see the FAQ at

+https://www.contributor-covenant.org/faq. Translations are available at

+https://www.contributor-covenant.org/translations.

diff --git a/RealESRGANv030/LICENSE b/RealESRGANv030/LICENSE

new file mode 100644

index 0000000000000000000000000000000000000000..552a1eeaf01f4e7077013ed3496600c608f35202

--- /dev/null

+++ b/RealESRGANv030/LICENSE

@@ -0,0 +1,29 @@

+BSD 3-Clause License

+

+Copyright (c) 2021, Xintao Wang

+All rights reserved.

+

+Redistribution and use in source and binary forms, with or without

+modification, are permitted provided that the following conditions are met:

+

+1. Redistributions of source code must retain the above copyright notice, this

+ list of conditions and the following disclaimer.

+

+2. Redistributions in binary form must reproduce the above copyright notice,

+ this list of conditions and the following disclaimer in the documentation

+ and/or other materials provided with the distribution.

+

+3. Neither the name of the copyright holder nor the names of its

+ contributors may be used to endorse or promote products derived from

+ this software without specific prior written permission.

+

+THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS "AS IS"

+AND ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE

+IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE ARE

+DISCLAIMED. IN NO EVENT SHALL THE COPYRIGHT HOLDER OR CONTRIBUTORS BE LIABLE

+FOR ANY DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL

+DAMAGES (INCLUDING, BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR

+SERVICES; LOSS OF USE, DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER

+CAUSED AND ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY,

+OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE

+OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

diff --git a/RealESRGANv030/MANIFEST.in b/RealESRGANv030/MANIFEST.in

new file mode 100644

index 0000000000000000000000000000000000000000..b87c827c894c82b5530c1267ea1d57e86c5f515b

--- /dev/null

+++ b/RealESRGANv030/MANIFEST.in

@@ -0,0 +1,8 @@

+include assets/*

+include inputs/*

+include scripts/*.py

+include inference_realesrgan.py

+include VERSION

+include LICENSE

+include requirements.txt

+include weights/README.md

diff --git a/RealESRGANv030/README.md b/RealESRGANv030/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..118e930c12bffd9e6da1df03180f5c9a8dcaabc3

--- /dev/null

+++ b/RealESRGANv030/README.md

@@ -0,0 +1,272 @@

+


+

+

+

+👀[**Demos**](#-demos-videos) **|** 🚩[**Updates**](#-updates) **|** ⚡[**Usage**](#-quick-inference) **|** 🏰[**Model Zoo**](docs/model_zoo.md) **|** 🔧[Install](#-dependencies-and-installation) **|** 💻[Train](docs/Training.md) **|** ❓[FAQ](docs/FAQ.md) **|** 🎨[Contribution](docs/CONTRIBUTING.md)

+


+

+

+

+🔥 **AnimeVideo-v3 model (动漫视频小模型)**. Please see [[*anime video models*](docs/anime_video_model.md)] and [[*comparisons*](docs/anime_comparisons.md)]

+🔥 **RealESRGAN_x4plus_anime_6B** for anime images **(动漫插图模型)**. Please see [[*anime_model*](docs/anime_model.md)]

+

+

+1. :boom: **Update** online Replicate demo: [Replicate](https://replicate.com/xinntao/realesrgan)

+1. Online Colab demo for Real-ESRGAN: [Colab](https://colab.research.google.com/drive/1k2Zod6kSHEvraybHl50Lys0LerhyTMCo?usp=sharing) **|** Online Colab demo for Real-ESRGAN (**anime videos**): [Colab](https://colab.research.google.com/drive/1yNl9ORUxxlL4N0keJa2SEPB61imPQd1B?usp=sharing)

+1. Portable [Windows](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-windows.zip) / [Linux](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-ubuntu.zip) / [MacOS](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-macos.zip) **executable files for Intel/AMD/Nvidia GPU**. You can find more information [here](#portable-executable-files-ncnn). The ncnn implementation is in [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan)

+

+

+Real-ESRGAN aims at developing **Practical Algorithms for General Image/Video Restoration**.

+We extend the powerful ESRGAN to a practical restoration application (namely, Real-ESRGAN), which is trained with pure synthetic data.

+

+🌌 Thanks for your valuable feedback and suggestions. All feedback is collected in [feedback.md](docs/feedback.md).

+

+---

+

+If Real-ESRGAN is helpful, please help to ⭐ this repo or recommend it to your friends 😊

+Other recommended projects:

+▶️ [GFPGAN](https://github.com/TencentARC/GFPGAN): A practical algorithm for real-world face restoration

+▶️ [BasicSR](https://github.com/xinntao/BasicSR): An open-source image and video restoration toolbox

+▶️ [facexlib](https://github.com/xinntao/facexlib): A collection that provides useful face-related functions.

+▶️ [HandyView](https://github.com/xinntao/HandyView): A PyQt5-based image viewer that is handy for viewing and comparison

+▶️ [HandyFigure](https://github.com/xinntao/HandyFigure): Open source of paper figures

+

+---

+

+### 📖 Real-ESRGAN: Training Real-World Blind Super-Resolution with Pure Synthetic Data

+

+> [[Paper](https://arxiv.org/abs/2107.10833)] [[YouTube Video](https://www.youtube.com/watch?v=fxHWoDSSvSc)] [[B站讲解](https://www.bilibili.com/video/BV1H34y1m7sS/)] [[Poster](https://xinntao.github.io/projects/RealESRGAN_src/RealESRGAN_poster.pdf)] [[PPT slides](https://docs.google.com/presentation/d/1QtW6Iy8rm8rGLsJ0Ldti6kP-7Qyzy6XL/edit?usp=sharing&ouid=109799856763657548160&rtpof=true&sd=true)]

+> [Xintao Wang](https://xinntao.github.io/), Liangbin Xie, [Chao Dong](https://scholar.google.com.hk/citations?user=OSDCB0UAAAAJ), [Ying Shan](https://scholar.google.com/citations?user=4oXBp9UAAAAJ&hl=en)

+> [Tencent ARC Lab](https://arc.tencent.com/en/ai-demos/imgRestore); Shenzhen Institutes of Advanced Technology, Chinese Academy of Sciences

+

+


+

+

+---

+

+

+## 🚩 Updates

+

+- ✅ Add the **realesr-general-x4v3** model - a tiny model for general scenes. It also supports the **--dn** option to balance the noise (avoiding over-smooth results). **--dn** is short for denoising strength.

+- ✅ Update the **RealESRGAN AnimeVideo-v3** model. Please see [anime video models](docs/anime_video_model.md) and [comparisons](docs/anime_comparisons.md) for more details.

+- ✅ Add small models for anime videos. More details are in [anime video models](docs/anime_video_model.md).

+- ✅ Add the ncnn implementation [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan).

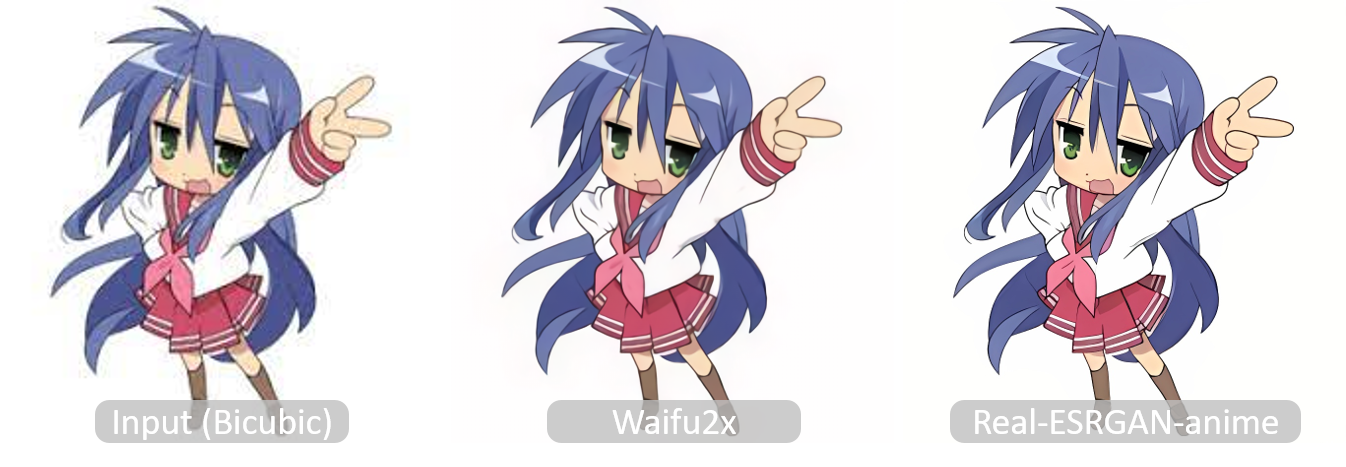

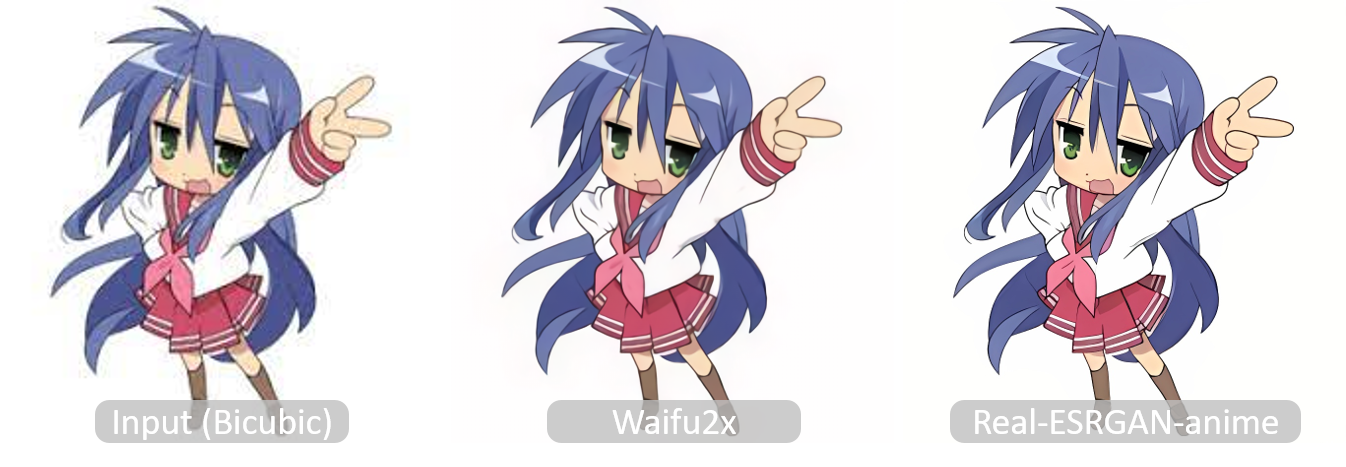

+- ✅ Add [*RealESRGAN_x4plus_anime_6B.pth*](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth), which is optimized for **anime** images with much smaller model size. More details and comparisons with [waifu2x](https://github.com/nihui/waifu2x-ncnn-vulkan) are in [**anime_model.md**](docs/anime_model.md)

+- ✅ Support finetuning on your own data or paired data (*i.e.*, finetuning ESRGAN). See [here](docs/Training.md#Finetune-Real-ESRGAN-on-your-own-dataset)

+- ✅ Integrate [GFPGAN](https://github.com/TencentARC/GFPGAN) to support **face enhancement**.

+- ✅ Integrated into [Huggingface Spaces](https://huggingface.co/spaces) with [Gradio](https://github.com/gradio-app/gradio). See [Gradio Web Demo](https://huggingface.co/spaces/akhaliq/Real-ESRGAN). Thanks to [@AK391](https://github.com/AK391).

+- ✅ Support arbitrary scale with `--outscale` (It actually further resizes outputs with `LANCZOS4`). Add *RealESRGAN_x2plus.pth* model.

+- ✅ [The inference code](inference_realesrgan.py) supports: 1) **tile** options; 2) images with **alpha channel**; 3) **gray** images; 4) **16-bit** images.

+- ✅ The training codes have been released. A detailed guide can be found in [Training.md](docs/Training.md).

+

+---

+

+

+## 👀 Demo Videos

+

+#### Bilibili

+

+- [Havoc in Heaven (大闹天宫) clip](https://www.bilibili.com/video/BV1ja41117zb)
+- [Anime dance cut](https://www.bilibili.com/video/BV1wY4y1L7hT/)
+- [One Piece (海贼王) clip](https://www.bilibili.com/video/BV1i3411L7Gy/)

+

+#### YouTube

+

+## 🔧 Dependencies and Installation

+

+- Python >= 3.7 (we recommend [Anaconda](https://www.anaconda.com/download/#linux) or [Miniconda](https://docs.conda.io/en/latest/miniconda.html))

+- [PyTorch >= 1.7](https://pytorch.org/)

+

+### Installation

+

+1. Clone repo

+

+ ```bash

+ git clone https://github.com/xinntao/Real-ESRGAN.git

+ cd Real-ESRGAN

+ ```

+

+1. Install dependent packages

+

+ ```bash

+ # Install basicsr - https://github.com/xinntao/BasicSR

+ # We use BasicSR for both training and inference

+ pip install basicsr

+ # facexlib and gfpgan are for face enhancement

+ pip install facexlib

+ pip install gfpgan

+ pip install -r requirements.txt

+ python setup.py develop

+ ```

+

+---

+

+## ⚡ Quick Inference

+

+There are three common ways to run inference with Real-ESRGAN.

+

+1. [Online inference](#online-inference)

+1. [Portable executable files (NCNN)](#portable-executable-files-ncnn)

+1. [Python script](#python-script)

+

+### Online inference

+

+1. You can try it on our website: [ARC Demo](https://arc.tencent.com/en/ai-demos/imgRestore) (currently supports only RealESRGAN_x4plus_anime_6B)

+1. [Colab Demo](https://colab.research.google.com/drive/1k2Zod6kSHEvraybHl50Lys0LerhyTMCo?usp=sharing) for Real-ESRGAN **|** [Colab Demo](https://colab.research.google.com/drive/1yNl9ORUxxlL4N0keJa2SEPB61imPQd1B?usp=sharing) for Real-ESRGAN (**anime videos**).

+

+### Portable executable files (NCNN)

+

+You can download [Windows](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-windows.zip) / [Linux](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-ubuntu.zip) / [MacOS](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-macos.zip) **executable files for Intel/AMD/Nvidia GPU**.

+

+This executable file is **portable** and includes all the binaries and models required. No CUDA or PyTorch environment is needed.

+

+You can simply run the following command (a Windows example; more information is in the README.md shipped with each executable):

+

+```bash

+./realesrgan-ncnn-vulkan.exe -i input.jpg -o output.png -n model_name

+```

+

+We have provided four models:

+

+1. realesrgan-x4plus (default)

+2. realesrnet-x4plus

+3. realesrgan-x4plus-anime (optimized for anime images, small model size)

+4. realesr-animevideov3 (animation video)

+

+You can use the `-n` argument for other models, for example, `./realesrgan-ncnn-vulkan.exe -i input.jpg -o output.png -n realesrnet-x4plus`
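The command line above can also be assembled from a script. The following is a minimal sketch, not part of the repository: `build_ncnn_command` and `KNOWN_MODELS` are hypothetical names, and the default executable path is an assumption taken from the Windows example. It only builds the argument list; running it (for example with `subprocess.run`) is left to the caller.

```python
from typing import List

# Model names as listed in this README for the portable executable.
KNOWN_MODELS = (
    "realesrgan-x4plus",
    "realesrnet-x4plus",
    "realesrgan-x4plus-anime",
    "realesr-animevideov3",
)


def build_ncnn_command(
    input_path: str,
    output_path: str,
    model_name: str = "realesrgan-x4plus",
    exe: str = "./realesrgan-ncnn-vulkan.exe",  # assumed location of the executable
) -> List[str]:
    """Return the argument list for one run of the portable executable."""
    if model_name not in KNOWN_MODELS:
        raise ValueError(f"unknown model name: {model_name}")
    # Only the -i, -o and -n flags shown in the README are used here.
    return [exe, "-i", input_path, "-o", output_path, "-n", model_name]


if __name__ == "__main__":
    print(" ".join(build_ncnn_command("input.jpg", "output.png", "realesrnet-x4plus")))
```

Passing the arguments as a list avoids shell quoting problems when file names contain spaces.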

+

+#### Usage of portable executable files

+

+1. Please refer to [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan#computer-usages) for more details.

+1. Note that it does not support all the functions of the Python script `inference_realesrgan.py` (such as `outscale`).

+

+```console

+Usage: realesrgan-ncnn-vulkan.exe -i infile -o outfile [options]...

+

+ -h show this help

+ -i input-path input image path (jpg/png/webp) or directory

+ -o output-path output image path (jpg/png/webp) or directory

+ -s scale upscale ratio (can be 2, 3, 4. default=4)

+ -t tile-size tile size (>=32/0=auto, default=0) can be 0,0,0 for multi-gpu

+ -m model-path folder path to the pre-trained models. default=models

+ -n model-name model name (default=realesr-animevideov3, can be realesr-animevideov3 | realesrgan-x4plus | realesrgan-x4plus-anime | realesrnet-x4plus)

+ -g gpu-id gpu device to use (default=auto) can be 0,1,2 for multi-gpu

+ -j load:proc:save thread count for load/proc/save (default=1:2:2) can be 1:2,2,2:2 for multi-gpu

+ -x enable tta mode

+ -f format output image format (jpg/png/webp, default=ext/png)

+ -v verbose output

+```

+

+Note that the executable may introduce block inconsistency (and produce slightly different results from the PyTorch implementation), because it first crops the input image into several tiles, processes them separately, and finally stitches them back together.
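To illustrate why tiling can cause block inconsistency, here is a small pure-Python sketch. It is not the executable's implementation: `tile_boxes`, `process_tiled`, and `shift_to_zero` are hypothetical names, the "image" is a nested list, and the per-tile function keeps the tile size unchanged instead of upscaling it.

```python
from typing import Callable, List, Tuple

Image = List[List[int]]  # rows of pixel values


def tile_boxes(width: int, height: int, tile: int) -> List[Tuple[int, int, int, int]]:
    """Return (x0, y0, x1, y1) boxes covering the image in row-major order."""
    boxes = []
    for y0 in range(0, height, tile):
        for x0 in range(0, width, tile):
            boxes.append((x0, y0, min(x0 + tile, width), min(y0 + tile, height)))
    return boxes


def process_tiled(img: Image, tile: int, fn: Callable[[Image], Image]) -> Image:
    """Crop img into tiles, apply fn to each tile separately, and stitch the results."""
    height = len(img)
    width = len(img[0]) if img else 0
    out = [[0] * width for _ in range(height)]
    for x0, y0, x1, y1 in tile_boxes(width, height, tile):
        patch = [row[x0:x1] for row in img[y0:y1]]
        result = fn(patch)  # assumed to return a patch of the same shape
        for dy, row in enumerate(result):
            out[y0 + dy][x0:x1] = row
    return out


def shift_to_zero(patch: Image) -> Image:
    """A toy operation that depends on the whole patch: subtract its minimum."""
    lowest = min(min(row) for row in patch)
    return [[value - lowest for value in row] for row in patch]
```

Because `shift_to_zero` depends on the content of whatever region it sees, `process_tiled([[0, 1, 2, 3]], 2, shift_to_zero)` differs from `shift_to_zero([[0, 1, 2, 3]])`. A network whose output depends on surrounding context behaves similarly at tile borders.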

+

+### Python script

+

+#### Usage of python script

+

+1. You can use the X4 model for **arbitrary output size** with the argument `outscale`. The program performs an additional, inexpensive resize operation on the Real-ESRGAN output.

+

+```console

+Usage: python inference_realesrgan.py -n RealESRGAN_x4plus -i infile -o outfile [options]...

+

+A common command: python inference_realesrgan.py -n RealESRGAN_x4plus -i infile --outscale 3.5 --face_enhance

+

+ -h show this help

+ -i --input Input image or folder. Default: inputs

+ -o --output Output folder. Default: results

+ -n --model_name Model name. Default: RealESRGAN_x4plus

+ -s, --outscale The final upsampling scale of the image. Default: 4

+ --suffix Suffix of the restored image. Default: out

+ -t, --tile Tile size, 0 for no tile during testing. Default: 0

+ --face_enhance Whether to use GFPGAN to enhance face. Default: False

+ --fp32 Use fp32 precision during inference. Default: fp16 (half precision).

+ --ext Image extension. Options: auto | jpg | png, auto means using the same extension as inputs. Default: auto

+```
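The effect of `--outscale` on image size can be sketched as simple arithmetic. This is an illustration under assumptions rather than the script's code: `final_size` is a hypothetical helper, and it assumes the final size is the input size multiplied by `outscale` and truncated to an integer.

```python
from typing import Tuple

Size = Tuple[int, int]  # (width, height)


def final_size(width: int, height: int, outscale: float, model_scale: int = 4) -> Tuple[Size, Size, bool]:
    """Return (model output size, final size, whether an extra resize is needed)."""
    # The network always upsamples by its native factor (4 for an X4 model).
    model_out = (width * model_scale, height * model_scale)
    # Assumption: the final size is the input size times outscale, truncated.
    target = (int(width * outscale), int(height * outscale))
    needs_resize = float(outscale) != float(model_scale)
    return model_out, target, needs_resize
```

For a 100x50 input with `--outscale 3.5`, the X4 model first produces 400x200, which is then resized to 350x175. With `--outscale 4` no extra resize is needed.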

+

+#### Inference general images

+

+Download pre-trained models: [RealESRGAN_x4plus.pth](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth)

+

+```bash

+wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth -P weights

+```
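On systems without `wget`, the same download can be done from Python. The sketch below uses only the standard library. `weight_path` and `ensure_weight` are hypothetical helper names, not part of the repository, and the `weights` folder matches the `-P weights` argument above.

```python
import os
import urllib.request


def weight_path(url: str, weights_dir: str = "weights") -> str:
    """Return the local path where the file behind url would be stored."""
    return os.path.join(weights_dir, os.path.basename(url))


def ensure_weight(url: str, weights_dir: str = "weights") -> str:
    """Download the file once; reuse the local copy on later calls."""
    path = weight_path(url, weights_dir)
    if not os.path.exists(path):
        os.makedirs(weights_dir, exist_ok=True)
        urllib.request.urlretrieve(url, path)
    return path
```

For example, `ensure_weight("https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth")` would store the model as `weights/RealESRGAN_x4plus.pth`.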

+

+Inference!

+

+```bash

+python inference_realesrgan.py -n RealESRGAN_x4plus -i inputs --face_enhance

+```

+

+Results are in the `results` folder

+

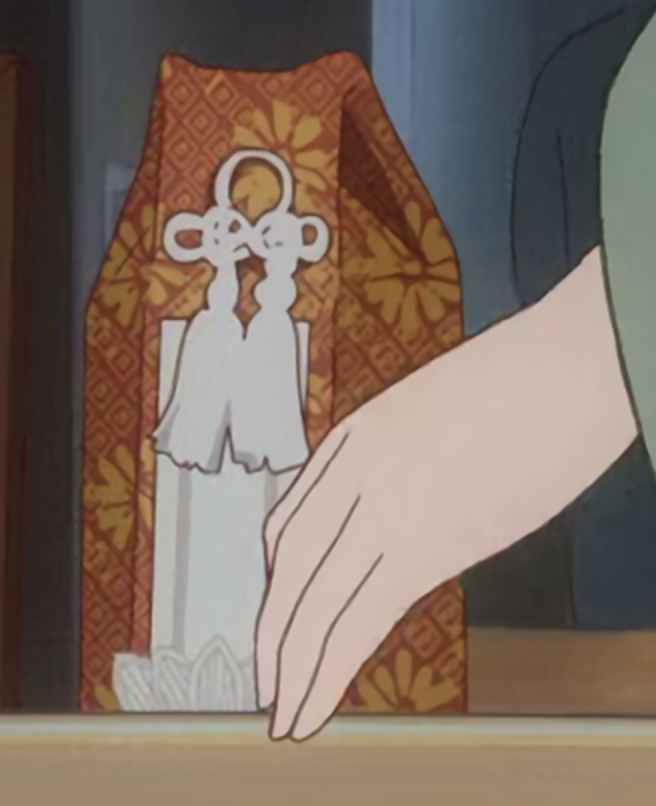

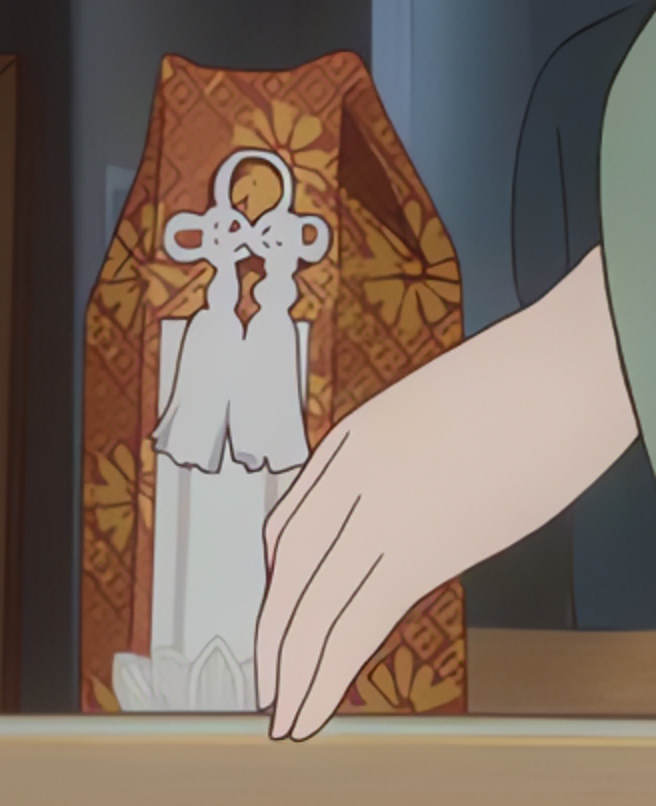

+#### Inference anime images

+

+

+

+

+Pre-trained models: [RealESRGAN_x4plus_anime_6B](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth)

+ More details and comparisons with [waifu2x](https://github.com/nihui/waifu2x-ncnn-vulkan) are in [**anime_model.md**](docs/anime_model.md)

+

+```bash

+# download model

+wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth -P weights

+# inference

+python inference_realesrgan.py -n RealESRGAN_x4plus_anime_6B -i inputs

+```

+

+Results are in the `results` folder

+

+---

+

+## BibTeX

+

+ @InProceedings{wang2021realesrgan,

+ author = {Xintao Wang and Liangbin Xie and Chao Dong and Ying Shan},

+ title = {Real-ESRGAN: Training Real-World Blind Super-Resolution with Pure Synthetic Data},

+ booktitle = {International Conference on Computer Vision Workshops (ICCVW)},

+ date = {2021}

+ }

+

+## 📧 Contact

+

+If you have any questions, please email `xintao.wang@outlook.com` or `xintaowang@tencent.com`.

+

+

+## 🧩 Projects that use Real-ESRGAN

+

+If you develop or use Real-ESRGAN in your projects, you are welcome to let me know.

+

+- NCNN-Android: [RealSR-NCNN-Android](https://github.com/tumuyan/RealSR-NCNN-Android) by [tumuyan](https://github.com/tumuyan)

+- VapourSynth: [vs-realesrgan](https://github.com/HolyWu/vs-realesrgan) by [HolyWu](https://github.com/HolyWu)

+- NCNN: [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan)

+

+ **GUI**

+

+- [Waifu2x-Extension-GUI](https://github.com/AaronFeng753/Waifu2x-Extension-GUI) by [AaronFeng753](https://github.com/AaronFeng753)

+- [Squirrel-RIFE](https://github.com/Justin62628/Squirrel-RIFE) by [Justin62628](https://github.com/Justin62628)

+- [Real-GUI](https://github.com/scifx/Real-GUI) by [scifx](https://github.com/scifx)

+- [Real-ESRGAN_GUI](https://github.com/net2cn/Real-ESRGAN_GUI) by [net2cn](https://github.com/net2cn)

+- [Real-ESRGAN-EGUI](https://github.com/WGzeyu/Real-ESRGAN-EGUI) by [WGzeyu](https://github.com/WGzeyu)

+- [anime_upscaler](https://github.com/shangar21/anime_upscaler) by [shangar21](https://github.com/shangar21)

+- [Upscayl](https://github.com/upscayl/upscayl) by [Nayam Amarshe](https://github.com/NayamAmarshe) and [TGS963](https://github.com/TGS963)

+

+## 🤗 Acknowledgement

+

+Thanks to all the contributors.

+

+- [AK391](https://github.com/AK391): Integrate RealESRGAN to [Huggingface Spaces](https://huggingface.co/spaces) with [Gradio](https://github.com/gradio-app/gradio). See [Gradio Web Demo](https://huggingface.co/spaces/akhaliq/Real-ESRGAN).

+- [Asiimoviet](https://github.com/Asiimoviet): Translate the README.md to Chinese (中文).

+- [2ji3150](https://github.com/2ji3150): Thanks for the [detailed and valuable feedback and suggestions](https://github.com/xinntao/Real-ESRGAN/issues/131).

+- [Jared-02](https://github.com/Jared-02): Translate the Training.md to Chinese (中文).

diff --git a/RealESRGANv030/README_CN.md b/RealESRGANv030/README_CN.md

new file mode 100644

index 0000000000000000000000000000000000000000..fda1217bec600c5dcea72624c13533be6b71453e

--- /dev/null

+++ b/RealESRGANv030/README_CN.md

@@ -0,0 +1,276 @@

+


+

+:fire: Update the small anime video model **RealESRGAN AnimeVideo-v3**. More details are in [[anime video models](docs/anime_video_model.md)] and [[comparisons](docs/anime_comparisons_CN.md)].
+
+1. [Colab Demo](https://colab.research.google.com/drive/1k2Zod6kSHEvraybHl50Lys0LerhyTMCo?usp=sharing) for Real-ESRGAN | [Colab Demo](https://colab.research.google.com/drive/1yNl9ORUxxlL4N0keJa2SEPB61imPQd1B?usp=sharing) for Real-ESRGAN (**anime videos**)
+2. Portable executable files **for Intel/AMD/Nvidia GPUs**: [Windows](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-windows.zip) / [Linux](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-ubuntu.zip) / [macOS](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-macos.zip). See [here](#便携版(绿色版)可执行文件) for details. The NCNN implementation is in [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan).
+
+Real-ESRGAN aims to develop **practical algorithms for image/video restoration**.
+We extend ESRGAN by training with pure synthetic data so that it can be applied to real-world image restoration (hence the name Real-ESRGAN).
+
+:art: Real-ESRGAN needs, and welcomes, your contributions, such as new features, models, bug fixes, suggestions, and maintenance. See [CONTRIBUTING.md](docs/CONTRIBUTING.md) for details. All contributors are listed [here](README_CN.md#hugs-感谢).
+
+:milky_way: Thanks for all the valuable feedback. It is gradually being collected in [this document](docs/feedback.md).
+
+:question: Answers to frequently asked questions can be found in [FAQ.md](docs/FAQ.md). (Well, it is still mostly empty for now.)
+
+---
+
+If Real-ESRGAN is helpful to you, please give this project a Star :star: or recommend it to your friends. Thanks! :blush:
+Other recommended projects:
+:arrow_forward: [GFPGAN](https://github.com/TencentARC/GFPGAN): a practical algorithm for face restoration
+:arrow_forward: [BasicSR](https://github.com/xinntao/BasicSR): an open-source image and video toolbox
+:arrow_forward: [facexlib](https://github.com/xinntao/facexlib): a collection of face-related tools
+:arrow_forward: [HandyView](https://github.com/xinntao/HandyView): a PyQt5-based image viewer that is handy for viewing and comparison

+

+---

+

+

+

+🚩 Updates
+
+- ✅ Update the small anime video model **RealESRGAN AnimeVideo-v3**. More details are in [anime video models](docs/anime_video_model.md) and [comparisons](docs/anime_comparisons.md).
+- ✅ Add small models for anime videos. More details are in [anime video models](docs/anime_video_model.md).
+- ✅ Add the ncnn implementation [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan).
+- ✅ Add [*RealESRGAN_x4plus_anime_6B.pth*](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth), which is optimized for anime images and has a smaller model size. Details and comparisons with [waifu2x](https://github.com/nihui/waifu2x-ncnn-vulkan) are in [**anime_model.md**](docs/anime_model.md)
+- ✅ Support finetuning on your own data: [details](docs/Training.md#Finetune-Real-ESRGAN-on-your-own-dataset)
+- ✅ Support **face enhancement** with [GFPGAN](https://github.com/TencentARC/GFPGAN)
+- ✅ Integrated into [Huggingface Spaces](https://huggingface.co/spaces) (an online platform for machine learning apps) with [Gradio](https://github.com/gradio-app/gradio): [Gradio Web Demo](https://huggingface.co/spaces/akhaliq/Real-ESRGAN). Thanks to [@AK391](https://github.com/AK391)
+- ✅ Support arbitrary scale with `--outscale` (it actually further resizes the output image with `LANCZOS4`). Add the *RealESRGAN_x2plus.pth* model
+- ✅ [The inference script](inference_realesrgan.py) supports: 1) **tile** processing; 2) images with an **alpha channel**; 3) **gray** images; 4) **16-bit** images.
+- ✅ The training code has been released. See [Training.md](docs/Training.md) for a guide.

+

+

+

+

+

+🧩 Projects that use Real-ESRGAN
+
+ 👋 If you develop, use, or integrate Real-ESRGAN, you are welcome to contact me to have your project added

+

+- NCNN-Android: [RealSR-NCNN-Android](https://github.com/tumuyan/RealSR-NCNN-Android) by [tumuyan](https://github.com/tumuyan)

+- VapourSynth: [vs-realesrgan](https://github.com/HolyWu/vs-realesrgan) by [HolyWu](https://github.com/HolyWu)

+- NCNN: [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan)

+

+ **Easy-to-use GUIs**

+

+- [Waifu2x-Extension-GUI](https://github.com/AaronFeng753/Waifu2x-Extension-GUI) by [AaronFeng753](https://github.com/AaronFeng753)

+- [Squirrel-RIFE](https://github.com/Justin62628/Squirrel-RIFE) by [Justin62628](https://github.com/Justin62628)

+- [Real-GUI](https://github.com/scifx/Real-GUI) by [scifx](https://github.com/scifx)

+- [Real-ESRGAN_GUI](https://github.com/net2cn/Real-ESRGAN_GUI) by [net2cn](https://github.com/net2cn)

+- [Real-ESRGAN-EGUI](https://github.com/WGzeyu/Real-ESRGAN-EGUI) by [WGzeyu](https://github.com/WGzeyu)

+- [anime_upscaler](https://github.com/shangar21/anime_upscaler) by [shangar21](https://github.com/shangar21)

+- [RealESRGAN-GUI](https://github.com/Baiyuetribe/paper2gui/blob/main/Video%20Super%20Resolution/RealESRGAN-GUI.md) by [Baiyuetribe](https://github.com/Baiyuetribe)

+

+

+

+

+👀 Demo videos (Bilibili)
+
+- [Havoc in Heaven (大闹天宫) clip](https://www.bilibili.com/video/BV1ja41117zb)

+

+

+

+### :book: Real-ESRGAN: Training Real-World Blind Super-Resolution with Pure Synthetic Data

+

+> [[Paper](https://arxiv.org/abs/2107.10833)] [Project page] [[YouTube Video](https://www.youtube.com/watch?v=fxHWoDSSvSc)] [[Bilibili video](https://www.bilibili.com/video/BV1H34y1m7sS/)] [[Poster](https://xinntao.github.io/projects/RealESRGAN_src/RealESRGAN_poster.pdf)] [[PPT](https://docs.google.com/presentation/d/1QtW6Iy8rm8rGLsJ0Ldti6kP-7Qyzy6XL/edit?usp=sharing&ouid=109799856763657548160&rtpof=true&sd=true)]

+> [Xintao Wang](https://xinntao.github.io/), Liangbin Xie, [Chao Dong](https://scholar.google.com.hk/citations?user=OSDCB0UAAAAJ), [Ying Shan](https://scholar.google.com/citations?user=4oXBp9UAAAAJ&hl=en)

+> Tencent ARC Lab; Shenzhen Institutes of Advanced Technology, Chinese Academy of Sciences

+

+

+

+

+---

+

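+We provide a pre-trained model (*RealESRGAN_x4plus.pth*) for 4x super-resolution.
+**Real-ESRGAN may still fail in some cases, because real-world degradations are complex.**
+In addition, it does not yet perform well on **human faces, text**, and similar content, but we will keep improving it.
+
+Real-ESRGAN will be supported over the long term, and I will keep maintaining and updating it in my spare time.
+
+Here are several new features planned for the future:
+
+- [ ] optimize for human faces
+- [ ] optimize for text
+- [x] optimize for anime images
+- [ ] support more scales
+- [ ] support controllable restoration strength
+
+If you have good ideas or requests, please raise them in an issue or a discussion.
+If you have images on which Real-ESRGAN performs poorly, you can also post them in an issue or a discussion. I will take note of them (but cannot promise a fix :stuck_out_tongue:). If necessary, I will open a dedicated page to record these unsolved images.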

+

+---

+

+### Portable executable files
+
+You can download portable executable files **for Intel/AMD/Nvidia GPUs**: [Windows](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-windows.zip) / [Linux](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-ubuntu.zip) / [macOS](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-macos.zip).
+
+"Portable" means you can run these executables directly (even from a USB drive), because they already include the required files and models. No CUDA or PyTorch environment is needed.
+
+You can run the following command (a Windows example; see the README.md of the corresponding version for more information):
+
+```bash
+./realesrgan-ncnn-vulkan.exe -i input.jpg -o output.png -n model_name
+```
+
+We provide four models:
+
+1. realesrgan-x4plus (default)
+2. realesrnet-x4plus
+3. realesrgan-x4plus-anime (optimized for anime illustrations, smaller model size)
+4. realesr-animevideov3 (for anime videos)
+
+You can use the `-n` argument for other models, for example, `./realesrgan-ncnn-vulkan.exe -i anime_input.jpg -o anime_output.png -n realesrgan-x4plus-anime`
+
+### Usage of the executable files
+
+1. Please refer to [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan#computer-usages) for more details.
+2. Note that the executable does not support all the functions of the Python script `inference_realesrgan.py` (such as the `outscale` option).

+

+```console

+Usage: realesrgan-ncnn-vulkan.exe -i infile -o outfile [options]...

+

+ -h show this help

+ -i input-path input image path (jpg/png/webp) or directory

+ -o output-path output image path (jpg/png/webp) or directory

+ -s scale upscale ratio (can be 2, 3, 4. default=4)

+ -t tile-size tile size (>=32/0=auto, default=0) can be 0,0,0 for multi-gpu

+ -m model-path folder path to the pre-trained models. default=models

+ -n model-name model name (default=realesr-animevideov3, can be realesr-animevideov3 | realesrgan-x4plus | realesrgan-x4plus-anime | realesrnet-x4plus)

+ -g gpu-id gpu device to use (default=auto) can be 0,1,2 for multi-gpu

+ -j load:proc:save thread count for load/proc/save (default=1:2:2) can be 1:2,2,2:2 for multi-gpu

+ -x enable tta mode

+ -f format output image format (jpg/png/webp, default=ext/png)

+ -v verbose output

+```

+

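+Because these executables split the image into several tiles, process them separately, and then stitch them together for output, the result may show some block inconsistency (and may differ slightly from the PyTorch output).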

+

+---

+

+## :wrench: Dependencies and Installation
+
+- Python >= 3.7 (we recommend [Anaconda](https://www.anaconda.com/download/#linux) or [Miniconda](https://docs.conda.io/en/latest/miniconda.html))
+- [PyTorch >= 1.7](https://pytorch.org/)
+
+#### Installation
+
+1. Clone the repo
+
+ ```bash
+ git clone https://github.com/xinntao/Real-ESRGAN.git
+ cd Real-ESRGAN
+ ```
+
+2. Install dependent packages
+
+ ```bash
+ # Install basicsr - https://github.com/xinntao/BasicSR
+ # We use BasicSR for both training and inference
+ pip install basicsr
+ # facexlib and gfpgan are for face enhancement
+ pip install facexlib
+ pip install gfpgan
+ pip install -r requirements.txt
+ python setup.py develop
+ ```

+ ```

+

+## :zap: Quick Start
+
+### General images
+
+Download our pre-trained model: [RealESRGAN_x4plus.pth](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth)
+
+```bash
+wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth -P weights
+```
+
+Inference!
+
+```bash
+python inference_realesrgan.py -n RealESRGAN_x4plus -i inputs --face_enhance
+```
+
+Results are in the `results` folder
+
+### Anime images
+
+Pre-trained model: [RealESRGAN_x4plus_anime_6B](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth)
+More details and comparisons with [waifu2x](https://github.com/nihui/waifu2x-ncnn-vulkan) are in [**anime_model.md**](docs/anime_model.md).
+
+```bash
+# download model
+wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth -P weights
+# inference
+python inference_realesrgan.py -n RealESRGAN_x4plus_anime_6B -i inputs
+```
+
+Results are in the `results` folder
+
+### Usage of the Python script
+
+1. Even with the X4 model, you can **output images at an arbitrary scale** by using the `outscale` argument. The program further resizes the model output.

+

+```console

+Usage: python inference_realesrgan.py -n RealESRGAN_x4plus -i infile -o outfile [options]...

+

+A common command: python inference_realesrgan.py -n RealESRGAN_x4plus -i infile --outscale 3.5 --face_enhance

+

+ -h show this help

+ -i --input Input image or folder. Default: inputs

+ -o --output Output folder. Default: results

+ -n --model_name Model name. Default: RealESRGAN_x4plus

+ -s, --outscale The final upsampling scale of the image. Default: 4

+ --suffix Suffix of the restored image. Default: out

+ -t, --tile Tile size, 0 for no tile during testing. Default: 0

+ --face_enhance Whether to use GFPGAN to enhance face. Default: False

+ --fp32 Use fp32 precision during inference. Default: fp16 (half precision).

+ --ext Image extension. Options: auto | jpg | png, auto means using the same extension as inputs. Default: auto

+```

+

+## :european_castle: Model Zoo
+
+Please see [docs/model_zoo.md](docs/model_zoo.md)
+
+## :computer: Training and fine-tuning on your own dataset
+
+A detailed guide can be found in [Training.md](docs/Training.md).
+
+## BibTeX

+

+ @Article{wang2021realesrgan,

+ title={Real-ESRGAN: Training Real-World Blind Super-Resolution with Pure Synthetic Data},

+ author={Xintao Wang and Liangbin Xie and Chao Dong and Ying Shan},

+ journal={arXiv:2107.10833},

+ year={2021}

+ }

+

+## :e-mail: Contact
+
+If you have any questions, please contact us at `xintao.wang@outlook.com` or `xintaowang@tencent.com`.
+
+## :hugs: Acknowledgement
+
+Thanks to all the contributors!
+
+- [AK391](https://github.com/AK391): Integrated Real-ESRGAN into [Huggingface Spaces](https://huggingface.co/spaces) (an online platform for machine learning apps) with [Gradio](https://github.com/gradio-app/gradio): [Gradio Web Demo](https://huggingface.co/spaces/akhaliq/Real-ESRGAN).
+- [Asiimoviet](https://github.com/Asiimoviet): Translated README.md into Chinese.
+- [2ji3150](https://github.com/2ji3150): Thanks for the detailed and valuable [feedback and suggestions](https://github.com/xinntao/Real-ESRGAN/issues/131).
+- [Jared-02](https://github.com/Jared-02): Translated Training.md into Chinese.

diff --git a/RealESRGANv030/VERSION b/RealESRGANv030/VERSION

new file mode 100644

index 0000000000000000000000000000000000000000..0d91a54c7d439e84e3dd17d3594f1b2b6737f430

--- /dev/null

+++ b/RealESRGANv030/VERSION

@@ -0,0 +1 @@

+0.3.0

diff --git a/RealESRGANv030/__init__.py b/RealESRGANv030/__init__.py

new file mode 100644

index 0000000000000000000000000000000000000000..e69de29bb2d1d6434b8b29ae775ad8c2e48c5391

diff --git a/RealESRGANv030/assets/realesrgan_logo.png b/RealESRGANv030/assets/realesrgan_logo.png

new file mode 100644

index 0000000000000000000000000000000000000000..88cd1ad6170794c2becb95006edffa0655d9372a

Binary files /dev/null and b/RealESRGANv030/assets/realesrgan_logo.png differ

diff --git a/RealESRGANv030/assets/realesrgan_logo_ai.png b/RealESRGANv030/assets/realesrgan_logo_ai.png

new file mode 100644

index 0000000000000000000000000000000000000000..b0f595cf2535de7e69393384d8d056300f1cdddc

Binary files /dev/null and b/RealESRGANv030/assets/realesrgan_logo_ai.png differ

diff --git a/RealESRGANv030/assets/realesrgan_logo_av.png b/RealESRGANv030/assets/realesrgan_logo_av.png

new file mode 100644

index 0000000000000000000000000000000000000000..501ac8e81292d9369122a69ec2dd56a3ae8beca6

Binary files /dev/null and b/RealESRGANv030/assets/realesrgan_logo_av.png differ

diff --git a/RealESRGANv030/assets/realesrgan_logo_gi.png b/RealESRGANv030/assets/realesrgan_logo_gi.png

new file mode 100644

index 0000000000000000000000000000000000000000..cdb0a1a74e0b54a1c684141324c6635acf2f60f8

Binary files /dev/null and b/RealESRGANv030/assets/realesrgan_logo_gi.png differ

diff --git a/RealESRGANv030/assets/realesrgan_logo_gv.png b/RealESRGANv030/assets/realesrgan_logo_gv.png

new file mode 100644

index 0000000000000000000000000000000000000000..21dfba05f3855f1d9740e6d2cbe2a8ac736f4508

Binary files /dev/null and b/RealESRGANv030/assets/realesrgan_logo_gv.png differ

diff --git a/RealESRGANv030/assets/teaser-text.png b/RealESRGANv030/assets/teaser-text.png

new file mode 100644

index 0000000000000000000000000000000000000000..af9b424e390bf454838d962f049db9bb5ef1064d

Binary files /dev/null and b/RealESRGANv030/assets/teaser-text.png differ

diff --git a/RealESRGANv030/assets/teaser.jpg b/RealESRGANv030/assets/teaser.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..dc9b7ccdf78e3c816b0b6ca567433b53253b2e1e

Binary files /dev/null and b/RealESRGANv030/assets/teaser.jpg differ

diff --git a/RealESRGANv030/cog.yaml b/RealESRGANv030/cog.yaml

new file mode 100644

index 0000000000000000000000000000000000000000..daa6983934b6e186ecd0cf1d4e038acdb9910cbc

--- /dev/null

+++ b/RealESRGANv030/cog.yaml

@@ -0,0 +1,22 @@

+# This file is used for constructing replicate env
+image: "r8.im/tencentarc/realesrgan"
+
+build:
+  gpu: true
+  python_version: "3.8"
+  system_packages:
+    - "libgl1-mesa-glx"
+    - "libglib2.0-0"
+  python_packages:
+    - "torch==1.7.1"
+    - "torchvision==0.8.2"
+    - "numpy==1.21.1"
+    - "lmdb==1.2.1"
+    - "opencv-python==4.5.3.56"
+    - "PyYAML==5.4.1"
+    - "tqdm==4.62.2"
+    - "yapf==0.31.0"
+    - "basicsr==1.4.2"
+    - "facexlib==0.2.5"
+
+predict: "cog_predict.py:Predictor"

diff --git a/RealESRGANv030/cog_predict.py b/RealESRGANv030/cog_predict.py

new file mode 100644

index 0000000000000000000000000000000000000000..f314611be45d716664670fd39f90a1cfc18606e1

--- /dev/null

+++ b/RealESRGANv030/cog_predict.py

@@ -0,0 +1,219 @@

+# flake8: noqa

+# This file is used for deploying replicate models

+# running: cog predict -i img=@inputs/00017_gray.png -i version='General - v3' -i scale=2 -i face_enhance=True -i tile=0

+# push: cog push r8.im/xinntao/realesrgan

+

+import os

+

+os.system("pip install gfpgan")

+os.system("python setup.py develop")

+

+import cv2

+import shutil

+import tempfile

+import torch

+from basicsr.archs.rrdbnet_arch import RRDBNet

+from basicsr.archs.srvgg_arch import SRVGGNetCompact

+

+from realesrgan.utils import RealESRGANer

+

+try:
+    from cog import BasePredictor, Input, Path
+    from gfpgan import GFPGANer
+except Exception:
+    print("please install cog and gfpgan package")

+

+

+class Predictor(BasePredictor):
+    def setup(self):
+        os.makedirs("output", exist_ok=True)
+        # download weights
+        if not os.path.exists("weights/realesr-general-x4v3.pth"):
+            os.system(
+                "wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesr-general-x4v3.pth -P ./weights"
+            )
+        if not os.path.exists("weights/GFPGANv1.4.pth"):
+            os.system(
+                "wget https://github.com/TencentARC/GFPGAN/releases/download/v1.3.0/GFPGANv1.4.pth -P ./weights"
+            )
+        if not os.path.exists("weights/RealESRGAN_x4plus.pth"):
+            os.system(
+                "wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth -P ./weights"
+            )
+        if not os.path.exists("weights/RealESRGAN_x4plus_anime_6B.pth"):
+            os.system(
+                "wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth -P ./weights"
+            )
+        if not os.path.exists("weights/realesr-animevideov3.pth"):
+            os.system(
+                "wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesr-animevideov3.pth -P ./weights"
+            )

+

+    def choose_model(self, scale, version, tile=0):
+        # Half precision is only used when a CUDA device is available
+        half = torch.cuda.is_available()
+        if version == "General - RealESRGANplus":
+            model = RRDBNet(
+                num_in_ch=3,
+                num_out_ch=3,
+                num_feat=64,
+                num_block=23,
+                num_grow_ch=32,
+                scale=4,
+            )
+            model_path = "weights/RealESRGAN_x4plus.pth"
+            self.upsampler = RealESRGANer(
+                scale=4,
+                model_path=model_path,
+                model=model,
+                tile=tile,
+                tile_pad=10,
+                pre_pad=0,
+                half=half,
+            )
+        elif version == "General - v3":
+            model = SRVGGNetCompact(
+                num_in_ch=3,
+                num_out_ch=3,
+                num_feat=64,
+                num_conv=32,
+                upscale=4,
+                act_type="prelu",
+            )
+            model_path = "weights/realesr-general-x4v3.pth"
+            self.upsampler = RealESRGANer(
+                scale=4,
+                model_path=model_path,
+                model=model,
+                tile=tile,
+                tile_pad=10,
+                pre_pad=0,
+                half=half,
+            )
+        elif version == "Anime - anime6B":
+            model = RRDBNet(
+                num_in_ch=3,
+                num_out_ch=3,
+                num_feat=64,
+                num_block=6,
+                num_grow_ch=32,
+                scale=4,
+            )
+            model_path = "weights/RealESRGAN_x4plus_anime_6B.pth"
+            self.upsampler = RealESRGANer(
+                scale=4,
+                model_path=model_path,
+                model=model,
+                tile=tile,
+                tile_pad=10,
+                pre_pad=0,
+                half=half,
+            )
+        elif version == "AnimeVideo - v3":
+            model = SRVGGNetCompact(
+                num_in_ch=3,
+                num_out_ch=3,
+                num_feat=64,
+                num_conv=16,
+                upscale=4,
+                act_type="prelu",
+            )
+            model_path = "weights/realesr-animevideov3.pth"
+            self.upsampler = RealESRGANer(
+                scale=4,
+                model_path=model_path,
+                model=model,
+                tile=tile,
+                tile_pad=10,
+                pre_pad=0,
+                half=half,
+            )
+
+        self.face_enhancer = GFPGANer(
+            model_path="weights/GFPGANv1.4.pth",
+            upscale=scale,
+            arch="clean",
+            channel_multiplier=2,
+            bg_upsampler=self.upsampler,
+        )

+

+    def predict(
+        self,
+        img: Path = Input(description="Input"),
+        version: str = Input(
+            description="RealESRGAN version. Please see [Readme] below for more descriptions",
+            choices=[
+                "General - RealESRGANplus",
+                "General - v3",
+                "Anime - anime6B",
+                "AnimeVideo - v3",
+            ],
+            default="General - v3",
+        ),
+        scale: float = Input(description="Rescaling factor", default=2),
+        face_enhance: bool = Input(
+            description="Enhance faces with GFPGAN. Note that it does not work for anime images/videos",
+            default=False,
+        ),
+        tile: int = Input(
+            description="Tile size. Default is 0, that is no tile. When encountering the out-of-GPU-memory issue, please specify it, e.g., 400 or 200",
+            default=0,
+        ),
+    ) -> Path:
+        # Check for None first so the comparison never sees a None value
+        if tile is None or tile <= 100:
+            tile = 0
+        print(
+            f"img: {img}. version: {version}. scale: {scale}. face_enhance: {face_enhance}. tile: {tile}."
+        )
+        try:
+            # Strip the leading dot so f"out.{extension}" does not become "out..png"
+            extension = os.path.splitext(os.path.basename(str(img)))[1].lstrip(".")
+            img = cv2.imread(str(img), cv2.IMREAD_UNCHANGED)
+            if len(img.shape) == 3 and img.shape[2] == 4:
+                img_mode = "RGBA"
+            elif len(img.shape) == 2:
+                img_mode = None
+                img = cv2.cvtColor(img, cv2.COLOR_GRAY2BGR)
+            else:
+                img_mode = None
+
+            h, w = img.shape[0:2]
+            if h < 300:
+                img = cv2.resize(img, (w * 2, h * 2), interpolation=cv2.INTER_LANCZOS4)
+
+            self.choose_model(scale, version, tile)
+
+            try:
+                if face_enhance:
+                    _, _, output = self.face_enhancer.enhance(
+                        img, has_aligned=False, only_center_face=False, paste_back=True
+                    )
+                else:
+                    output, _ = self.upsampler.enhance(img, outscale=scale)
+            except RuntimeError as error:
+                print("Error", error)
+                print(
+                    'If you encounter CUDA out of memory, try to set "tile" to a smaller size, e.g., 400.'
+                )
+                # Re-raise: `output` is undefined here, so continuing would only mask the real error
+                raise
+
+            if img_mode == "RGBA":  # RGBA images should be saved in png format
+                extension = "png"
+            # save_path = f'output/out.{extension}'
+            # cv2.imwrite(save_path, output)
+            out_path = Path(tempfile.mkdtemp()) / f"out.{extension}"
+            cv2.imwrite(str(out_path), output)
+        except Exception as error:
+            print("global exception: ", error)
+            # Re-raise so the caller sees the failure instead of a NameError on `out_path`
+            raise
+        finally:
+            clean_folder("output")
+        return out_path

+

+

+def clean_folder(folder):
+    for filename in os.listdir(folder):
+        file_path = os.path.join(folder, filename)
+        try:
+            if os.path.isfile(file_path) or os.path.islink(file_path):
+                os.unlink(file_path)
+            elif os.path.isdir(file_path):
+                shutil.rmtree(file_path)
+        except Exception as e:
+            print(f"Failed to delete {file_path}. Reason: {e}")

diff --git a/RealESRGANv030/docs/CONTRIBUTING.md b/RealESRGANv030/docs/CONTRIBUTING.md

new file mode 100644

index 0000000000000000000000000000000000000000..75990c2ce7545b72fb6ebad8295ca4895f437205

--- /dev/null

+++ b/RealESRGANv030/docs/CONTRIBUTING.md

@@ -0,0 +1,44 @@

+# Contributing to Real-ESRGAN

+

+:art: Real-ESRGAN needs your contributions. Any contributions are welcome, such as new features/models/typo fixes/suggestions/maintenance, *etc*. See [CONTRIBUTING.md](docs/CONTRIBUTING.md). All contributors are listed [here](README.md#hugs-acknowledgement).

+

+We like open source and want to develop practical algorithms for general image restoration. However, individual strength is limited, so any kind of contribution is welcome, such as:

+

+- New features

+- New models (your fine-tuned models)

+- Bug fixes

+- Typo fixes

+- Suggestions

+- Maintenance

+- Documents

+- *etc*

+

+## Workflow

+

+1. Fork and pull the latest Real-ESRGAN repository

+1. Check out a new branch (do not use the master branch for PRs)

+1. Commit your changes

+1. Create a PR

+

+**Note**:

+

+1. Please check the code style and linting

+    1. The style configuration is specified in [setup.cfg](setup.cfg)

+    1. If you use VSCode, the settings are configured in [.vscode/settings.json](.vscode/settings.json)

+1. We strongly recommend using a `pre-commit` hook. It checks your code style and linting before each commit.

+    1. In the root of the project folder, run `pre-commit install`

+    1. The pre-commit configuration is listed in [.pre-commit-config.yaml](.pre-commit-config.yaml)

+1. It is better to [open a discussion](https://github.com/xinntao/Real-ESRGAN/discussions) before making large changes.

+    1. Discussion is welcome :sunglasses:. I will try my best to join.

+

+## TODO List

+

+:zero: The most straightforward way of improving model performance is to fine-tune on some specific datasets.

+

+Here are some TODOs:

+

+- [ ] optimize for human faces

+- [ ] optimize for text

+- [ ] support controllable restoration strength

+

+:one: There are also [several issues](https://github.com/xinntao/Real-ESRGAN/issues) that need help. If you can help, please let me know :smile:

diff --git a/RealESRGANv030/docs/FAQ.md b/RealESRGANv030/docs/FAQ.md

new file mode 100644

index 0000000000000000000000000000000000000000..843f4dd847487066a1c7c105c7292e2de0bd5f1a

--- /dev/null

+++ b/RealESRGANv030/docs/FAQ.md

@@ -0,0 +1,10 @@

+# FAQ

+

+1. **Q: How do I select a model?**

+A: Please refer to [docs/model_zoo.md](docs/model_zoo.md).

+

+1. **Q: Can `face_enhance` be used for anime images/animation videos?**

+A: No, it can only be used for real faces. We recommend leaving this option off for anime images and animation videos, which also saves GPU memory.

+

+1. **Q: How do I fix the error `"slow_conv2d_cpu" not implemented for 'Half'`?**

+A: To reduce GPU memory consumption and speed up inference, Real-ESRGAN uses half precision (fp16) during inference by default. However, some half-precision operators are not implemented in CPU mode, so you need to add the **`--fp32` option** to your commands. For example, `python inference_realesrgan.py -n RealESRGAN_x4plus.pth -i inputs --fp32`.
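
The precision choice described in this answer can be sketched as a small guard in your own wrapper script. This is only an illustration: the `--device` option and the `use_half_precision` helper are hypothetical names introduced here, and the automatic CPU fallback is an assumption, not something Real-ESRGAN is stated to do.

```python
import argparse


def build_parser():
    """Build a tiny parser that mimics a precision switch."""
    parser = argparse.ArgumentParser(description="Illustrative precision switch")
    # Same idea as the --fp32 option above: fp16 unless the flag is given.
    parser.add_argument("--fp32", action="store_true", help="use full precision")
    # Hypothetical option, present only in this sketch.
    parser.add_argument("--device", default="cuda", choices=["cuda", "cpu"])
    return parser


def use_half_precision(fp32, device):
    """Return True only when fp16 is wanted and the device is not the CPU."""
    return (not fp32) and device != "cpu"


if __name__ == "__main__":
    args = build_parser().parse_args()
    print("half precision:", use_half_precision(args.fp32, args.device))
```

With a guard like this, a CPU run falls back to fp32 even when the flag is forgotten, which avoids the error instead of reporting it.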

diff --git a/RealESRGANv030/docs/Training.md b/RealESRGANv030/docs/Training.md

new file mode 100644

index 0000000000000000000000000000000000000000..77da5ea5763f7a6ab291ebc28afb13be37df3f50

--- /dev/null

+++ b/RealESRGANv030/docs/Training.md

@@ -0,0 +1,271 @@

+# :computer: How to Train/Finetune Real-ESRGAN

+

+- [Train Real-ESRGAN](#train-real-esrgan)

+  - [Overview](#overview)

+  - [Dataset Preparation](#dataset-preparation)

+  - [Train Real-ESRNet](#Train-Real-ESRNet)

+  - [Train Real-ESRGAN](#Train-Real-ESRGAN)

+- [Finetune Real-ESRGAN on your own dataset](#Finetune-Real-ESRGAN-on-your-own-dataset)

+  - [Generate degraded images on the fly](#Generate-degraded-images-on-the-fly)

+  - [Use paired training data](#use-your-own-paired-data)

+

+[English](Training.md) **|** [简体中文](Training_CN.md)

+

+## Train Real-ESRGAN

+

+### Overview

+

+Training is divided into two stages. Both stages share the same data synthesis process and training pipeline and differ only in their loss functions. Specifically:

+

+1. We first train Real-ESRNet with L1 loss, starting from the pre-trained ESRGAN model.

+1. We then use the trained Real-ESRNet model to initialize the generator, and train Real-ESRGAN with a combination of L1 loss, perceptual loss and GAN loss.

+

+### Dataset Preparation

+

+We use DF2K (DIV2K and Flickr2K) + OST datasets for our training. Only HR images are required.

+You can download them from:

+

+1. DIV2K: http://data.vision.ee.ethz.ch/cvl/DIV2K/DIV2K_train_HR.zip

+2. Flickr2K: https://cv.snu.ac.kr/research/EDSR/Flickr2K.tar

+3. OST: https://openmmlab.oss-cn-hangzhou.aliyuncs.com/datasets/OST_dataset.zip

+

+Here are the steps for data preparation.

+

+#### Step 1: [Optional] Generate multi-scale images

+

+For the DF2K dataset, we use a multi-scale strategy, *i.e.*, we downsample HR images to obtain several Ground-Truth images with different scales.

+You can use the [scripts/generate_multiscale_DF2K.py](scripts/generate_multiscale_DF2K.py) script to generate multi-scale images.

+Note that you can skip this step if you just want a quick try.

+

+```bash

+python scripts/generate_multiscale_DF2K.py --input datasets/DF2K/DF2K_HR --output datasets/DF2K/DF2K_multiscale

+```
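
As a rough illustration of the multi-scale strategy, the sketch below computes the sizes that one HR image would be downsampled to. The function name `multiscale_sizes` and the scale factors are assumptions chosen for illustration; they are not taken from `generate_multiscale_DF2K.py`, which may use different scales and resampling.

```python
def multiscale_sizes(width, height, scales=(0.75, 0.5)):
    """Return the (width, height) of each downsampled copy of one HR image.

    The default scale factors are illustrative assumptions only.
    """
    sizes = []
    for scale in scales:
        # Truncate to whole pixels; a real pipeline would then resize the
        # image to this size with a high-quality resampling filter.
        sizes.append((int(width * scale), int(height * scale)))
    return sizes


if __name__ == "__main__":
    for size in multiscale_sizes(2040, 1356):
        print(size)
```

Each returned size corresponds to one additional Ground-Truth image per HR image, so the dataset grows with the number of scales.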

+

+#### Step 2: [Optional] Crop to sub-images

+

+We then crop DF2K images into sub-images for faster IO and processing.

+You can skip this step if your IO is fast enough or your disk space is limited.

+

+You can use the [scripts/extract_subimages.py](scripts/extract_subimages.py) script. Here is an example:

+

+```bash

+python scripts/extract_subimages.py --input datasets/DF2K/DF2K_multiscale --output datasets/DF2K/DF2K_multiscale_sub --crop_size 400 --step 200

+```
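
To see what `--crop_size 400 --step 200` implies, the sketch below lists where sliding-window crops would start along one image dimension. It is a simplified illustration, not the logic of `extract_subimages.py`: the helper name `crop_offsets` is hypothetical, and adding one final window flush with the edge is an assumption; the real script may handle leftover pixels differently.

```python
def crop_offsets(length, crop_size=400, step=200):
    """Return the start offsets of sliding-window crops along one dimension."""
    if length < crop_size:
        # The image is too small to hold even one full crop.
        return []
    offsets = list(range(0, length - crop_size + 1, step))
    # Assumption for this sketch: add a last window aligned with the far
    # edge so that no pixels are left uncovered.
    if offsets[-1] != length - crop_size:
        offsets.append(length - crop_size)
    return offsets


if __name__ == "__main__":
    print(crop_offsets(1000))
    print(crop_offsets(1100))
```

Because the step is half the crop size, neighbouring sub-images overlap by 200 pixels, so the cropped dataset contains many more files than the original one.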

+

+#### Step 3: Prepare a txt for meta information

+

+You need to prepare a txt file containing the image paths. The following are some example lines from `meta_info_DF2Kmultiscale+OST_sub.txt` (because different users may partition sub-images differently, this file will likely not suit your setup, and you need to prepare your own txt file):

+

+```txt

+DF2K_HR_sub/000001_s001.png

+DF2K_HR_sub/000001_s002.png

+DF2K_HR_sub/000001_s003.png

+...

+```

+

+You can use the [scripts/generate_meta_info.py](scripts/generate_meta_info.py) script to generate the txt file.
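
For readers who want to see what such a file contains, here is a minimal sketch that writes one relative image path per line, in the same shape as the example above. It is not the code of `generate_meta_info.py`: the function name `write_meta_info`, its parameters, and the list of accepted suffixes are assumptions made for illustration.

```python
from pathlib import Path


def write_meta_info(root, meta_path, suffixes=(".png", ".jpg", ".jpeg")):
    """Write the paths of all images under `root`, relative to `root`.

    Returns the sorted list of relative paths that were written.
    """
    root = Path(root)
    rel_paths = sorted(
        path.relative_to(root).as_posix()
        for path in root.rglob("*")
        if path.is_file() and path.suffix.lower() in suffixes
    )
    # One path per line, matching the txt layout shown above.
    Path(meta_path).write_text(
        "".join(f"{rel}\n" for rel in rel_paths), encoding="utf-8"
    )
    return rel_paths


if __name__ == "__main__":
    # Hypothetical locations; adjust them to your own dataset layout.
    written = write_meta_info("datasets/DF2K", "meta_info_example.txt")
    print(f"wrote {len(written)} paths")
```

Sorting the paths keeps the file stable between runs, which makes it easier to compare meta files after re-cropping.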