hexsha

stringlengths 40

40

| size

int64 6

14.9M

| ext

stringclasses 1

value | lang

stringclasses 1

value | max_stars_repo_path

stringlengths 6

260

| max_stars_repo_name

stringlengths 6

119

| max_stars_repo_head_hexsha

stringlengths 40

41

| max_stars_repo_licenses

list | max_stars_count

int64 1

191k

⌀ | max_stars_repo_stars_event_min_datetime

stringlengths 24

24

⌀ | max_stars_repo_stars_event_max_datetime

stringlengths 24

24

⌀ | max_issues_repo_path

stringlengths 6

260

| max_issues_repo_name

stringlengths 6

119

| max_issues_repo_head_hexsha

stringlengths 40

41

| max_issues_repo_licenses

list | max_issues_count

int64 1

67k

⌀ | max_issues_repo_issues_event_min_datetime

stringlengths 24

24

⌀ | max_issues_repo_issues_event_max_datetime

stringlengths 24

24

⌀ | max_forks_repo_path

stringlengths 6

260

| max_forks_repo_name

stringlengths 6

119

| max_forks_repo_head_hexsha

stringlengths 40

41

| max_forks_repo_licenses

list | max_forks_count

int64 1

105k

⌀ | max_forks_repo_forks_event_min_datetime

stringlengths 24

24

⌀ | max_forks_repo_forks_event_max_datetime

stringlengths 24

24

⌀ | avg_line_length

float64 2

1.04M

| max_line_length

int64 2

11.2M

| alphanum_fraction

float64 0

1

| cells

list | cell_types

list | cell_type_groups

list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

4a9d545de48b83bd7e65462623293329b94d66f9

| 13,260 |

ipynb

|

Jupyter Notebook

|

Introduction to ML.ipynb

|

anikannal/ML_Projects

|

ae58eb8928a1aff7f205ab663adaeb15376d0183

|

[

"MIT"

] | null | null | null |

Introduction to ML.ipynb

|

anikannal/ML_Projects

|

ae58eb8928a1aff7f205ab663adaeb15376d0183

|

[

"MIT"

] | null | null | null |

Introduction to ML.ipynb

|

anikannal/ML_Projects

|

ae58eb8928a1aff7f205ab663adaeb15376d0183

|

[

"MIT"

] | null | null | null | 33.15 | 387 | 0.594495 |

[

[

[

"# Introduction to Machine Learning",

"_____no_output_____"

],

[

"## What is Machine Learning?\n",

"_____no_output_____"

],

[

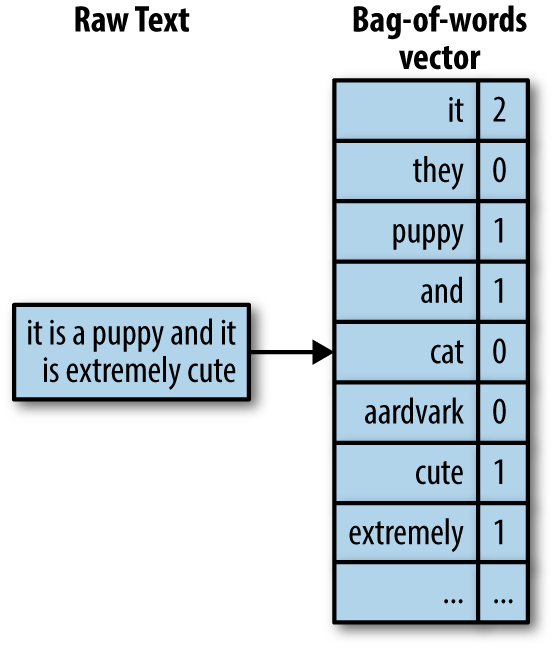

"Machine Learning is the field of study that gives computers the capability to learn without being explicitly programmed.\n\nI like to think of it as a comparison rather than a definition.\n\n- If you **can** give clear instructions on how to do the task - traditional computing\n- If you **cannot** give clear instructions but can give lots of examples - machine learning\n\nLet's look at a few illustrations - \n\n1. Complex mathematical calculations - can give clear instructions: traditional computing\n2. Processing a financial transaction - can give clear instructions: traditional computing\n3. Differentiating between pictures of cats and dogs - can't give instructions but can give examples: machine learning\n4. Playing chess - can give clear instructions for how to play but cannot give instructions on how to win! We can give a lot of past games as examples though: machine learning\n5. Customer segmentation - don't know what the segments/groupings are, so giving clear instructions is out of the question. Can give a large number of examples with customer demographic data and purchase history: machine learning\n\nSo we can say that traditional programming takes data and a program to give us output, while machine learning takes data and output (examples) to give us a program!",

"_____no_output_____"

],
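The contrast above can be sketched in a few lines. This is a toy illustration rather than part of the original course material: the function names, the 180 cm cut-off, and the example heights are all made up for the purpose of the sketch.

```python
# Traditional programming: a human writes the rule by hand.
def is_tall_rule(height_cm):
    return height_cm >= 180  # cut-off chosen by the programmer (illustrative)


# Machine learning (toy version): the rule is derived from labelled examples.
def learn_threshold(examples):
    """Return the cut-off that misclassifies the fewest (value, label) pairs."""
    candidates = sorted(value for value, _ in examples)
    best_threshold, best_errors = None, len(examples) + 1
    for threshold in candidates:
        errors = sum((value >= threshold) != label for value, label in examples)
        if errors < best_errors:
            best_threshold, best_errors = threshold, errors
    return best_threshold


# Data + expected output (examples) go in, a "program" (the threshold) comes out.
examples = [(150, False), (160, False), (170, False), (182, True), (190, True)]
threshold = learn_threshold(examples)


def is_tall_learned(height_cm):
    return height_cm >= threshold
```

Real algorithms search far richer families of rules than a single threshold, but the shape is the same: examples in, decision rule out.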

[

"<img src='https://drive.google.com/uc?id=1SAu0GNpDqDNRNxEtXRqBX-t20BuB0HcR' align = 'left'/>",

"_____no_output_____"

],

[

"## Data is the New Oil!\n\nData is absolutely **critical** to creating a viable Machine Learning model. Here's a simple representation of how data helps us create a model and how a model helps us make predictions.",

"_____no_output_____"

],

[

"<img src=\"https://drive.google.com/uc?id=1rM6SBXOMeAcFXu1OLtvk4HOWsXdY_xGU\" width=500 height=300 align=\"left\"/>",

"_____no_output_____"

],

[

"Here's a short explainer video if the pictures didn't really do it for you...",

"_____no_output_____"

]

],

[

[

"## Run this cell (shift+enter) to see the video\n\nfrom IPython.display import IFrame\nIFrame(\"https://www.youtube.com/embed/f_uwKZIAeM0\", width=\"600\", height=\"400\")",

"_____no_output_____"

]

],

[

[

"## What are the Different Types of Machine Learning?",

"_____no_output_____"

],

[

"<img src=\"https://drive.google.com/uc?id=1ESgroj56fbOoE0_xiMhsaibVa8D-_80H\" align=\"left\" width=\"1000\" height=\"800\"/>",

"_____no_output_____"

],

[

"---\n## Course Overview",

"_____no_output_____"

],

[

"This course is designed for the 'do-ers'. Our entire focus during this course will be to apply and experiment. Conceptual understanding is very important and we will build a strong conceptual foundation, but it will always be in the context of a project rather than just a theoretical understanding.\n\nWe will be exploring a variety of Machine Learning algorithms. For each we will use an appropriate real world dataset, work on a real problem statement, and execute a project that can become the foundation of your ML skills portfolio and your resume.\n\nYou now have access to a full scale ML lab-on-cloud. This is a very powerful tool, IF you use it. Make the most of what you have - explore, experiment, break a few things. You learn the most from failure!",

"_____no_output_____"

],

[

"### What Will We Do?\n\n- We will understand the life cycle of a typical ML project and exercise it through real projects\n- We will be exploring a slew of ML algorithms (supervised and un-supervised learning)\n- For each of these algorithms we will understand how it works and apply it in a project\n- We will extensively work on real world datasets and strive to be hands-on",

"_____no_output_____"

],

[

"### What Will We NOT Do?\n\n- We will not cover every ML algorithm under the sun\n- We will not cover reinforcement learning and deep learning in this course\n- We will not go deep into the mathematical, probabilistic, and statistical foundations of ML",

"_____no_output_____"

],

[

"## Course Curriculum\n\n**Key Concepts Covered**\n1. Lifecycle of a typical ML project\n2. Data Pre-processing\n - Data acquisition and loading\n - Data integration\n - Exploratory data analysis\n - Data cleaning\n - Feature selection\n - Encoding\n - Normalization\n3. Picking the Right Algorithm\n4. Evaluating Your Model\n - Train - Test Split\n - Evaluation Metrics\n - Under and Over Fitting\n5. Other key concepts \n - Imputation\n - Kernel Functions\n - Bagging\n - Hyperparameters\n - Boosting\n\n**Algorithms Covered**\n1. Linear Regression\n2. Logistic Regression\n3. K Nearest Neighbors\n4. Decision Trees\n5. Random Forest\n6. Naive Bayes\n7. Support Vector Machine\n8. K Means Clustering\n9. Hierarchical Clustering\n\n**Datasets Used**\n1. Healthcare - patient data on drug efficacy\n2. Telecom - customer profiles\n3. Retail - customer profiles\n4. Automobile - automobile catalogue make, model, engine specs, etc.\n5. Environment - CO2 emissions data\n6. Health Informatics - cancer cell biopsy observations",

"_____no_output_____"

],

[

"---\n## Life Cycle of a Typical ML Project",

"_____no_output_____"

],

[

"A typical ML project goes through 5 major steps - \n\n1. Define Project Objectives\n2. Acquire, Explore and Prepare Data\n3. Model Data\n4. Interpret and Communicate the Insights\n5. Implement, Document, and Maintain\n\nWe will work through steps 1 through 4 during this course. We will **not** be deploying, documenting or maintaining our models.\n\n<img src=\"https://drive.google.com/uc?id=1hQrE2Q7D_j4T8y5aM8pW-ejuS4VUP7Co\" align=\"left\"/>\n\n\n\n\nLet's look at each of the steps in further detail - \n\n1. **Define Project Objectives** - this is a very important step that most of us tend to forget. Without a clear understanding of why you are doing a project, the project will fail. What the business or client expects as the outcome of the project has to be discussed and understood before you start off.\n\n\n2. **Acquire, Explore, and Prepare Data** - you will spend a lot of your time on this step when you do an ML project. This is a critical step - exploring the data will help you decide which models you might want to employ. Based on this preliminary hypothesis, you will prepare the data for the next step (Model Data). Here are a few things you will end up doing within this step - \n - Data acquisition and loading\n - Data integration\n - Exploratory data analysis\n - Data cleaning\n - Feature selection\n - Encoding\n - Normalization\n\n\n3. **Model Data** - this is the heart of our project. But most students of ML get stuck on fancy algorithm names. There's a lot more to it than just claiming that you have done a project using SVM or Logistic Regression. You have to be able to articulate how you picked a model, how you trained it, and why you concluded that the output looks good.\n - Select the algorithm(s) to use\n - Train the model(s)\n - Evaluate performance\n - Tweak parameters and re-evaluate\n\n\n4. **Interpret and Communicate the Insights** - just modeling the data, showing a few visualizations, and reducing the error is not enough. 
As an ML engineer you have to be able to talk to your client and help them interpret the outcome of all your hard work. Be ready to answer a few questions - \n - What interesting patterns did you notice in the data?\n - Did you notice any intrinsic dependencies, correlation, or causation in the features?\n - Why did you pick the algorithm that you did?\n - How did you split the train-test data? Why?\n - Is this error rate acceptable? Why?\n - How will the outcome of this project help the client?\n\n\n5. **Implement, Document, and Maintain** - at a real client, you will have to deploy your model in production, document it extensively, and also maintain it going forward. We will not go into this step, given that we are not going to be deploying our models in production.\n",

"_____no_output_____"

],
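Two of the steps named above, the train-test split and normalization, can be sketched with the standard library alone. This is a minimal illustration under stated assumptions, not the course's own code: the helper names, the 80/20 split, and the fixed seed are all hypothetical choices, and a real project would more likely use a library such as scikit-learn.

```python
import random


def train_test_split(rows, test_fraction=0.2, seed=42):
    """Shuffle a copy of `rows` and split it into (train, test)."""
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    n_test = int(round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def min_max_normalize(values, lo, hi):
    """Scale values using bounds that were computed on the training set only."""
    span = (hi - lo) or 1.0  # avoid dividing by zero for constant features
    return [(v - lo) / span for v in values]


data = list(range(10))
train, test = train_test_split(data)

# Fit the scaling on the training data, then apply the same scaling to the test data.
lo, hi = min(train), max(train)
train_scaled = min_max_normalize(train, lo, hi)
test_scaled = min_max_normalize(test, lo, hi)
```

Computing `lo` and `hi` from the training rows only keeps information about the test set out of the model, which is one possible answer to "How did you split the train-test data? Why?". Note that scaled test values may fall slightly outside the 0 to 1 range as a result.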

[

"## Kick Start!\nHere's a 12-minute crash course on ML to kick-start our journey!",

"_____no_output_____"

]

],

[

[

"## Run this cell (shift+enter) to see the video\n\nfrom IPython.display import IFrame\nIFrame(\"https://www.youtube.com/embed/z-EtmaFJieY\", width=\"814\", height=\"509\")",

"_____no_output_____"

]

],

[

[

"Here's a great article that summarizes Machine Learning really well.\n\nhttps://machinelearningmastery.com/basic-concepts-in-machine-learning/",

"_____no_output_____"

]

]

] |

[

"markdown",

"code",

"markdown",

"code",

"markdown"

] |

[

[

"markdown",

"markdown",

"markdown",

"markdown",

"markdown",

"markdown",

"markdown"

],

[

"code"

],

[

"markdown",

"markdown",

"markdown",

"markdown",

"markdown",

"markdown",

"markdown",

"markdown",

"markdown",

"markdown"

],

[

"code"

],

[

"markdown"

]

] |

4a9d6f67fbdedc6eafa2d09f9009d9a0098a213d

| 16,872 |

ipynb

|

Jupyter Notebook

|

3_building_xrrays_with_ard_and_brute_force/1_what_does_c2ARD_look_like_answer_non_existant.ipynb

|

tonybutzer/c-experiments

|

750393ed5e24c492162db392da10d4958ef632ee

|

[

"MIT"

] | null | null | null |

3_building_xrrays_with_ard_and_brute_force/1_what_does_c2ARD_look_like_answer_non_existant.ipynb

|

tonybutzer/c-experiments

|

750393ed5e24c492162db392da10d4958ef632ee

|

[

"MIT"

] | null | null | null |

3_building_xrrays_with_ard_and_brute_force/1_what_does_c2ARD_look_like_answer_non_existant.ipynb

|

tonybutzer/c-experiments

|

750393ed5e24c492162db392da10d4958ef632ee

|

[

"MIT"

] | null | null | null | 19.943262 | 181 | 0.495792 |

[

[

[

"from cubelib.stac_eco import Stac_eco\nfrom cubelib.fm_map import Fmap",

"_____no_output_____"

],

[

"import pandas as pd\n# pd.set_option('display.max_colwidth', None)\n# pd.set_option('display.max_rows', None)\n# pd.set_option('display.max_columns', None)\n# pd.set_option('display.width', 2000)",

"_____no_output_____"

],

[

"#! cp ../2_Gridding_For_Scale/*.geojson .\n! ls *.geojson",

"_____no_output_____"

],

[

"geojson_file = 'one_tile.geojson'\nse = Stac_eco(geojson_file)",

"_____no_output_____"

],

[

"se",

"_____no_output_____"

],

[

"se.set_collection('landsat-c2ard-sr')",

"_____no_output_____"

],

[

"se",

"_____no_output_____"

],

[

"fm = Fmap()\nfm.sat_geojson(geojson_file)",

"_____no_output_____"

],

[

"dates=\"2020-04-01/2020-10-31\"",

"_____no_output_____"

],

[

"search_object_eco = se.search(dates, cloud_cover=100)",

"_____no_output_____"

],

[

"number_of_matched_scenes = search_object_eco.matched()",

"_____no_output_____"

],

[

"print(f\"I found {number_of_matched_scenes} Scenes yay!\")",

"_____no_output_____"

],

[

"so = search_object_eco",

"_____no_output_____"

],

[

"gdf1 = se.items_gdf(so)",

"_____no_output_____"

],

[

"#gdf1",

"_____no_output_____"

],

[

"gdf1.T",

"_____no_output_____"

],

[

"import pandas as pd\npd.set_option('display.max_colwidth', None)\ngdf1['stac_extensions']",

"_____no_output_____"

],

[

"se.plot_polygons(so)",

"_____no_output_____"

],

[

"gdf1['properties.landsat:grid_vertical']",

"_____no_output_____"

],

[

"gdf1['properties.landsat:grid_horizontal']",

"_____no_output_____"

],

[

"gdf2 = gdf1[gdf1['properties.landsat:grid_horizontal']=='29']",

"_____no_output_____"

],

[

"gdf2.T",

"_____no_output_____"

],

[

"gdf3 = gdf2[gdf2['properties.landsat:grid_vertical']=='03']",

"_____no_output_____"

],

[

"gdf3[['properties.landsat:grid_horizontal', 'properties.landsat:grid_vertical']]",

"_____no_output_____"

],

[

"len(gdf3[['properties.landsat:grid_horizontal', 'properties.landsat:grid_vertical']])",

"_____no_output_____"

],

[

"dir(se)",

"_____no_output_____"

],

[

"se.df_assets(so)",

"_____no_output_____"

],

[

"import boto3\nfrom rasterio.session import AWSSession\naws_session = AWSSession(boto3.Session(), requester_pays=True)",

"_____no_output_____"

],

[

"import rasterio as rio\nimport xarray as xr\ndef create_dataset(row, bands = ['Swirs', 'Green'], chunks = {'band': 1, 'x':2048, 'y':2048}):\n    datasets = []\n    with rio.Env(aws_session):\n        for band in bands:\n            print(row[band]['href'])\n            url = row[band]['href']\n            #url = url.replace('usgs-landsat', 'usgs-landsat-ard')\n            #da = xr.open_rasterio(url, chunks = chunks)\n            da = xr.open_rasterio(url)\n            daSub=da\n#            daSub = da.sel(x=slice(ll_corner[0], ur_corner[0]), y=slice(ur_corner[1], ll_corner[1]))\n            daSub = daSub.squeeze().drop(labels='band')\n            DS = daSub.to_dataset(name = band)\n            datasets.append(DS)\n    DS = xr.merge(datasets)\n    return DS",

"_____no_output_____"

],

[

"def asset_gdf(my_gdf,bands):\n    #print(my_gdf.keys)\n    i_dict_array = []\n    for i,item in my_gdf.iterrows():\n        i_dict ={}\n        print(item.id)\n        i_dict['id'] = item.id\n        for band in bands:\n            href = f'assets.{band}.href'\n            #print(item[href])\n            i_dict[band] = {'band': band,\n                            'href': item[href]\n                           }\n        i_dict_array.append(i_dict)\n    print(i_dict_array)\n    new_gdf = pd.DataFrame(i_dict_array)\n    return new_gdf",

"_____no_output_____"

],
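What `asset_gdf` does to each row is easier to see on a hand-made record. The sketch below mimics the same reshaping with plain dictionaries instead of a GeoDataFrame; `collect_band_assets`, the scene ID, and the `s3://example-bucket/...` paths are placeholders invented for illustration, not real Landsat assets.

```python
def collect_band_assets(items, bands):
    """For each flat item, gather `assets.<band>.href` into one nested dict per band."""
    rows = []
    for item in items:
        row = {"id": item["id"]}
        for band in bands:
            # Same key pattern that asset_gdf reads: 'assets.<band>.href'
            row[band] = {"band": band, "href": item[f"assets.{band}.href"]}
        rows.append(row)
    return rows


# One flattened STAC-like record with made-up values.
items = [
    {
        "id": "scene-a",
        "assets.red.href": "s3://example-bucket/a_red.tif",
        "assets.blue.href": "s3://example-bucket/a_blue.tif",
    },
]
rows = collect_band_assets(items, ["red", "blue"])
```

Each output row then carries one column per band, which is the layout `create_dataset` expects when it reads `row[band]['href']`.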

[

"gdf3",

"_____no_output_____"

],

[

"bands=['blue','green','red','nir08','swir16','swir22','qa_pixel']\ngdf4=asset_gdf(gdf3,bands)",

"_____no_output_____"

],

[

"gdf4.id",

"_____no_output_____"

],

[

"datasets = []\nfor i,row in gdf4.iterrows():\n    try:\n        print('loading....', row.id)\n        \n        ds = create_dataset(row,bands)\n        datasets.append(ds)\n    except Exception as e:\n        print('Error loading, skipping')\n        print(e)",

"_____no_output_____"

],

[

"! aws s3 ls --request-payer requester s3://usgs-landsat/collection02/oli-tirs/2020/CU/029/003/LC08_CU_029003_20200419_20210504_02/LC08_CU_029003_20200419_20210504_02_SR_B2.TIF",

"_____no_output_____"

],

[

"! aws s3 ls --request-payer requester s3://usgs-landsat/collection02/oli-tirs/2020/CU/029/003/",

"_____no_output_____"

],

[

"! aws s3 ls --request-payer requester s3://usgs-landsat-ard/collection02/oli-tirs/2020/CU/029/003/",

"_____no_output_____"

],

[

"datasets",

"_____no_output_____"

],

[

"! date",

"_____no_output_____"

],

[

"gdf3",

"_____no_output_____"

],

[

"gdf3.keys()",

"_____no_output_____"

],

[

"gdf3['properties.start_datetime'].tolist()",

"_____no_output_____"

],

[

"len(gdf3)",

"_____no_output_____"

],

[

"gdf3.index.tolist()",

"_____no_output_____"

],

[

"from datetime import datetime\nmy_date_list = gdf3.index.tolist()\nmy_str_date_list=[]\nfor dt in my_date_list:\n    print(dt)\n    str_dt = dt.strftime('%Y-%m-%d')\n    print(str_dt)\n    my_str_date_list.append(str_dt)",

"_____no_output_____"

],
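The loop above relies on each index entry exposing `strftime`, which suggests the index holds datetime-like values. Under that assumption, the same conversion can be sketched as a list comprehension; the two timestamps here are invented stand-ins for `gdf3.index.tolist()`.

```python
from datetime import datetime

# Stand-in values; in the notebook these would come from gdf3.index.tolist().
timestamps = [datetime(2020, 4, 19, 15, 30), datetime(2020, 5, 5, 15, 31)]

# Keep only the calendar date, dropping the time of day.
date_strings = [ts.strftime("%Y-%m-%d") for ts in timestamps]
```

Truncating to the date is convenient for labelling a time axis, though two scenes acquired on the same day would end up with identical labels.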

[

"DS = xr.concat(datasets, dim= pd.DatetimeIndex(my_str_date_list, name='time'))",

"_____no_output_____"

],

[

"print('Dataset Size (Gb): ', DS.nbytes/1e9)",

"_____no_output_____"

],

[

"DS",

"_____no_output_____"

],

[

"DS['red'].isel(time=0).plot()",

"_____no_output_____"

],

[

"DS['red'][1].plot()",

"_____no_output_____"

],

[

"DS['red'][15].plot()",

"_____no_output_____"

],

[

"ds_mini = DS.isel(x=slice(0,5000,10), y=slice(0,5000,10))",

"_____no_output_____"

],
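The `isel` call above selects by integer position, and a `slice` with a step behaves as it does for an ordinary Python sequence. A small sketch with a plain list shows how much data survives; the 6000-element axis length is an assumption made for the example, not the actual tile size.

```python
# Stand-in for the pixel positions along one axis (the length is made up).
x_positions = list(range(6000))

# Same slice as in the notebook: first 5000 positions, keeping every 10th.
subsampled = x_positions[slice(0, 5000, 10)]
```

Applied to both `x` and `y`, this keeps at most 500 by 500 pixels per band and time step, roughly a hundredth of the pixels in the 5000 by 5000 window, which is what makes the quick-look plots that follow cheap to draw.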

[

"ds_mini",

"_____no_output_____"

],

[

"%matplotlib inline\n\ndisplay_color = 'blue'\n# \nds_mini[display_color].plot.imshow('x','y', col='time', col_wrap=6, cmap='viridis')\n#ds_mini[display_color].plot.imshow('x','y', col='time', col_wrap=6, cmap='viridis', vmin=7000, vmax=19000)",

"_____no_output_____"

],

[

"ds_mini.hvplot()",

"_____no_output_____"

],

[

"ds_mini['red'][0].hvplot.image(rasterize=True)",

"_____no_output_____"

],

[

"ds_mini['red'][0].plot()",

"_____no_output_____"

],

[

"d2 = ds_mini.transpose('time', 'y', 'x')",

"_____no_output_____"

],

[

"d2['red'].hvplot.image(rasterize=True)",

"_____no_output_____"

],

[

"d2",

"_____no_output_____"

],

[

"dir(d2)",

"_____no_output_____"

],

[

"#d2.swap_dims({'time')",

"_____no_output_____"

],

[

"#help(d2.swap_dims)",

"_____no_output_____"

],

[

"#help(d2.rename)",

"_____no_output_____"

],

[

"#d2.swap_dims({'time':'x'})",

"_____no_output_____"

],

[

"#d2.dims",

"_____no_output_____"

],

[

"#d2.drop_dims()",

"_____no_output_____"

],

[

"help(d2.drop_dims)",

"_____no_output_____"

],

[

"d2['red'].hvplot.image(rasterize=True, x='x', y='y', width=600, height=400, cmap='viridis', clim=(4000,20000))",

"_____no_output_____"

],

[

"help(d2['red'].hvplot.image)",

"_____no_output_____"

],

[

"d2['qa_pixel'].hvplot.image(rasterize=True, x='x', y='y', width=600, height=400, cmap='viridis')",

"_____no_output_____"

],

[

"DS.time.attrs = {} #this allowed the nc to be written\n#ds.SCL.attrs = {}\n\nDS.to_netcdf('~/maine_one_tile_swir_also.nc')",

"_____no_output_____"

],

[

"! ls -lh ~/*.nc",

"_____no_output_____"

]

]

] |

[

"code"

] |

[

[

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code"

]

] |

4a9dbbb8480f87c44abe72c7e961fd245760a9a4

| 141,906 |

ipynb

|

Jupyter Notebook

|

notebooks/Troubleshooting.ipynb

|

granttremblay/hyperscreen

|

f39029cdd835ffefb9a9a483f264f5cd76b7f58c

|

[

"MIT"

] | 2 |

2020-07-01T12:24:38.000Z

|

2021-05-16T23:49:07.000Z

|

notebooks/Troubleshooting.ipynb

|

granttremblay/hyperscreen

|

f39029cdd835ffefb9a9a483f264f5cd76b7f58c

|

[

"MIT"

] | 15 |

2019-10-12T02:38:16.000Z

|

2020-05-21T01:20:31.000Z

|

notebooks/Troubleshooting.ipynb

|

granttremblay/hyperscreen

|

f39029cdd835ffefb9a9a483f264f5cd76b7f58c

|

[

"MIT"

] | null | null | null | 94.983936 | 23,740 | 0.605048 |

[

[

[

"\nimport warnings\n\nimport matplotlib as mpl\nimport matplotlib.pyplot as plt\nfrom matplotlib.colors import LogNorm\n\nfrom astropy.io import fits\nfrom astropy.table import Table\n\nimport pandas as pd\nimport numpy as np\nnp.seterr(divide='ignore')\n\n\nwarnings.filterwarnings(\"ignore\", category=RuntimeWarning)\n\n\nclass HRCevt1:\n '''\n A more robust HRC EVT1 file. Includes explicit\n columns for every status bit, as well as calculated\n columns for the f_p, f_b plane for your boomerangs.\n Check out that cool new filtering algorithm!\n '''\n\n def __init__(self, evt1file):\n\n # Do a standard read in of the EVT1 fits table\n self.filename = evt1file\n self.hdulist = fits.open(evt1file)\n self.data = Table(self.hdulist[1].data)\n self.header = self.hdulist[1].header\n self.gti = self.hdulist[2].data\n self.hdulist.close() # Don't forget to close your fits file!\n\n fp_u, fb_u, fp_v, fb_v = self.calculate_fp_fb()\n\n self.gti.starts = self.gti['START']\n self.gti.stops = self.gti['STOP']\n\n self.gtimask = []\n # for start, stop in zip(self.gti.starts, self.gti.stops):\n # self.gtimask = (self.data[\"time\"] > start) & (self.data[\"time\"] < stop)\n\n self.gtimask = (self.data[\"time\"] > self.gti.starts[0]) & (\n self.data[\"time\"] < self.gti.stops[-1])\n\n self.data[\"fp_u\"] = fp_u\n self.data[\"fb_u\"] = fb_u\n self.data[\"fp_v\"] = fp_v\n self.data[\"fb_v\"] = fb_v\n\n # Make individual status bit columns with legible names\n self.data[\"AV3 corrected for ringing\"] = self.data[\"status\"][:, 0]\n self.data[\"AU3 corrected for ringing\"] = self.data[\"status\"][:, 1]\n self.data[\"Event impacted by prior event (piled up)\"] = self.data[\"status\"][:, 2]\n # Bit 4 (Python 3) is spare\n self.data[\"Shifted event time\"] = self.data[\"status\"][:, 4]\n self.data[\"Event telemetered in NIL mode\"] = self.data[\"status\"][:, 5]\n self.data[\"V axis not triggered\"] = self.data[\"status\"][:, 6]\n self.data[\"U axis not triggered\"] = 
self.data[\"status\"][:, 7]\n self.data[\"V axis center blank event\"] = self.data[\"status\"][:, 8]\n self.data[\"U axis center blank event\"] = self.data[\"status\"][:, 9]\n self.data[\"V axis width exceeded\"] = self.data[\"status\"][:, 10]\n self.data[\"U axis width exceeded\"] = self.data[\"status\"][:, 11]\n self.data[\"Shield PMT active\"] = self.data[\"status\"][:, 12]\n # Bit 14 (Python 13) is hardware spare\n self.data[\"Upper level discriminator not exceeded\"] = self.data[\"status\"][:, 14]\n self.data[\"Lower level discriminator not exceeded\"] = self.data[\"status\"][:, 15]\n self.data[\"Event in bad region\"] = self.data[\"status\"][:, 16]\n self.data[\"Amp total on V or U = 0\"] = self.data[\"status\"][:, 17]\n self.data[\"Incorrect V center\"] = self.data[\"status\"][:, 18]\n self.data[\"Incorrect U center\"] = self.data[\"status\"][:, 19]\n self.data[\"PHA ratio test failed\"] = self.data[\"status\"][:, 20]\n self.data[\"Sum of 6 taps = 0\"] = self.data[\"status\"][:, 21]\n self.data[\"Grid ratio test failed\"] = self.data[\"status\"][:, 22]\n self.data[\"ADC sum on V or U = 0\"] = self.data[\"status\"][:, 23]\n self.data[\"PI exceeding 255\"] = self.data[\"status\"][:, 24]\n self.data[\"Event time tag is out of sequence\"] = self.data[\"status\"][:, 25]\n self.data[\"V amp flatness test failed\"] = self.data[\"status\"][:, 26]\n self.data[\"U amp flatness test failed\"] = self.data[\"status\"][:, 27]\n self.data[\"V amp saturation test failed\"] = self.data[\"status\"][:, 28]\n self.data[\"U amp saturation test failed\"] = self.data[\"status\"][:, 29]\n self.data[\"V hyperbolic test failed\"] = self.data[\"status\"][:, 30]\n self.data[\"U hyperbolic test failed\"] = self.data[\"status\"][:, 31]\n self.data[\"Hyperbola test passed\"] = np.logical_not(np.logical_or(\n self.data['U hyperbolic test failed'], self.data['V hyperbolic test failed']))\n self.data[\"Hyperbola test failed\"] = np.logical_or(\n self.data['U hyperbolic test failed'], 
 self.data['V hyperbolic test failed'])\n\n self.obsid = self.header[\"OBS_ID\"]\n self.obs_date = self.header[\"DATE\"]\n self.target = self.header[\"OBJECT\"]\n self.detector = self.header[\"DETNAM\"]\n self.grating = self.header[\"GRATING\"]\n self.exptime = self.header[\"EXPOSURE\"]\n\n self.numevents = len(self.data[\"time\"])\n self.goodtimeevents = len(self.data[\"time\"][self.gtimask])\n self.badtimeevents = self.numevents - self.goodtimeevents\n\n # An event passes only if neither the U nor the V hyperbolic test failed\n self.hyperbola_passes = np.sum(np.logical_not(np.logical_or(\n self.data['U hyperbolic test failed'], self.data['V hyperbolic test failed'])))\n self.hyperbola_failures = np.sum(np.logical_or(\n self.data['U hyperbolic test failed'], self.data['V hyperbolic test failed']))\n\n if self.hyperbola_passes + self.hyperbola_failures != self.numevents:\n print(\"Warning: Number of Hyperbola Test Failures and Passes ({}) does not equal total number of events ({}).\".format(\n self.hyperbola_passes + self.hyperbola_failures, self.numevents))\n\n # Multidimensional columns don't grok with Pandas\n self.data.remove_column('status')\n self.data = self.data.to_pandas()\n\n def __str__(self):\n return \"HRC EVT1 object with {} events. Data is packaged as a Pandas Dataframe\".format(self.numevents)\n\n def calculate_fp_fb(self):\n '''\n Calculate the Fine Position (fp) and normalized central tap\n amplitude (fb) for the HRC U- and V- axes.\n\n Parameters\n ----------\n data : Astropy Table\n Table object made from an HRC evt1 event list. 
Must include the\n au1, au2, au3 and av1, av2, av3 columns.\n\n Returns\n -------\n fp_u, fb_u, fp_v, fb_v: float\n Calculated fine positions and normalized central tap amplitudes\n for the HRC U- and V- axes\n '''\n a_u = self.data[\"au1\"] # otherwise known as \"a1\"\n b_u = self.data[\"au2\"] # \"a2\"\n c_u = self.data[\"au3\"] # \"a3\"\n\n a_v = self.data[\"av1\"]\n b_v = self.data[\"av2\"]\n c_v = self.data[\"av3\"]\n\n with np.errstate(invalid='ignore'):\n # Do the U axis\n fp_u = ((c_u - a_u) / (a_u + b_u + c_u))\n fb_u = b_u / (a_u + b_u + c_u)\n\n # Do the V axis\n fp_v = ((c_v - a_v) / (a_v + b_v + c_v))\n fb_v = b_v / (a_v + b_v + c_v)\n\n return fp_u, fb_u, fp_v, fb_v\n\n def threshold(self, img, bins):\n nozero_img = img.copy()\n nozero_img[img == 0] = np.nan\n\n # This is a really stupid way to threshold\n median = np.nanmedian(nozero_img)\n thresh = median*5\n\n thresh_img = nozero_img\n thresh_img[thresh_img < thresh] = np.nan\n thresh_img[:int(bins[1]/2), :] = np.nan\n # thresh_img[:,int(bins[1]-5):] = np.nan\n return thresh_img\n\n\n def hyperscreen(self):\n '''\n Grant Tremblay's new algorithm. 
Screens events on a tap-by-tap basis.\n '''\n\n data = self.data\n\n #taprange = range(data['crsu'].min(), data['crsu'].max() + 1)\n taprange_u = range(data['crsu'].min() -1 , data['crsu'].max() + 1)\n taprange_v = range(data['crsv'].min() - 1, data['crsv'].max() + 1)\n\n bins = [200, 200] # number of bins\n\n # Instantiate these empty dictionaries to hold our results\n u_axis_survivals = {}\n v_axis_survivals = {}\n\n for tap in taprange_u:\n # Do the U axis\n tapmask_u = data[data['crsu'] == tap].index.values\n if len(tapmask_u) < 2:\n continue\n keep_u = np.isfinite(data['fb_u'][tapmask_u])\n\n hist_u, xbounds_u, ybounds_u = np.histogram2d(\n data['fb_u'][tapmask_u][keep_u], data['fp_u'][tapmask_u][keep_u], bins=bins)\n thresh_hist_u = self.threshold(hist_u, bins=bins)\n\n posx_u = np.digitize(data['fb_u'][tapmask_u], xbounds_u)\n posy_u = np.digitize(data['fp_u'][tapmask_u], ybounds_u)\n hist_mask_u = (posx_u > 0) & (posx_u <= bins[0]) & (\n posy_u > -1) & (posy_u <= bins[1])\n\n # Values of the histogram where the points are\n hhsub_u = thresh_hist_u[posx_u[hist_mask_u] -\n 1, posy_u[hist_mask_u] - 1]\n pass_fb_u = data['fb_u'][tapmask_u][hist_mask_u][np.isfinite(\n hhsub_u)]\n\n u_axis_survivals[\"U Axis Tap {:02d}\".format(\n tap)] = pass_fb_u.index.values\n\n for tap in taprange_v:\n # Now do the V axis:\n tapmask_v = data[data['crsv'] == tap].index.values\n if len(tapmask_v) < 2:\n continue\n keep_v = np.isfinite(data['fb_v'][tapmask_v])\n\n hist_v, xbounds_v, ybounds_v = np.histogram2d(\n data['fb_v'][tapmask_v][keep_v], data['fp_v'][tapmask_v][keep_v], bins=bins)\n thresh_hist_v = self.threshold(hist_v, bins=bins)\n\n posx_v = np.digitize(data['fb_v'][tapmask_v], xbounds_v)\n posy_v = np.digitize(data['fp_v'][tapmask_v], ybounds_v)\n hist_mask_v = (posx_v > 0) & (posx_v <= bins[0]) & (\n posy_v > -1) & (posy_v <= bins[1])\n\n # Values of the histogram where the points are\n hhsub_v = thresh_hist_v[posx_v[hist_mask_v] -\n 1, posy_v[hist_mask_v] - 1]\n 
pass_fb_v = data['fb_v'][tapmask_v][hist_mask_v][np.isfinite(\n hhsub_v)]\n\n v_axis_survivals[\"V Axis Tap {:02d}\".format(\n tap)] = pass_fb_v.index.values\n\n # Done looping over taps\n\n u_all_survivals = np.concatenate(\n [x for x in u_axis_survivals.values()])\n v_all_survivals = np.concatenate(\n [x for x in v_axis_survivals.values()])\n\n # If the event passes both U- and V-axis tests, it survives\n all_survivals = np.intersect1d(u_all_survivals, v_all_survivals)\n survival_mask = np.isin(self.data.index.values, all_survivals)\n failure_mask = np.logical_not(survival_mask)\n\n num_survivals = sum(survival_mask)\n num_failures = sum(failure_mask)\n\n percent_tapscreen_rejected = round(\n ((num_failures / self.numevents) * 100), 2)\n\n # Do a sanity check to look for lost events. Shouldn't be any.\n if num_survivals + num_failures != self.numevents:\n print(\"WARNING: Total Number of survivals and failures does \\\n not equal total events in the EVT1 file. Something is wrong!\")\n\n legacy_hyperbola_test_survivals = sum(\n self.data['Hyperbola test passed'])\n legacy_hyperbola_test_failures = sum(\n self.data['Hyperbola test failed'])\n percent_legacy_hyperbola_test_rejected = round(\n ((legacy_hyperbola_test_failures / self.goodtimeevents) * 100), 2)\n\n percent_improvement_over_legacy_test = round(\n (percent_tapscreen_rejected - percent_legacy_hyperbola_test_rejected), 2)\n\n hyperscreen_results_dict = {\"ObsID\": self.obsid,\n \"Target\": self.target,\n \"Exposure Time\": self.exptime,\n \"Detector\": self.detector,\n \"Number of Events\": self.numevents,\n \"Number of Good Time Events\": self.goodtimeevents,\n \"U Axis Survivals by Tap\": u_axis_survivals,\n \"V Axis Survivals by Tap\": v_axis_survivals,\n \"U Axis All Survivals\": u_all_survivals,\n \"V Axis All Survivals\": v_all_survivals,\n \"All Survivals (event indices)\": all_survivals,\n \"All Survivals (boolean mask)\": survival_mask,\n \"All Failures (boolean mask)\": failure_mask,\n \"Percent 
rejected by Tapscreen\": percent_tapscreen_rejected,\n \"Percent rejected by Hyperbola\": percent_legacy_hyperbola_test_rejected,\n \"Percent improvement\": percent_improvement_over_legacy_test\n }\n\n return hyperscreen_results_dict\n\n def hyperbola(self, fb, a, b, h):\n '''Given the normalized central tap amplitude, a, b, and h,\n return an array of length len(fb) that gives a hyperbola.'''\n hyperbola = b * np.sqrt(((fb - h)**2 / a**2) - 1)\n\n return hyperbola\n\n def legacy_hyperbola_test(self, tolerance=0.035):\n '''\n Apply the hyperbolic test.\n '''\n\n # Remind the user what tolerance they're using\n # print(\"{0: <25}| Using tolerance = {1}\".format(\" \", tolerance))\n\n # Set hyperbolic coefficients, depending on whether this is HRC-I or -S\n if self.detector == \"HRC-I\":\n a_u = 0.3110\n b_u = 0.3030\n h_u = 1.0580\n\n a_v = 0.3050\n b_v = 0.2730\n h_v = 1.1\n # print(\"{0: <25}| Using HRC-I hyperbolic coefficients: \".format(\" \"))\n # print(\"{0: <25}| Au={1}, Bu={2}, Hu={3}\".format(\" \", a_u, b_u, h_u))\n # print(\"{0: <25}| Av={1}, Bv={2}, Hv={3}\".format(\" \", a_v, b_v, h_v))\n\n if self.detector == \"HRC-S\":\n a_u = 0.2706\n b_u = 0.2620\n h_u = 1.0180\n\n a_v = 0.2706\n b_v = 0.2480\n h_v = 1.0710\n # print(\"{0: <25}| Using HRC-S hyperbolic coefficients: \".format(\" \"))\n # print(\"{0: <25}| Au={1}, Bu={2}, Hu={3}\".format(\" \", a_u, b_u, h_u))\n # print(\"{0: <25}| Av={1}, Bv={2}, Hv={3}\".format(\" \", a_v, b_v, h_v))\n\n # Set the tolerance boundary (\"width\" of the hyperbolic region)\n\n h_u_lowerbound = h_u * (1 + tolerance)\n h_u_upperbound = h_u * (1 - tolerance)\n\n h_v_lowerbound = h_v * (1 + tolerance)\n h_v_upperbound = h_v * (1 - tolerance)\n\n # Compute the Hyperbolae\n with np.errstate(invalid='ignore'):\n zone_u_fit = self.hyperbola(self.data[\"fb_u\"], a_u, b_u, h_u)\n zone_u_lowerbound = self.hyperbola(\n self.data[\"fb_u\"], a_u, b_u, h_u_lowerbound)\n zone_u_upperbound = self.hyperbola(\n self.data[\"fb_u\"], a_u, 
b_u, h_u_upperbound)\n\n zone_v_fit = self.hyperbola(self.data[\"fb_v\"], a_v, b_v, h_v)\n zone_v_lowerbound = self.hyperbola(\n self.data[\"fb_v\"], a_v, b_v, h_v_lowerbound)\n zone_v_upperbound = self.hyperbola(\n self.data[\"fb_v\"], a_v, b_v, h_v_upperbound)\n\n zone_u = [zone_u_lowerbound, zone_u_upperbound]\n zone_v = [zone_v_lowerbound, zone_v_upperbound]\n\n # Apply the masks\n # print(\"{0: <25}| Hyperbolic masks for U and V axes computed\".format(\"\"))\n\n with np.errstate(invalid='ignore'):\n # print(\"{0: <25}| Creating U-axis mask\".format(\"\"), end=\" |\")\n between_u = np.logical_not(np.logical_and(\n self.data[\"fp_u\"] < zone_u[1], self.data[\"fp_u\"] > -1 * zone_u[1]))\n not_beyond_u = np.logical_and(\n self.data[\"fp_u\"] < zone_u[0], self.data[\"fp_u\"] > -1 * zone_u[0])\n condition_u_final = np.logical_and(between_u, not_beyond_u)\n\n # print(\" Creating V-axis mask\")\n between_v = np.logical_not(np.logical_and(\n self.data[\"fp_v\"] < zone_v[1], self.data[\"fp_v\"] > -1 * zone_v[1]))\n not_beyond_v = np.logical_and(\n self.data[\"fp_v\"] < zone_v[0], self.data[\"fp_v\"] > -1 * zone_v[0])\n condition_v_final = np.logical_and(between_v, not_beyond_v)\n\n mask_u = condition_u_final\n mask_v = condition_v_final\n\n hyperzones = {\"zone_u_fit\": zone_u_fit,\n \"zone_u_lowerbound\": zone_u_lowerbound,\n \"zone_u_upperbound\": zone_u_upperbound,\n \"zone_v_fit\": zone_v_fit,\n \"zone_v_lowerbound\": zone_v_lowerbound,\n \"zone_v_upperbound\": zone_v_upperbound}\n\n hypermasks = {\"mask_u\": mask_u, \"mask_v\": mask_v}\n\n # print(\"{0: <25}| Hyperbolic masks created\".format(\"\"))\n # print(\"{0: <25}| \".format(\"\"))\n return hyperzones, hypermasks\n\n\n def boomerang(self, mask=None, show=True, plot_legacy_zone=True, title=None, cmap=None, savepath=None, create_subplot=False, ax=None, rasterized=True):\n\n # You can plot the image on axes of a subplot by passing\n # that axis to this function. 
Here are some switches to enable that.\n\n if create_subplot is False:\n self.fig, self.ax = plt.subplots(figsize=(12, 8))\n elif create_subplot is True:\n if ax is None:\n self.ax = plt.gca()\n else:\n self.ax = ax\n\n if cmap is None:\n cmap = 'plasma'\n\n if mask is not None:\n self.ax.scatter(self.data['fb_u'], self.data['fp_u'],\n c=self.data['sumamps'], cmap='bone', s=0.3, alpha=0.8, rasterized=rasterized)\n\n frame = self.ax.scatter(self.data['fb_u'][mask], self.data['fp_u'][mask],\n c=self.data['sumamps'][mask], cmap=cmap, s=0.5, rasterized=rasterized)\n\n else:\n frame = self.ax.scatter(self.data['fb_u'], self.data['fp_u'],\n c=self.data['sumamps'], cmap=cmap, s=0.5, rasterized=rasterized)\n\n if plot_legacy_zone is True:\n hyperzones, hypermasks = self.legacy_hyperbola_test(\n tolerance=0.035)\n self.ax.plot(self.data[\"fb_u\"], hyperzones[\"zone_u_lowerbound\"],\n 'o', markersize=0.3, color='black', alpha=0.8, rasterized=rasterized)\n self.ax.plot(self.data[\"fb_u\"], -1 * hyperzones[\"zone_u_lowerbound\"],\n 'o', markersize=0.3, color='black', alpha=0.8, rasterized=rasterized)\n\n self.ax.plot(self.data[\"fb_u\"], hyperzones[\"zone_u_upperbound\"],\n 'o', markersize=0.3, color='black', alpha=0.8, rasterized=rasterized)\n self.ax.plot(self.data[\"fb_u\"], -1 * hyperzones[\"zone_u_upperbound\"],\n 'o', markersize=0.3, color='black', alpha=0.8, rasterized=rasterized)\n\n self.ax.grid(False)\n\n if title is None:\n self.ax.set_title('{} | {} | ObsID {} | {} ksec | {} counts'.format(\n self.target, self.detector, self.obsid, round(self.exptime / 1000, 1), self.numevents))\n else:\n self.ax.set_title(title)\n\n self.ax.set_ylim(-1.1, 1.1)\n self.ax.set_xlim(-0.1, 1.1)\n\n self.ax.set_ylabel(r'Fine Position $f_p$ $(C-A)/(A + B + C)$')\n self.ax.set_xlabel(\n r'Normalized Central Tap Amplitude $f_b$ $B / (A+B+C)$')\n\n if create_subplot is False:\n self.cbar = plt.colorbar(frame, pad=-0.005)\n self.cbar.set_label(\"SUMAMPS\")\n\n if show is True:\n 
plt.show()\n\n if savepath is not None:\n plt.savefig(savepath, dpi=150, bbox_inches='tight')\n print('Saved boomerang figure to: {}'.format(savepath))\n\n\n def image(self, masked_x=None, masked_y=None, xlim=None, ylim=None, detcoords=False, title=None, cmap=None, show=True, savepath=None, create_subplot=False, ax=None):\n '''\n Create a quicklook image, in detector or sky coordinates, of the \n observation. The image will be binned to 400x400. \n '''\n\n # Create the 2D histogram\n nbins = (400, 400)\n\n if masked_x is not None and masked_y is not None:\n x = masked_x\n y = masked_y\n img_data, yedges, xedges = np.histogram2d(y, x, nbins)\n else:\n if detcoords is False:\n x = self.data['x'][self.gtimask]\n y = self.data['y'][self.gtimask]\n elif detcoords is True:\n x = self.data['detx'][self.gtimask]\n y = self.data['dety'][self.gtimask]\n img_data, yedges, xedges = np.histogram2d(y, x, nbins)\n\n extent = [xedges[0], xedges[-1], yedges[0], yedges[-1]]\n\n # Create the Figure\n styleplots()\n\n # You can plot the image on axes of a subplot by passing\n # that axis to this function. 
Here are some switches to enable that.\n if create_subplot is False:\n self.fig, self.ax = plt.subplots()\n elif create_subplot is True:\n if ax is None:\n self.ax = plt.gca()\n else:\n self.ax = ax\n\n self.ax.grid(False)\n\n if cmap is None:\n cmap = 'viridis'\n\n self.ax.imshow(img_data, extent=extent, norm=LogNorm(),\n interpolation=None, cmap=cmap, origin='lower')\n\n if title is None:\n self.ax.set_title(\"ObsID {} | {} | {} | {:,} events\".format(\n self.obsid, self.target, self.detector, self.goodtimeevents))\n else:\n self.ax.set_title(\"{}\".format(title))\n if detcoords is False:\n self.ax.set_xlabel(\"Sky X\")\n self.ax.set_ylabel(\"Sky Y\")\n elif detcoords is True:\n self.ax.set_xlabel(\"Detector X\")\n self.ax.set_ylabel(\"Detector Y\")\n \n if xlim is not None:\n self.ax.set_xlim(xlim)\n if ylim is not None:\n self.ax.set_ylim(ylim)\n\n if show is True:\n plt.show(block=True)\n\n if savepath is not None:\n plt.savefig('{}'.format(savepath))\n print(\"Saved image to {}\".format(savepath))\n\n\ndef styleplots():\n\n mpl.rcParams['agg.path.chunksize'] = 10000\n\n # Make things pretty\n plt.style.use('ggplot')\n\n labelsizes = 10\n\n plt.rcParams['font.size'] = labelsizes\n plt.rcParams['axes.titlesize'] = 12\n plt.rcParams['axes.labelsize'] = labelsizes\n plt.rcParams['xtick.labelsize'] = labelsizes\n plt.rcParams['ytick.labelsize'] = labelsizes\n",

"_____no_output_____"

],
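The `hyperbola` method in the listing above evaluates `b * sqrt((fb - h)**2 / a**2 - 1)`, which is only real where `|fb - h| >= a`. The following is a minimal standalone sketch of that behaviour, assuming only NumPy; the coefficients are the HRC-S U-axis values from the listing, while the sample `fb` values are made up for illustration.

```python
import numpy as np


def hyperbola(fb, a, b, h):
    """Evaluate b * sqrt((fb - h)^2 / a^2 - 1) element-wise.

    Where the argument of the square root is negative the result is NaN,
    which is why the original code wraps the call in np.errstate.
    """
    fb = np.asarray(fb, dtype=float)
    with np.errstate(invalid="ignore"):
        return b * np.sqrt((fb - h) ** 2 / a ** 2 - 1)


# HRC-S U-axis coefficients copied from the listing above
a_u, b_u, h_u = 0.2706, 0.2620, 1.0180

# Illustrative (assumed) values of the normalized central tap amplitude
fb = np.array([0.2, 0.5, 0.9])
curve = hyperbola(fb, a_u, b_u, h_u)
print(curve)
```

The last sample lies closer to `h_u` than `a_u`, so it falls outside the domain of the hyperbola and comes back as NaN. Comparisons against NaN evaluate to False, which is how such events drop out of the boolean masks built in `legacy_hyperbola_test`.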

[

"from astropy.io import fits\nimport os",

"_____no_output_____"

],

[

"os.listdir('../tests/data/')",

"_____no_output_____"

],

[

"fitsfile = '../tests/data/hrcS_evt1_testfile.fits.gz'\nobs = HRCevt1(fitsfile)",

"_____no_output_____"

],

[

"obs.image(obs.data['detx'][obs.gtimask], obs.data['dety'][obs.gtimask], xlim=(26000, 41000), ylim=(31500, 34000))",

"_____no_output_____"

],

[

"results = obs.hyperscreen()",

"_____no_output_____"

],

[

"obs.image(obs.data['detx'][results['All Failures (boolean mask)']], obs.data['dety'][results['All Failures (boolean mask)']], xlim=(26000, 41000), ylim=(31500, 34000))",

"_____no_output_____"

],

[

"obs.data['crsv'].min()",

"_____no_output_____"

],

[

"obs.data['crsv'].max()",

"_____no_output_____"

],

[

"obs.data['crsv']",

"_____no_output_____"

],

[

"obs.numevents",

"_____no_output_____"

],

[

"from astropy.io import fits",

"_____no_output_____"

],

[

"header = fits.getheader(fitsfile, 1)",

"_____no_output_____"

],

[

"header",

"_____no_output_____"

],

[

"from hyperscreen import hypercore",

"_____no_output_____"

]

]

] |

[

"code"

] |

[

[

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code",

"code"

]

] |

4a9dee80986eec1f155aa0cb04810f40729468be

| 21,775 |

ipynb

|

Jupyter Notebook

|

notebooks/7. Parameterizing with Continuous Variables.ipynb

|

sean578/pgmpy_notebook

|

102549516322f47bd955fa165c8ae1d6786bfa77

|

[

"MIT"

] | 326 |

2015-03-12T15:14:17.000Z

|

2022-03-24T15:02:44.000Z

|

notebooks/7. Parameterizing with Continuous Variables.ipynb

|

sean578/pgmpy_notebook

|

102549516322f47bd955fa165c8ae1d6786bfa77

|

[

"MIT"

] | 48 |

2015-03-06T09:42:17.000Z

|

2022-03-18T13:22:40.000Z

|

notebooks/7. Parameterizing with Continuous Variables.ipynb

|

sean578/pgmpy_notebook

|

102549516322f47bd955fa165c8ae1d6786bfa77

|

[

"MIT"

] | 215 |

2015-02-11T13:28:22.000Z

|

2022-01-17T08:58:17.000Z

| 26.171875 | 691 | 0.53341 |

[

[

[

"# Parameterizing with Continuous Variables",

"_____no_output_____"

]

],

[

[

"from IPython.display import Image",

"_____no_output_____"

]

],

[

[

"## Continuous Factors",

"_____no_output_____"

],

[

"1. Base Class for Continuous Factors\n2. Joint Gaussian Distributions\n3. Canonical Factors\n4. Linear Gaussian CPD",

"_____no_output_____"

],

[

"In many situations, some variables are best modeled as taking values in some continuous space. Examples include variables such as position, velocity, temperature, and pressure. Clearly, we cannot use a table representation in this case. \n\nNothing in the formulation of a Bayesian network requires that we restrict attention to discrete variables. The only requirement is that the CPD, P(X | Y1, Y2, ... Yn) represent, for every assignment of values y1 ∈ Val(Y1), y2 ∈ Val(Y2), ..., yn ∈ Val(Yn), a distribution over X. In this case, X might be continuous, in which case the CPD would need to represent distributions over a continuum of values; we might also have X’s parents continuous, so that the CPD would also need to represent a continuum of different probability distributions. There exist implicit representations for CPDs of this type, allowing us to apply all the network machinery for the continuous case as well.",

"_____no_output_____"

],

[

"### Base Class for Continuous Factors",

"_____no_output_____"

],

[

"This class will behave as a base class for the continuous factor representations. All the present and future factor classes will be derived from this base class. We need to specify the variable names and a pdf function to initialize this class.",

"_____no_output_____"

]

],

[

[

"import numpy as np\nfrom scipy.special import beta\n\n# Two-variable Dirichlet distribution with alpha = (1, 2)\ndef drichlet_pdf(x, y):\n return (np.power(x, 1)*np.power(y, 2))/beta(x, y)\n\nfrom pgmpy.factors.continuous import ContinuousFactor\ndrichlet_factor = ContinuousFactor(['x', 'y'], drichlet_pdf)",

"_____no_output_____"

],

[

"drichlet_factor.scope(), drichlet_factor.assignment(5,6)",

"_____no_output_____"

]

],

[

[

"This class supports methods like **marginalize, reduce, product and divide**, just as the discrete factor classes do. One caveat is that these methods become inefficient when many variables are involved, so we resort to Gaussian or other approximations, which are discussed later.",

"_____no_output_____"

]

],

[

[

"def custom_pdf(x, y, z):\n return z*(np.power(x, 1)*np.power(y, 2))/beta(x, y)\n\ncustom_factor = ContinuousFactor(['x', 'y', 'z'], custom_pdf)",

"_____no_output_____"

],

[

"custom_factor.scope(), custom_factor.assignment(1, 2, 3)",

"_____no_output_____"

],

[

"custom_factor.reduce([('y', 2)])\ncustom_factor.scope(), custom_factor.assignment(1, 3)",

"_____no_output_____"

],

[

"from scipy.stats import multivariate_normal\n\nstd_normal_pdf = lambda *x: multivariate_normal.pdf(x, [0, 0], [[1, 0], [0, 1]])\nstd_normal = ContinuousFactor(['x1', 'x2'], std_normal_pdf)\nstd_normal.scope(), std_normal.assignment([1, 1])",

"_____no_output_____"

],

[

"std_normal.marginalize(['x2'])\nstd_normal.scope(), std_normal.assignment(1)",

"_____no_output_____"

],

[

"sn_pdf1 = lambda x: multivariate_normal.pdf([x], [0], [[1]])\nsn_pdf2 = lambda x1,x2: multivariate_normal.pdf([x1, x2], [0, 0], [[1, 0], [0, 1]])\nsn1 = ContinuousFactor(['x2'], sn_pdf1)\nsn2 = ContinuousFactor(['x1', 'x2'], sn_pdf2)\nsn3 = sn1 * sn2\nsn4 = sn2 / sn1\nsn3.assignment(0, 0), sn4.assignment(0, 0)",

"_____no_output_____"

]

],

[

[

"The ContinuousFactor class also has a method **discretize** that takes a pgmpy Discretizer class as input. It outputs a list of discrete probability masses, a Factor, or a TabularCPD object, depending on the discretization method used. Although there are currently no built-in discretization algorithms for multivariate distributions, users can always define their own Discretizer class by subclassing the pgmpy.BaseDiscretizer class.",

"_____no_output_____"

],

[

"### Joint Gaussian Distributions",

"_____no_output_____"

],

[

"In its most common representation, a multivariate Gaussian distribution over X1, ..., Xn is characterized by an n-dimensional mean vector μ and a symmetric n x n covariance matrix Σ. The density function is most commonly defined as:",

"_____no_output_____"

],

[

"$$\np(x) = \\dfrac{1}{(2\\pi)^{n/2}|Σ|^{1/2}} \\exp\\left[-\\dfrac{1}{2}(x-μ)^TΣ^{-1}(x-μ)\\right]\n$$\n",

"_____no_output_____"

],
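As a hedged illustration of the density formula above, here is a minimal NumPy sketch that evaluates it directly. The function name `gaussian_pdf` is an assumption made for this example and is not part of pgmpy; the mean and covariance are the ones used for `dis` in the next code cell.

```python
import numpy as np


def gaussian_pdf(x, mean, cov):
    """Evaluate the multivariate Gaussian density at a single point x."""
    x = np.asarray(x, dtype=float).ravel()
    mean = np.asarray(mean, dtype=float).ravel()
    cov = np.asarray(cov, dtype=float)
    n = mean.size
    diff = x - mean
    # (x - mu)^T Sigma^{-1} (x - mu), computed with a linear solve
    # rather than an explicit matrix inverse
    mahalanobis_sq = diff @ np.linalg.solve(cov, diff)
    normalizer = (2 * np.pi) ** (n / 2) * np.sqrt(np.linalg.det(cov))
    return np.exp(-0.5 * mahalanobis_sq) / normalizer


mean = [1, -3, 4]
cov = [[4, 2, -2], [2, 5, -5], [-2, -5, 8]]
density_at_origin = gaussian_pdf([0, 0, 0], mean, cov)
print(density_at_origin)
```

Using `np.linalg.solve` avoids forming the inverse explicitly, which is usually the more numerically stable choice for a single evaluation.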

[

"The class pgmpy.JointGaussianDistribution provides its representation. This is derived from the class pgmpy.ContinuousFactor. We need to specify the variable names, a mean vector and a covariance matrix for its initialization. It will automatically compute the pdf function given these parameters.",

"_____no_output_____"

]

],

[

[

"from pgmpy.factors.distributions import GaussianDistribution as JGD\ndis = JGD(['x1', 'x2', 'x3'], np.array([[1], [-3], [4]]),\n np.array([[4, 2, -2], [2, 5, -5], [-2, -5, 8]]))\ndis.variables",

"_____no_output_____"

],

[

"dis.mean",

"_____no_output_____"

],

[

"dis.covariance",

"_____no_output_____"

],

[

"dis.pdf([0,0,0])",

"_____no_output_____"

]

],

[

[

"This class overrides the basic operation methods **(marginalize, reduce, normalize, product and divide)** as these operations here are more efficient than the ones in its parent class. Most of these operations involve a matrix inversion, which is O(n^3) with respect to the number of variables.",

"_____no_output_____"

]

],

[

[

"dis1 = JGD(['x1', 'x2', 'x3'], np.array([[1], [-3], [4]]),\n np.array([[4, 2, -2], [2, 5, -5], [-2, -5, 8]]))\ndis2 = JGD(['x3', 'x4'], [1, 2], [[2, 3], [5, 6]])\ndis3 = dis1 * dis2\ndis3.variables",

"_____no_output_____"

],

[

"dis3.mean",

"_____no_output_____"

],

[

"dis3.covariance",

"_____no_output_____"

]

],

[

[

"The other methods can also be used in a similar fashion.",

"_____no_output_____"

],

[

"### Canonical Factors",

"_____no_output_____"

],

[

"While the Joint Gaussian representation is useful for certain sampling algorithms, a closer look reveals that it cannot be used directly in the sum-product algorithms. Why? Because operations like product and reduce, as mentioned above, involve matrix inversions at each step. \n\nSo, in order to compactly describe the intermediate factors in a Gaussian network without the costly matrix inversions at each step, a simple parametric representation known as the Canonical Factor is used. This representation is closed under the basic operations used in inference: factor product, factor division, factor reduction, and marginalization. Thus, we can define a set of simple data structures that allow the inference process to be performed. Moreover, the integration operation required by marginalization is always well defined, and it is guaranteed to produce a finite integral under certain conditions; when it is well defined, it has a simple analytical solution.\n\nA canonical form C(X; K, h, g) is defined as:",

"_____no_output_____"

],

[

"$$C(X; K,h,g) = exp(-0.5X^TKX + h^TX + g)$$",

"_____no_output_____"

],

[

"We can represent every Gaussian as a canonical form. Rewriting the joint Gaussian pdf we obtain,",

"_____no_output_____"

],

[

"N (μ; Σ) = C (K, h, g) where:",

"_____no_output_____"

],

[

"$$\nK = Σ^{-1}\n$$\n$$\nh = Σ^{-1}μ\n$$\n$$\ng = -0.5μ^TΣ^{-1}μ - \\log\\left((2π)^{n/2}|Σ|^{1/2}\\right)\n$$",

"_____no_output_____"

],
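The conversion above can be checked numerically. The sketch below assumes only NumPy; the helper names `gaussian_to_canonical` and `canonical_value` and the 2x2 mean and covariance are hypothetical, chosen for illustration, and are not pgmpy APIs.

```python
import numpy as np


def gaussian_to_canonical(mean, cov):
    """Return (K, h, g) such that C(x; K, h, g) equals the N(mean, cov) density."""
    mean = np.asarray(mean, dtype=float).reshape(-1, 1)
    cov = np.asarray(cov, dtype=float)
    n = mean.shape[0]
    K = np.linalg.inv(cov)          # K = Sigma^{-1}
    h = K @ mean                    # h = Sigma^{-1} mu
    log_normalizer = np.log((2 * np.pi) ** (n / 2) * np.sqrt(np.linalg.det(cov)))
    g = (-0.5 * (mean.T @ K @ mean)).item() - log_normalizer
    return K, h, g


def canonical_value(x, K, h, g):
    """Evaluate exp(-0.5 x^T K x + h^T x + g) at a single point x."""
    x = np.asarray(x, dtype=float).reshape(-1, 1)
    return np.exp(-0.5 * (x.T @ K @ x) + h.T @ x + g).item()


# Assumed example parameters
mean = [1.0, -1.0]
cov = [[2.0, 0.5], [0.5, 1.0]]
K, h, g = gaussian_to_canonical(mean, cov)
print(canonical_value([0.3, 0.2], K, h, g))
```

Expanding the quadratic form `(x-μ)^T Σ^{-1} (x-μ)` shows why the three terms line up: the `x^T K x` term carries the curvature, `h^T x` the linear part, and `g` collects everything that does not depend on `x`.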

[

"Similar to the JointGaussianDistribution class, the CanonicalFactor class is also derived from the ContinuousFactor class but with its own implementations of the methods required for the sum-product algorithms that are much more efficient than its parent class methods. Let us have a look at the API of a few methods in this class.",

"_____no_output_____"

]

],

[

[

"from pgmpy.factors.continuous import CanonicalDistribution\n\nphi1 = CanonicalDistribution(['x1', 'x2', 'x3'],\n np.array([[1, -1, 0], [-1, 4, -2], [0, -2, 4]]),\n np.array([[1], [4], [-1]]), -2)\nphi2 = CanonicalDistribution(['x1', 'x2'], np.array([[3, -2], [-2, 4]]),\n np.array([[5], [-1]]), 1)\n\nphi3 = phi1 * phi2\nphi3.variables",

"_____no_output_____"

],

[

"phi3.h",

"_____no_output_____"

],

[

"phi3.K",

"_____no_output_____"

],

[

"phi3.g",

"_____no_output_____"

]

],
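The product `phi1 * phi2` computed above needs no matrix inversion: once both factors are extended to the union of their scopes (padding with zeros), the K matrices, h vectors and g scalars simply add. The following is a minimal NumPy sketch of that rule; `canonical_product` is a hypothetical helper written for this example, not the pgmpy implementation.

```python
import numpy as np


def canonical_product(vars1, K1, h1, g1, vars2, K2, h2, g2):
    """Multiply two canonical forms by adding their zero-padded parameters."""
    # Union of the scopes, keeping the order of the first factor
    scope = list(vars1) + [v for v in vars2 if v not in vars1]
    n = len(scope)
    K = np.zeros((n, n))
    h = np.zeros((n, 1))
    for names, K_part, h_part in ((vars1, K1, h1), (vars2, K2, h2)):
        idx = [scope.index(v) for v in names]
        # Add this factor's parameters into the rows/columns of its variables
        K[np.ix_(idx, idx)] += np.asarray(K_part, dtype=float)
        h[idx] += np.asarray(h_part, dtype=float).reshape(-1, 1)
    return scope, K, h, g1 + g2


# Same parameters as phi1 and phi2 in the cell above
scope, K, h, g = canonical_product(
    ['x1', 'x2', 'x3'], [[1, -1, 0], [-1, 4, -2], [0, -2, 4]], [[1], [4], [-1]], -2,
    ['x1', 'x2'], [[3, -2], [-2, 4]], [[5], [-1]], 1,
)
print(scope)
print(K)
print(h.ravel(), g)
```

Because the exponents of the two factors add when the factors are multiplied, the product stays in canonical form, which is what makes this representation convenient for message passing.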

[

[

"This class also has a method, to_joint_gaussian, to convert the canonical representation back into the joint Gaussian distribution.",

"_____no_output_____"

]

],

[

[

"phi = CanonicalDistribution(['x1', 'x2'], np.array([[3, -2], [-2, 4]]),\n np.array([[5], [-1]]), 1)\njgd = phi.to_joint_gaussian()\njgd.variables",

"_____no_output_____"

],

[

"jgd.covariance",

"_____no_output_____"

],

[

"jgd.mean",

"_____no_output_____"

]

],

[

[

"### Linear Gaussian CPD",

"_____no_output_____"

],

[

"A linear Gaussian conditional probability distribution is defined on a continuous variable whose parents are all continuous as well. The mean of this variable is a linear function of the values of its parents, and its variance does not depend on them.\n\nFor example,\n$$\nP(Y | x_1, x_2, x_3) = N(β_1x_1 + β_2x_2 + β_3x_3 + β_0 ; σ^2)\n$$\n\nLet Y be a linear Gaussian of its parents X1,...,Xk:\n$$\np(Y | x) = N(β_0 + β^T x ; σ^2)\n$$\n\nAssume that X1,...,Xk are jointly Gaussian with distribution N(μ; Σ). Then the distribution of Y is a normal distribution p(Y) where:\n$$\nμ_Y = β_0 + β^Tμ\n$$\n$$\nσ^2_Y = σ^2 + β^TΣβ\n$$\n\nThe joint distribution over {X, Y} is a normal distribution where:\n\n$$Cov[X_i; Y] = \\sum_{j=1}^{k} β_jΣ_{i,j}$$\n\nFor its representation, pgmpy has a class named LinearGaussianCPD in the module pgmpy.factors.continuous. To instantiate an object of this class, one needs to provide a variable name, the value of the beta_0 term, the variance, a list of the parent variable names and a list of the coefficient values of the linear equation (beta_vector), where the list of parent variable names and the beta_vector list are optional and default to None.",

"_____no_output_____"

]

],
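The mean, variance and covariance formulas above can be applied one variable at a time to build the joint Gaussian of a whole network. Below is a minimal pure-Python sketch under stated assumptions: `linear_gaussian_joint` is a hypothetical helper, each CPD is given as `(name, beta_0, variance, {parent: coefficient})`, and the CPDs are listed in topological order. The example chain x1 -> x2 -> x3 is chosen for illustration.

```python
def linear_gaussian_joint(cpds):
    """Build the joint mean and covariance from linear Gaussian CPDs.

    cpds must be in topological order (parents before children).
    Returns (names, mean, cov) where mean maps name -> float and
    cov maps (name_a, name_b) -> float.
    """
    names, mean, cov = [], {}, {}
    for name, beta_0, variance, parents in cpds:
        # mu_Y = beta_0 + beta^T mu
        mean[name] = beta_0 + sum(c * mean[p] for p, c in parents.items())
        # Cov[X_i; Y] = sum_j beta_j * Sigma_{i,j} for every earlier variable X_i
        for other in names:
            value = sum(c * cov[(other, p)] for p, c in parents.items())
            cov[(other, name)] = cov[(name, other)] = value
        # sigma_Y^2 = sigma^2 + beta^T Sigma beta
        cov[(name, name)] = variance + sum(
            ci * cj * cov[(pi, pj)]
            for pi, ci in parents.items()
            for pj, cj in parents.items()
        )
        names.append(name)
    return names, mean, cov


# Assumed example: x1 ~ N(1; 4), x2 ~ N(-5 + 0.5*x1; 4), x3 ~ N(4 - x2; 3)
cpds = [
    ("x1", 1, 4, {}),
    ("x2", -5, 4, {"x1": 0.5}),
    ("x3", 4, 3, {"x2": -1}),
]
names, mean, cov = linear_gaussian_joint(cpds)
print([mean[n] for n in names])
print([[cov[(a, b)] for b in names] for a in names])
```

For this chain the resulting covariance matrix is the same 3x3 matrix used for the joint Gaussian examples earlier in the notebook, which illustrates that a linear Gaussian network and a joint Gaussian are two descriptions of the same distribution.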

[

[

"# For P(Y| X1, X2, X3) = N(-2x1 + 3x2 + 7x3 + 0.2; 9.6)\nfrom pgmpy.factors.continuous import LinearGaussianCPD\ncpd = LinearGaussianCPD('Y', [0.2, -2, 3, 7], 9.6, ['X1', 'X2', 'X3'])\nprint(cpd)",

"P(Y | X1, X2, X3) = N(-2*X1 + 3*X2 + 7*X3 + 0.2; 9.6)\n"

]

],

[

[

"A Gaussian Bayesian network is defined as a network all of whose variables are continuous, and where all of the CPDs are linear Gaussians. These networks are of particular interest because they are an alternative representation of the joint Gaussian distribution.\n\nThese networks are implemented as the LinearGaussianBayesianNetwork class in the module pgmpy.models.continuous. This class is a subclass of the BayesianModel class in pgmpy.models and inherits most of its methods. It has a special method, to_joint_gaussian, that returns an equivalent JointGaussianDistribution object for the model.",

"_____no_output_____"

]

],

[

[

"from pgmpy.models import LinearGaussianBayesianNetwork\n\nmodel = LinearGaussianBayesianNetwork([('x1', 'x2'), ('x2', 'x3')])\ncpd1 = LinearGaussianCPD('x1', [1], 4)\ncpd2 = LinearGaussianCPD('x2', [-5, 0.5], 4, ['x1'])\ncpd3 = LinearGaussianCPD('x3', [4, -1], 3, ['x2'])\n# This is a hack due to a bug in pgmpy (LinearGaussianCPD\n# doesn't have `variables` attribute but `add_cpds` function\n# wants to check that...)\ncpd1.variables = [*cpd1.evidence, cpd1.variable]\ncpd2.variables = [*cpd2.evidence, cpd2.variable]\ncpd3.variables = [*cpd3.evidence, cpd3.variable]\nmodel.add_cpds(cpd1, cpd2, cpd3)\njgd = model.to_joint_gaussian()\njgd.variables",

"_____no_output_____"

],

[

"jgd.mean",

"_____no_output_____"

],

[

"jgd.covariance",

"_____no_output_____"

]

]

] |

[

"markdown",

"code",

"markdown",

"code",

"markdown",

"code",

"markdown",

"code",

"markdown",

"code",

"markdown",

"code",

"markdown",

"code",

"markdown",

"code",

"markdown",

"code"

] |

[

[

"markdown"

],

[

"code"

],

[

"markdown",

"markdown",

"markdown",

"markdown",

"markdown"

],

[

"code",

"code"

],

[

"markdown"

],

[

"code",

"code",

"code",

"code",

"code",

"code"

],

[

"markdown",

"markdown",

"markdown",

"markdown",

"markdown"

],

[

"code",

"code",

"code",

"code"

],

[

"markdown"

],

[

"code",

"code",

"code"

],

[

"markdown",

"markdown",

"markdown",

"markdown",

"markdown",

"markdown",

"markdown",

"markdown"

],

[

"code",

"code",

"code",

"code"

],

[

"markdown"

],

[

"code",

"code",

"code"

],

[

"markdown",

"markdown"

],

[

"code"

],

[

"markdown"

],

[

"code",

"code",

"code"

]

] |

4a9defcfefcaba64a116ec59dd62154fe9545681

| 208,737 |

ipynb

|

Jupyter Notebook

|

examples/paper_examples/hyperopt-fashion-lgb.ipynb

|

jpjuvo/wideboost

|

ba2ec3bc62d70aecce3c2728197ac6abd011a434

|

[

"MIT"

] | 11 |

2020-07-22T18:40:03.000Z

|

2022-02-18T13:43:02.000Z

|

examples/paper_examples/hyperopt-fashion-lgb.ipynb

|

jpjuvo/wideboost

|

ba2ec3bc62d70aecce3c2728197ac6abd011a434

|

[

"MIT"

] | 3 |

2020-10-31T01:45:27.000Z

|

2021-04-02T07:37:21.000Z

|

examples/paper_examples/hyperopt-fashion-lgb.ipynb

|

jpjuvo/wideboost

|

ba2ec3bc62d70aecce3c2728197ac6abd011a434

|

[

"MIT"

] | 2 |

2020-09-15T15:13:36.000Z

|

2020-10-28T06:11:19.000Z

| 87.337657 | 366 | 0.401663 |

[

[

[

"import numpy as np\nimport lightgbm as lgb\nfrom wideboost.wrappers import wlgb\n\nimport tensorflow_datasets as tfds\nfrom matplotlib import pyplot as plt\n\n(ds_train, ds_test), ds_info = tfds.load(\n 'fashion_mnist',\n split=['train', 'test'],\n shuffle_files=True,\n as_supervised=True,\n with_info=True,\n)\n\nfor i in ds_train.batch(60000):\n a = i\n break\n \nfor i in ds_test.batch(60000):\n b = i\n break",

"_____no_output_____"

],

[

"xtrain = a[0].numpy().reshape([-1,28*28])\nytrain = a[1].numpy()\n\nxtest = b[0].numpy().reshape([-1,28*28])\nytest = b[1].numpy()\n\n#dtrain = xgb.DMatrix(xtrain,label=ytrain)\n#dtest = xgb.DMatrix(xtest,label=ytest)\n\ntrain_data = lgb.Dataset(xtrain, label=ytrain)\ntest_data = lgb.Dataset(xtest, label=ytest)",

"_____no_output_____"

],

[

"from hyperopt import fmin, tpe, hp, STATUS_OK, space_eval\n\nbest_val = 1.0\n\ndef objective(param):\n global best_val\n #watchlist = [(dtrain,'train'),(dtest,'test')]\n ed1_results = dict()\n print(param)\n param['num_leaves'] = round(param['num_leaves']+1)\n param['min_data_in_leaf'] = round(param['min_data_in_leaf'])\n wbst = lgb.train(param,\n train_data,\n num_boost_round=20,\n valid_sets=test_data,\n evals_result=ed1_results)\n output = min(ed1_results['valid_0']['multi_error'])\n \n if output < best_val:\n print(\"NEW BEST VALUE!\")\n best_val = output\n \n return {'loss': output, 'status': STATUS_OK }\n\nspc = {\n 'objective': hp.choice('objective',['multiclass']),\n 'metric':hp.choice('metric',['multi_error']),\n 'num_class':hp.choice('num_class',[10]),\n 'learning_rate': hp.loguniform('learning_rate', -7, 0),\n 'num_leaves' : hp.qloguniform('num_leaves', 0, 7, 1),\n 'feature_fraction': hp.uniform('feature_fraction', 0.5, 1),\n 'bagging_fraction': hp.uniform('bagging_fraction', 0.5, 1),\n 'min_data_in_leaf': hp.qloguniform('min_data_in_leaf', 0, 6, 1),\n 'min_sum_hessian_in_leaf': hp.loguniform('min_sum_hessian_in_leaf', -16, 5),\n 'lambda_l1': hp.choice('lambda_l1', [0, hp.loguniform('lambda_l1_positive', -16, 2)]),\n 'lambda_l2': hp.choice('lambda_l2', [0, hp.loguniform('lambda_l2_positive', -16, 2)])\n}\n\n\nbest = fmin(objective,\n space=spc,\n algo=tpe.suggest,\n max_evals=100)",

"{'bagging_fraction': 0.7251796751234882, 'feature_fraction': 0.5933005337725001, 'lambda_l1': 1.3372979090365175e-06, 'lambda_l2': 0, 'learning_rate': 0.533315059142865, 'metric': 'multi_error', 'min_data_in_leaf': 1.0, 'min_sum_hessian_in_leaf': 0.00026298877164697437, 'num_class': 10, 'num_leaves': 33.0, 'objective': 'multiclass'}\n[1]\tvalid_0's multi_error: 0.2029 \n[2]\tvalid_0's multi_error: 0.1716 \n[3]\tvalid_0's multi_error: 0.1623 \n[4]\tvalid_0's multi_error: 0.1593 \n[5]\tvalid_0's multi_error: 0.155 \n[6]\tvalid_0's multi_error: 0.152 \n[7]\tvalid_0's multi_error: 0.1489 \n[8]\tvalid_0's multi_error: 0.1466 \n[9]\tvalid_0's multi_error: 0.1444 \n[10]\tvalid_0's multi_error: 0.1431 \n[11]\tvalid_0's multi_error: 0.1416 \n[12]\tvalid_0's multi_error: 0.1377 \n[13]\tvalid_0's multi_error: 0.1379 \n[14]\tvalid_0's multi_error: 0.1354 \n[15]\tvalid_0's multi_error: 0.1354 \n[16]\tvalid_0's multi_error: 0.1334 \n[17]\tvalid_0's multi_error: 0.1318 \n[18]\tvalid_0's multi_error: 0.1309 \n[19]\tvalid_0's multi_error: 0.1297 \n[20]\tvalid_0's multi_error: 0.1292 \nNEW BEST VALUE! 
\n{'bagging_fraction': 0.9133916565984483, 'feature_fraction': 0.687387924389055, 'lambda_l1': 0, 'lambda_l2': 0, 'learning_rate': 0.0013938906142289271, 'metric': 'multi_error', 'min_data_in_leaf': 12.0, 'min_sum_hessian_in_leaf': 0.054411653079058195, 'num_class': 10, 'num_leaves': 187.0, 'objective': 'multiclass'}\n[1]\tvalid_0's multi_error: 0.1746 \n[2]\tvalid_0's multi_error: 0.1573 \n[3]\tvalid_0's multi_error: 0.1493 \n[4]\tvalid_0's multi_error: 0.1444 \n[5]\tvalid_0's multi_error: 0.1414 \n[6]\tvalid_0's multi_error: 0.1399 \n[7]\tvalid_0's multi_error: 0.1387 \n[8]\tvalid_0's multi_error: 0.1391 \n[9]\tvalid_0's multi_error: 0.1376 \n[10]\tvalid_0's multi_error: 0.1378 \n[11]\tvalid_0's multi_error: 0.1375 \n[12]\tvalid_0's multi_error: 0.1368 \n[13]\tvalid_0's multi_error: 0.1359 \n[14]\tvalid_0's multi_error: 0.1356 \n[15]\tvalid_0's multi_error: 0.1341 \n[16]\tvalid_0's multi_error: 0.1346 \n[17]\tvalid_0's multi_error: 0.1338 \n[18]\tvalid_0's multi_error: 0.1339 \n[19]\tvalid_0's multi_error: 0.1324 \n[20]\tvalid_0's multi_error: 0.1332 \n{'bagging_fraction': 0.8784490298751432, 'feature_fraction': 0.5754265828265155, 'lambda_l1': 0, 'lambda_l2': 0, 'learning_rate': 0.001544459969705883, 'metric': 'multi_error', 'min_data_in_leaf': 350.0, 'min_sum_hessian_in_leaf': 5.973742769148399e-06, 'num_class': 10, 'num_leaves': 9.0, 'objective': 'multiclass'}\n[1]\tvalid_0's multi_error: 0.24 \n[2]\tvalid_0's multi_error: 0.2234 \n[3]\tvalid_0's multi_error: 0.2158 \n[4]\tvalid_0's multi_error: 0.2095 \n[5]\tvalid_0's multi_error: 0.206 \n[6]\tvalid_0's multi_error: 0.2031 \n[7]\tvalid_0's multi_error: 0.2034 \n[8]\tvalid_0's multi_error: 0.2006 \n[9]\tvalid_0's multi_error: 0.1986 \n[10]\tvalid_0's multi_error: 0.1996 \n[11]\tvalid_0's multi_error: 0.1998 \n[12]\tvalid_0's multi_error: 0.1981 \n[13]\tvalid_0's multi_error: 0.1984 \n[14]\tvalid_0's multi_error: 0.1988 \n[15]\tvalid_0's multi_error: 0.2 \n[16]\tvalid_0's multi_error: 0.1992 \n[17]\tvalid_0's 
multi_error: 0.1977 \n[18]\tvalid_0's multi_error: 0.1989 \n[19]\tvalid_0's multi_error: 0.1984 \n[20]\tvalid_0's multi_error: 0.1973 \n{'bagging_fraction': 0.933326869280898, 'feature_fraction': 0.8920093644678171, 'lambda_l1': 3.1562199243279184e-07, 'lambda_l2': 0, 'learning_rate': 0.8471486601007923, 'metric': 'multi_error', 'min_data_in_leaf': 92.0, 'min_sum_hessian_in_leaf': 0.018226830691217735, 'num_class': 10, 'num_leaves': 395.0, 'objective': 'multiclass'}\n[1]\tvalid_0's multi_error: 0.1827 \n[2]\tvalid_0's multi_error: 0.166 \n[3]\tvalid_0's multi_error: 0.1538 \n[4]\tvalid_0's multi_error: 0.1448 \n[5]\tvalid_0's multi_error: 0.142 \n[6]\tvalid_0's multi_error: 0.1393 \n[7]\tvalid_0's multi_error: 0.1376 \n[8]\tvalid_0's multi_error: 0.1346 \n[9]\tvalid_0's multi_error: 0.1314 \n[10]\tvalid_0's multi_error: 0.13 \n[11]\tvalid_0's multi_error: 0.1285 \n[12]\tvalid_0's multi_error: 0.1258 \n[13]\tvalid_0's multi_error: 0.1264 \n[14]\tvalid_0's multi_error: 0.1254 \n[15]\tvalid_0's multi_error: 0.1231 \n[16]\tvalid_0's multi_error: 0.1209 \n[17]\tvalid_0's multi_error: 0.1211 \n[18]\tvalid_0's multi_error: 0.1187 \n[19]\tvalid_0's multi_error: 0.1205 \n[20]\tvalid_0's multi_error: 0.1192 \nNEW BEST VALUE! 
\n{'bagging_fraction': 0.9280201314348633, 'feature_fraction': 0.9516881941592565, 'lambda_l1': 0, 'lambda_l2': 0, 'learning_rate': 0.061443689698515325, 'metric': 'multi_error', 'min_data_in_leaf': 266.0, 'min_sum_hessian_in_leaf': 0.019968563796704895, 'num_class': 10, 'num_leaves': 3.0, 'objective': 'multiclass'}\n[1]\tvalid_0's multi_error: 0.324 \n[2]\tvalid_0's multi_error: 0.3025 \n[3]\tvalid_0's multi_error: 0.2738 \n[4]\tvalid_0's multi_error: 0.2664 \n[5]\tvalid_0's multi_error: 0.2588 \n[6]\tvalid_0's multi_error: 0.253 \n[7]\tvalid_0's multi_error: 0.2522 \n[8]\tvalid_0's multi_error: 0.2509 \n[9]\tvalid_0's multi_error: 0.249 \n[10]\tvalid_0's multi_error: 0.2475 \n[11]\tvalid_0's multi_error: 0.2426 \n[12]\tvalid_0's multi_error: 0.2398 \n[13]\tvalid_0's multi_error: 0.2382 \n"

],
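The search space above mixes `hp.loguniform` and `hp.qloguniform`. As documented by hyperopt, `loguniform(low, high)` returns `exp(uniform(low, high))` and `qloguniform(low, high, q)` returns `round(exp(uniform(low, high)) / q) * q`. The pure-Python sketch below mimics those documented semantics with the standard library only; it is an illustration, not hyperopt's implementation, and the helper names are assumptions.

```python
import math
import random


def sample_loguniform(rng, low, high):
    """exp(uniform(low, high)): uniform on a log scale."""
    return math.exp(rng.uniform(low, high))


def sample_qloguniform(rng, low, high, q):
    """round(exp(uniform(low, high)) / q) * q: log-uniform, snapped to multiples of q."""
    return round(math.exp(rng.uniform(low, high)) / q) * q


rng = random.Random(0)

# learning_rate ~ loguniform(-7, 0) lies in [exp(-7), 1]
learning_rates = [sample_loguniform(rng, -7, 0) for _ in range(1000)]

# num_leaves ~ qloguniform(0, 7, 1), then shifted by one as in objective()
num_leaves = [round(sample_qloguniform(rng, 0, 7, 1) + 1) for _ in range(1000)]

print(min(learning_rates), max(learning_rates))
print(min(num_leaves), max(num_leaves))
```

This also suggests why `objective` adds 1 to `num_leaves` and rounds both integer-like parameters: the quantized samplers return floats, and LightGBM requires `num_leaves` to be greater than 1.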

[

"print(best_val)\nprint(space_eval(spc, best))",

"0.112\n{'bagging_fraction': 0.6169757023287703, 'feature_fraction': 0.8932740248835392, 'lambda_l1': 0.09422621802317309, 'lambda_l2': 0, 'learning_rate': 0.832326366852246, 'metric': 'multi_error', 'min_data_in_leaf': 51.0, 'min_sum_hessian_in_leaf': 8.927831603309363e-07, 'num_class': 10, 'num_leaves': 682.0, 'objective': 'multiclass'}\n"

]

]

] |

[

"code"

] |

[

[

"code",

"code",

"code",

"code"

]

] |

4a9dfc38d9b6acf114cd523b590b198d4599fa54

| 14,673 |

ipynb

|

Jupyter Notebook

|

intro-to-pytorch/Part 1 - Tensors in PyTorch (Exercises).ipynb

|

nduas77/deep-learning-v2-pytorch

|

0f78d219a9a4728a4e3c6d00f79e89606f682f82

|

[

"MIT"

] | 2 |

2018-12-30T13:55:06.000Z

|

2019-05-31T06:51:17.000Z

|

intro-to-pytorch/Part 1 - Tensors in PyTorch (Exercises).ipynb

|

nduas77/deep-learning-v2-pytorch

|

0f78d219a9a4728a4e3c6d00f79e89606f682f82

|

[

"MIT"

] | null | null | null |

intro-to-pytorch/Part 1 - Tensors in PyTorch (Exercises).ipynb

|

nduas77/deep-learning-v2-pytorch

|

0f78d219a9a4728a4e3c6d00f79e89606f682f82

|

[

"MIT"

] | null | null | null | 42.407514 | 674 | 0.624617 |

[

[

[

"empty"

]

]

] |

[

"empty"

] |

[

[

"empty"

]

] |

4a9e0cc0b381c7fafe0d699a40cc827c1d127e9a

| 50,527 |

ipynb

|

Jupyter Notebook

|

site/ja/tutorials/load_data/text.ipynb

|

phoenix-fork-tensorflow/docs-l10n

|

2287738c22e3e67177555e8a41a0904edfcf1544

|

[

"Apache-2.0"

] | 491 |

2020-01-27T19:05:32.000Z

|

2022-03-31T08:50:44.000Z

|

site/ja/tutorials/load_data/text.ipynb

|

phoenix-fork-tensorflow/docs-l10n

|

2287738c22e3e67177555e8a41a0904edfcf1544

|

[

"Apache-2.0"

] | 511 |

2020-01-27T22:40:05.000Z

|

2022-03-21T08:40:55.000Z

|

site/ja/tutorials/load_data/text.ipynb

|

phoenix-fork-tensorflow/docs-l10n

|

2287738c22e3e67177555e8a41a0904edfcf1544

|

[

"Apache-2.0"

] | 627 |

2020-01-27T21:49:52.000Z

|

2022-03-28T18:11:50.000Z

| 28.988526 | 408 | 0.526075 |

[

[

[

"##### Copyright 2018 The TensorFlow Authors.\n",

"_____no_output_____"

]

],

[

[

"#@title Licensed under the Apache License, Version 2.0 (the \"License\");\n# you may not use this file except in compliance with the License.\n# You may obtain a copy of the License at\n#\n# https://www.apache.org/licenses/LICENSE-2.0\n#\n# Unless required by applicable law or agreed to in writing, software\n# distributed under the License is distributed on an \"AS IS\" BASIS,\n# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.\n# See the License for the specific language governing permissions and\n# limitations under the License.",

"_____no_output_____"

]

],

[

[

"# tf.data を使ったテキストの読み込み",

"_____no_output_____"

],

[

"<table class=\"tfo-notebook-buttons\" align=\"left\">\n <td><a target=\"_blank\" href=\"https://www.tensorflow.org/tutorials/load_data/text\"><img src=\"https://www.tensorflow.org/images/tf_logo_32px.png\">TensorFlow.org で表示</a></td>\n <td> <a target=\"_blank\" href=\"https://colab.research.google.com/github/tensorflow/docs-l10n/blob/master/site/ja/tutorials/load_data/text.ipynb\"><img src=\"https://www.tensorflow.org/images/colab_logo_32px.png\">Google Colab で実行</a> </td>\n <td> <a target=\"_blank\" href=\"https://github.com/tensorflow/docs-l10n/blob/master/site/ja/tutorials/load_data/text.ipynb\"><img src=\"https://www.tensorflow.org/images/GitHub-Mark-32px.png\">GitHub でソースを表示</a> </td>\n <td> <a href=\"https://storage.googleapis.com/tensorflow_docs/docs-l10n/site/ja/tutorials/load_data/text.ipynb\"><img src=\"https://www.tensorflow.org/images/download_logo_32px.png\">ノートブックをダウンロード</a> </td>\n</table>",

"_____no_output_____"

],

[

"このチュートリアルでは、テキストを読み込んで前処理する 2 つの方法を紹介します。\n\n- まず、Keras ユーティリティとレイヤーを使用します。 TensorFlow を初めて使用する場合は、これらから始める必要があります。\n\n- このチュートリアルでは、`tf.data.TextLineDataset` を使ってテキストファイルからサンプルを読み込む方法を例示します。`TextLineDataset` は、テキストファイルからデータセットを作成するために設計されています。この中では、元のテキストファイルの一行一行がサンプルです。これは、(たとえば、詩やエラーログのような) 基本的に行ベースのテキストデータを扱うのに便利でしょう。",

"_____no_output_____"

]

],

[

[

"# Be sure you're using the stable versions of both tf and tf-text, for binary compatibility.\n!pip uninstall -y tensorflow tf-nightly keras\n\n!pip install -q -U tf-nightly\n!pip install -q -U tensorflow-text-nightly",

"_____no_output_____"

],

[

"import collections\nimport pathlib\nimport re\nimport string\n\nimport tensorflow as tf\n\nfrom tensorflow.keras import layers\nfrom tensorflow.keras import losses\nfrom tensorflow.keras import preprocessing\nfrom tensorflow.keras import utils\nfrom tensorflow.keras.layers.experimental.preprocessing import TextVectorization\n\nimport tensorflow_datasets as tfds\nimport tensorflow_text as tf_text",

"_____no_output_____"

]

],

[

[

"## 例 1: StackOverflow の質問のタグを予測する\n\n最初の例として、StackOverflow からプログラミングの質問のデータセットをダウンロードします。それぞれの質問 (「ディクショナリを値で並べ替えるにはどうすればよいですか?」) は、1 つのタグ (`Python`、`CSharp`、`JavaScript`、または`Java`) でラベルされています。このタスクでは、質問のタグを予測するモデルを開発します。これは、マルチクラス分類の例です。マルチクラス分類は、重要で広く適用できる機械学習の問題です。",

"_____no_output_____"

],

[

"### データセットをダウンロードして調査する\n\n次に、データセットをダウンロードして、ディレクトリ構造を調べます。",

"_____no_output_____"

]

],

[

[

"data_url = 'https://storage.googleapis.com/download.tensorflow.org/data/stack_overflow_16k.tar.gz'\ndataset_dir = utils.get_file(\n origin=data_url,\n untar=True,\n cache_dir='stack_overflow',\n cache_subdir='')\n\ndataset_dir = pathlib.Path(dataset_dir).parent",

"_____no_output_____"

],

[

"list(dataset_dir.iterdir())",

"_____no_output_____"

],

[

"train_dir = dataset_dir/'train'\nlist(train_dir.iterdir())",

"_____no_output_____"

]

],

[

[

"`train/csharp`、`train/java`, `train/python` および `train/javascript` ディレクトリには、多くのテキストファイルが含まれています。それぞれが Stack Overflow の質問です。ファイルを出力してデータを調べます。",

"_____no_output_____"

]

],

[

[

"sample_file = train_dir/'python/1755.txt'\nwith open(sample_file) as f:\n print(f.read())",

"_____no_output_____"

]

],

[

[

"### データセットを読み込む\n\n次に、データをディスクから読み込み、トレーニングに適した形式に準備します。これを行うには、[text_dataset_from_directory](https://www.tensorflow.org/api_docs/python/tf/keras/preprocessing/text_dataset_from_directory) ユーティリティを使用して、ラベル付きの `tf.data.Dataset` を作成します。これは、入力パイプラインを構築するための強力なツールのコレクションです。\n\n`preprocessing.text_dataset_from_directory` は、次のようなディレクトリ構造を想定しています。\n\n```\ntrain/\n...csharp/\n......1.txt\n......2.txt\n...java/\n......1.txt\n......2.txt\n...javascript/\n......1.txt\n......2.txt\n...python/\n......1.txt\n......2.txt\n```",

"_____no_output_____"

],

[

"機械学習実験を実行するときは、データセットを[トレーニング](https://developers.google.com/machine-learning/glossary#training_set)、[検証](https://developers.google.com/machine-learning/glossary#validation_set)、および、[テスト](https://developers.google.com/machine-learning/glossary#test-set)の 3 つに分割することをお勧めします。Stack Overflow データセットはすでにトレーニングとテストに分割されていますが、検証セットがありません。以下の `validation_split` 引数を使用して、トレーニングデータの 80:20 分割を使用して検証セットを作成します。",

"_____no_output_____"

]

],

[

[

"batch_size = 32\nseed = 42\n\nraw_train_ds = preprocessing.text_dataset_from_directory(\n train_dir,\n batch_size=batch_size,\n validation_split=0.2,\n subset='training',\n seed=seed)",

"_____no_output_____"

]

],

[

[

"上記のように、トレーニングフォルダには 8,000 の例があり、そのうち 80% (6,400 件) をトレーニングに使用します。この後で見ていきますが、`tf.data.Dataset` を直接 `model.fit` に渡すことでモデルをトレーニングできます。まず、データセットを繰り返し処理し、いくつかの例を出力します。\n\n注意: 分類問題の難易度を上げるために、データセットの作成者は、プログラミングの質問で、*Python*、*CSharp*、*JavaScript*、*Java* という単語を *blank* に置き換えました。",

"_____no_output_____"

]

],

[

[

"for text_batch, label_batch in raw_train_ds.take(1):\n for i in range(10):\n print(\"Question: \", text_batch.numpy()[i])\n print(\"Label:\", label_batch.numpy()[i])",

"_____no_output_____"

]

],

[

[

"ラベルは、`0`、`1`、`2` または `3` です。これらのどれがどの文字列ラベルに対応するかを確認するには、データセットの `class_names` プロパティを確認します。\n",

"_____no_output_____"

]

],

[

[

"for i, label in enumerate(raw_train_ds.class_names):\n print(\"Label\", i, \"corresponds to\", label)",

"_____no_output_____"

]

],

[

[

"次に、検証およびテスト用データセットを作成します。トレーニング用セットの残りの 1,600 件のレビューを検証に使用します。\n\n注意: `validation_split` および `subset` 引数を使用する場合は、必ずランダムシードを指定するか、`shuffle=False`を渡して、検証とトレーニング分割に重複がないようにします。",

"_____no_output_____"

]

],
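To illustrate why the seed matters, here is a minimal plain-Python sketch. It is not the Keras implementation, and `split_indices` is a hypothetical helper introduced only for this illustration. It shuffles example indices with a fixed seed and assigns the last 20% to validation. Because both calls reuse the same seed, they see the same shuffled order, so the two subsets cannot overlap.

```python
import random


def split_indices(num_examples, validation_split, subset, seed):
    """Return the example indices belonging to the requested subset.

    Hypothetical helper: shuffles indices deterministically with `seed`,
    then keeps the first part for training and the rest for validation.
    """
    indices = list(range(num_examples))
    random.Random(seed).shuffle(indices)
    split_at = num_examples - int(num_examples * validation_split)
    if subset == "training":
        return indices[:split_at]
    return indices[split_at:]


# Two separate calls, mirroring the two dataset-creation calls in the tutorial.
train_idx = split_indices(8000, 0.2, "training", seed=42)
val_idx = split_indices(8000, 0.2, "validation", seed=42)

print(len(train_idx), len(val_idx))
print(set(train_idx).isdisjoint(val_idx))
```

If the two calls used different seeds, each would shuffle the indices differently, and some examples could land in both subsets.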

[

[

"raw_val_ds = preprocessing.text_dataset_from_directory(\n train_dir,\n batch_size=batch_size,\n validation_split=0.2,\n subset='validation',\n seed=seed)",

"_____no_output_____"

],

[

"test_dir = dataset_dir/'test'\nraw_test_ds = preprocessing.text_dataset_from_directory(\n test_dir, batch_size=batch_size)",

"_____no_output_____"

]

],

[

[

"### トレーニング用データセットを準備する",

"_____no_output_____"

],

[

"注意: このセクションで使用される前処理 API は、TensorFlow 2.3 では実験的なものであり、変更される可能性があります。",

"_____no_output_____"

],

[

"次に、`preprocessing.TextVectorization` レイヤーを使用して、データを標準化、トークン化、およびベクトル化します。\n\n- 標準化とは、テキストを前処理することを指します。通常、句読点や HTML 要素を削除して、データセットを簡素化します。\n\n- トークン化とは、文字列をトークンに分割することです(たとえば、空白で分割することにより、文を個々の単語に分割します)。\n\n- ベクトル化とは、トークンを数値に変換して、ニューラルネットワークに入力できるようにすることです。\n\nこれらのタスクはすべて、このレイヤーで実行できます。これらの詳細については、[API doc](https://www.tensorflow.org/api_docs/python/tf/keras/layers/experimental/preprocessing/TextVectorization) をご覧ください。\n\n- デフォルトの標準化では、テキストが小文字に変換され、句読点が削除されます。\n\n- デフォルトのトークナイザーは空白で分割されます。\n\n- デフォルトのベクトル化モードは `int` です。これは整数インデックスを出力します(トークンごとに1つ)。このモードは、語順を考慮したモデルを構築するために使用できます。`binary` などの他のモードを使用して、bag-of-word モデルを構築することもできます。\n\nこれらについてさらに学ぶために、2 つのモードを構築します。まず、`binary` モデルを使用して、bag-of-words モデルを構築します。次に、1D ConvNet で `int` モードを使用します。",

"_____no_output_____"

]

],
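The three steps described above can be sketched in plain Python. This is an illustration of the idea only, not how `TextVectorization` is implemented: all function names are hypothetical, and reserving index `0` for unknown tokens is a simplifying assumption of this sketch.

```python
import re
import string
from collections import Counter


def standardize(text):
    """Lowercase the text and strip ASCII punctuation."""
    return re.sub(f"[{re.escape(string.punctuation)}]", "", text.lower())


def tokenize(text):
    """Standardize, then split on whitespace."""
    return standardize(text).split()


def build_vocab(texts, max_tokens):
    """Map the most frequent tokens to indices 1..max_tokens-1 (0 = unknown)."""
    counts = Counter(tok for t in texts for tok in tokenize(t))
    most_common = [tok for tok, _ in counts.most_common(max_tokens - 1)]
    return {tok: i + 1 for i, tok in enumerate(most_common)}


def to_int(text, vocab):
    """'int' style: one index per token, so word order is preserved."""
    return [vocab.get(tok, 0) for tok in tokenize(text)]


def to_binary(text, vocab, max_tokens):
    """'binary' style: a fixed-size 0/1 vector, so word order is discarded."""
    vec = [0] * max_tokens
    for idx in to_int(text, vocab):
        vec[idx] = 1
    return vec


corpus = ["How do I sort a list?", "Sort a dictionary by value!"]
vocab = build_vocab(corpus, max_tokens=8)

print(tokenize(corpus[0]))
print(to_int(corpus[1], vocab))
print(to_binary(corpus[1], vocab, max_tokens=8))
```

The `int` output grows with the length of the input, while the `binary` output always has `max_tokens` entries, which is why only the former needs an explicit sequence length.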

[

[

"VOCAB_SIZE = 10000\n\nbinary_vectorize_layer = TextVectorization(\n max_tokens=VOCAB_SIZE,\n output_mode='binary')",

"_____no_output_____"

]

],

[

[

"`int` の場合、最大語彙サイズに加えて、明示的な最大シーケンス長を設定する必要があります。これにより、レイヤーはシーケンスを正確に sequence_length 値にパディングまたは切り捨てます。",

"_____no_output_____"

]

],
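As a rough illustration of padding and truncation, the following plain-Python sketch forces a list of token indices to a fixed length. `pad_or_truncate` is a hypothetical helper, and using `0` as the padding value is an assumption of this sketch.

```python
def pad_or_truncate(token_ids, sequence_length, pad_value=0):
    """Return exactly `sequence_length` values: clip long inputs, pad short ones."""
    clipped = token_ids[:sequence_length]
    return clipped + [pad_value] * (sequence_length - len(clipped))


short = pad_or_truncate([5, 3, 9], sequence_length=5)
long = pad_or_truncate([1, 2, 3, 4, 5, 6, 7], sequence_length=5)
print(short)
print(long)
```

A fixed length lets every example in a batch share the same shape, which is what a model such as a 1D ConvNet expects.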

[

[

"MAX_SEQUENCE_LENGTH = 250\n\nint_vectorize_layer = TextVectorization(\n max_tokens=VOCAB_SIZE,\n output_mode='int',\n output_sequence_length=MAX_SEQUENCE_LENGTH)",

"_____no_output_____"

]

],

[

[