modelId

stringlengths 5

139

| author

stringlengths 2

42

| last_modified

timestamp[us, tz=UTC]date 2020-02-15 11:33:14

2025-09-23 12:32:37

| downloads

int64 0

223M

| likes

int64 0

11.7k

| library_name

stringclasses 571

values | tags

listlengths 1

4.05k

| pipeline_tag

stringclasses 55

values | createdAt

timestamp[us, tz=UTC]date 2022-03-02 23:29:04

2025-09-23 12:31:07

| card

stringlengths 11

1.01M

|

|---|---|---|---|---|---|---|---|---|---|

huggingtweets/423zb

|

huggingtweets

| 2021-05-21T16:38:25Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail: https://www.huggingtweets.com/423zb/1612221398403/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<link rel="stylesheet" href="https://unpkg.com/@tailwindcss/[email protected]/dist/typography.min.css">

<style>

@media (prefers-color-scheme: dark) {

.prose { color: #E2E8F0 !important; }

.prose h2, .prose h3, .prose a, .prose thead { color: #F7FAFC !important; }

}

</style>

<section class='prose'>

<div>

<div style="width: 132px; height:132px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1277051021064392706/wuQS0nyO_400x400.jpg')">

</div>

<div style="margin-top: 8px; font-size: 19px; font-weight: 800">423ZB 🤖 AI Bot </div>

<div style="font-size: 15px; color: #657786">@423zb bot</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://app.wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-model-to-generate-tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on [@423zb's tweets](https://twitter.com/423zb).

<table style='border-width:0'>

<thead style='border-width:0'>

<tr style='border-width:0 0 1px 0; border-color: #CBD5E0'>

<th style='border-width:0'>Data</th>

<th style='border-width:0'>Quantity</th>

</tr>

</thead>

<tbody style='border-width:0'>

<tr style='border-width:0 0 1px 0; border-color: #E2E8F0'>

<td style='border-width:0'>Tweets downloaded</td>

<td style='border-width:0'>3166</td>

</tr>

<tr style='border-width:0 0 1px 0; border-color: #E2E8F0'>

<td style='border-width:0'>Retweets</td>

<td style='border-width:0'>2425</td>

</tr>

<tr style='border-width:0 0 1px 0; border-color: #E2E8F0'>

<td style='border-width:0'>Short tweets</td>

<td style='border-width:0'>144</td>

</tr>

<tr style='border-width:0'>

<td style='border-width:0'>Tweets kept</td>

<td style='border-width:0'>597</td>

</tr>

</tbody>

</table>

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/jnwkepoo/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @423zb's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/29x1ggo7) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/29x1ggo7/artifacts) is logged and versioned.

## Intended uses & limitations

### How to use

You can use this model directly with a pipeline for text generation:

<pre><code><span style="color:#03A9F4">from</span> transformers <span style="color:#03A9F4">import</span> pipeline

generator = pipeline(<span style="color:#FF9800">'text-generation'</span>,

model=<span style="color:#FF9800">'huggingtweets/423zb'</span>)

generator(<span style="color:#FF9800">"My dream is"</span>, num_return_sequences=<span style="color:#8BC34A">5</span>)</code></pre>

### Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

</section>

[](https://twitter.com/intent/follow?screen_name=borisdayma)

<section class='prose'>

For more details, visit the project repository.

</section>

[](https://github.com/borisdayma/huggingtweets)

|

huggingtweets/3thyr3al

|

huggingtweets

| 2021-05-21T16:37:14Z | 11 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail: https://www.huggingtweets.com/3thyr3al/1617942034431/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div>

<div style="width: 132px; height:132px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1362160113247793153/VEYzwQTI_400x400.jpg')">

</div>

<div style="margin-top: 8px; font-size: 19px; font-weight: 800">ethy (3thyreඞl)🏺 🤖 AI Bot </div>

<div style="font-size: 15px">@3thyr3al bot</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on [@3thyr3al's tweets](https://twitter.com/3thyr3al).

| Data | Quantity |

| --- | --- |

| Tweets downloaded | 1727 |

| Retweets | 360 |

| Short tweets | 539 |

| Tweets kept | 828 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/2tr059nk/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @3thyr3al's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/m9xvw9pq) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/m9xvw9pq/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/3thyr3al')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[](https://github.com/borisdayma/huggingtweets)

|

huggingtweets/3rbunn1nja

|

huggingtweets

| 2021-05-21T16:32:07Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail: https://www.huggingtweets.com/3rbunn1nja/1616808238654/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div>

<div style="width: 132px; height:132px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1371476407767957505/xfhZ00Hv_400x400.jpg')">

</div>

<div style="margin-top: 8px; font-size: 19px; font-weight: 800">Jeremy Spradlin 🤖 AI Bot </div>

<div style="font-size: 15px">@3rbunn1nja bot</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on [@3rbunn1nja's tweets](https://twitter.com/3rbunn1nja).

| Data | Quantity |

| --- | --- |

| Tweets downloaded | 3251 |

| Retweets | 121 |

| Short tweets | 252 |

| Tweets kept | 2878 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/2fqh91fk/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @3rbunn1nja's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/3lk04zqn) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/3lk04zqn/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/3rbunn1nja')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[](https://github.com/borisdayma/huggingtweets)

|

huggingtweets/178kakapo

|

huggingtweets

| 2021-05-21T16:29:51Z | 6 | 1 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail: https://www.huggingtweets.com/178kakapo/1603720462678/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<link rel="stylesheet" href="https://unpkg.com/@tailwindcss/[email protected]/dist/typography.min.css">

<style>

@media (prefers-color-scheme: dark) {

.prose { color: #E2E8F0 !important; }

.prose h2, .prose h3, .prose a, .prose thead { color: #F7FAFC !important; }

}

</style>

<section class='prose'>

<div>

<div style="width: 132px; height:132px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/2476808798/p6cqc9mvgsdlhya7nb6p_400x400.jpeg')">

</div>

<div style="margin-top: 8px; font-size: 19px; font-weight: 800">KAKAPO➤Endangered 🤖 AI Bot </div>

<div style="font-size: 15px; color: #657786">@178kakapo bot</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://app.wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-model-to-generate-tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on [@178kakapo's tweets](https://twitter.com/178kakapo).

<table style='border-width:0'>

<thead style='border-width:0'>

<tr style='border-width:0 0 1px 0; border-color: #CBD5E0'>

<th style='border-width:0'>Data</th>

<th style='border-width:0'>Quantity</th>

</tr>

</thead>

<tbody style='border-width:0'>

<tr style='border-width:0 0 1px 0; border-color: #E2E8F0'>

<td style='border-width:0'>Tweets downloaded</td>

<td style='border-width:0'>3140</td>

</tr>

<tr style='border-width:0 0 1px 0; border-color: #E2E8F0'>

<td style='border-width:0'>Retweets</td>

<td style='border-width:0'>2196</td>

</tr>

<tr style='border-width:0 0 1px 0; border-color: #E2E8F0'>

<td style='border-width:0'>Short tweets</td>

<td style='border-width:0'>56</td>

</tr>

<tr style='border-width:0'>

<td style='border-width:0'>Tweets kept</td>

<td style='border-width:0'>888</td>

</tr>

</tbody>

</table>

[Explore the data](https://app.wandb.ai/wandb/huggingtweets/runs/1r7z36ek/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @178kakapo's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://app.wandb.ai/wandb/huggingtweets/runs/2tp7xvh0) for full transparency and reproducibility.

At the end of training, [the final model](https://app.wandb.ai/wandb/huggingtweets/runs/2tp7xvh0/artifacts) is logged and versioned.

## Intended uses & limitations

### How to use

You can use this model directly with a pipeline for text generation:

<pre><code><span style="color:#03A9F4">from</span> transformers <span style="color:#03A9F4">import</span> pipeline

generator = pipeline(<span style="color:#FF9800">'text-generation'</span>,

model=<span style="color:#FF9800">'huggingtweets/178kakapo'</span>)

generator(<span style="color:#FF9800">"My dream is"</span>, num_return_sequences=<span style="color:#8BC34A">5</span>)</code></pre>

### Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

</section>

[](https://twitter.com/intent/follow?screen_name=borisdayma)

<section class='prose'>

For more details, visit the project repository.

</section>

[](https://github.com/borisdayma/huggingtweets)

<!--- random size file -->

|

huggingtweets/14jun1995

|

huggingtweets

| 2021-05-21T16:23:35Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail: https://www.huggingtweets.com/14jun1995/1616669363048/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div>

<div style="width: 132px; height:132px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1236431647576330246/GGaeVBZJ_400x400.jpg')">

</div>

<div style="margin-top: 8px; font-size: 19px; font-weight: 800">mon nom non-mo 🤖 AI Bot </div>

<div style="font-size: 15px">@14jun1995 bot</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on [@14jun1995's tweets](https://twitter.com/14jun1995).

| Data | Quantity |

| --- | --- |

| Tweets downloaded | 3249 |

| Retweets | 20 |

| Short tweets | 213 |

| Tweets kept | 3016 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/1ppb6sp7/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @14jun1995's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/25pt100s) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/25pt100s/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/14jun1995')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[](https://github.com/borisdayma/huggingtweets)

|

huggingtweets/09indierock

|

huggingtweets

| 2021-05-21T16:21:05Z | 6 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail: https://www.huggingtweets.com/09indierock/1616791178582/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div>

<div style="width: 132px; height:132px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1363688455352553473/nfQUoTBH_400x400.jpg')">

</div>

<div style="margin-top: 8px; font-size: 19px; font-weight: 800">kn 🤖 AI Bot </div>

<div style="font-size: 15px">@09indierock bot</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on [@09indierock's tweets](https://twitter.com/09indierock).

| Data | Quantity |

| --- | --- |

| Tweets downloaded | 3126 |

| Retweets | 1094 |

| Short tweets | 428 |

| Tweets kept | 1604 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/39findw6/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @09indierock's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/33xy9nxb) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/33xy9nxb/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/09indierock')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[](https://github.com/borisdayma/huggingtweets)

|

gagan3012/project-code-py-small

|

gagan3012

| 2021-05-21T16:06:24Z | 11 | 1 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

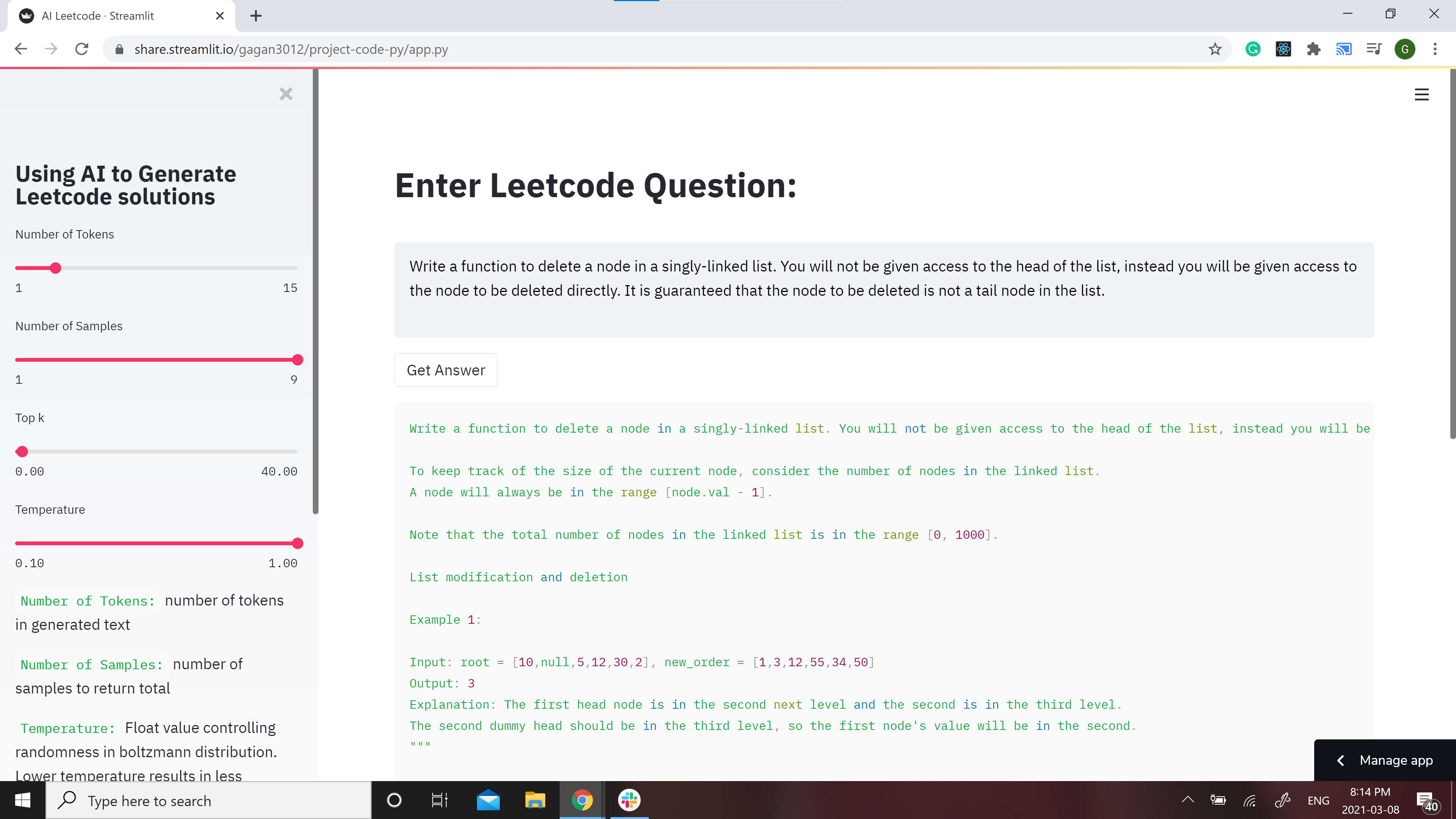

# Leetcode using AI :robot:

GPT-2 Model for Leetcode Questions in python

**Note**: the Answers might not make sense in some cases because of the bias in GPT-2

**Contribtuions:** If you would like to make the model better contributions are welcome Check out [CONTRIBUTIONS.md](https://github.com/gagan3012/project-code-py/blob/master/CONTRIBUTIONS.md)

### 📢 Favour:

It would be highly motivating, if you can STAR⭐ this repo if you find it helpful.

## Model

Two models have been developed for different use cases and they can be found at https://huggingface.co/gagan3012

The model weights can be found here: [GPT-2](https://huggingface.co/gagan3012/project-code-py) and [DistilGPT-2](https://huggingface.co/gagan3012/project-code-py-small)

### Example usage:

```python

from transformers import AutoTokenizer, AutoModelWithLMHead

tokenizer = AutoTokenizer.from_pretrained("gagan3012/project-code-py")

model = AutoModelWithLMHead.from_pretrained("gagan3012/project-code-py")

```

## Demo

[](https://share.streamlit.io/gagan3012/project-code-py/app.py)

A streamlit webapp has been setup to use the model: https://share.streamlit.io/gagan3012/project-code-py/app.py

## Example results:

### Question:

```

Write a function to delete a node in a singly-linked list. You will not be given access to the head of the list, instead you will be given access to the node to be deleted directly. It is guaranteed that the node to be deleted is not a tail node in the list.

```

### Answer:

```python

""" Write a function to delete a node in a singly-linked list. You will not be given access to the head of the list, instead you will be given access to the node to be deleted directly. It is guaranteed that the node to be deleted is not a tail node in the list.

For example,

a = 1->2->3

b = 3->1->2

t = ListNode(-1, 1)

Note: The lexicographic ordering of the nodes in a tree matters. Do not assign values to nodes in a tree.

Example 1:

Input: [1,2,3]

Output: 1->2->5

Explanation: 1->2->3->3->4, then 1->2->5[2] and then 5->1->3->4.

Note:

The length of a linked list will be in the range [1, 1000].

Node.val must be a valid LinkedListNode type.

Both the length and the value of the nodes in a linked list will be in the range [-1000, 1000].

All nodes are distinct.

"""

# Definition for singly-linked list.

# class ListNode:

# def __init__(self, x):

# self.val = x

# self.next = None

class Solution:

def deleteNode(self, head: ListNode, val: int) -> None:

"""

BFS

Linked List

:param head: ListNode

:param val: int

:return: ListNode

"""

if head is not None:

return head

dummy = ListNode(-1, 1)

dummy.next = head

dummy.next.val = val

dummy.next.next = head

dummy.val = ""

s1 = Solution()

print(s1.deleteNode(head))

print(s1.deleteNode(-1))

print(s1.deleteNode(-1))

```

|

elgeish/gpt2-medium-arabic-poetry

|

elgeish

| 2021-05-21T15:45:14Z | 13 | 7 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"poetry",

"ar",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: ar

datasets:

- Arabic Poetry Dataset (6th - 21st century)

metrics:

- perplexity

tags:

- text-generation

- poetry

license: apache-2.0

widget:

- text: "للوهلة الأولى قرأت في عينيه"

model-index:

- name: elgeish Arabic GPT2 Medium

results:

- task:

name: Text Generation

type: text-generation

dataset:

name: Arabic Poetry Dataset (6th - 21st century)

type: poetry

args: ar

metrics:

- name: Validation Perplexity

type: perplexity

value: 282.09

---

# GPT2-Medium-Arabic-Poetry

Fine-tuned [aubmindlab/aragpt2-medium](https://huggingface.co/aubmindlab/aragpt2-medium) on

the [Arabic Poetry Dataset (6th - 21st century)](https://www.kaggle.com/fahd09/arabic-poetry-dataset-478-2017)

using 41,922 lines of poetry as the train split and 9,007 (by poets not in the train split) for validation.

## Usage

```python

from transformers import AutoModelForCausalLM, AutoTokenizer, set_seed

set_seed(42)

model_name = "elgeish/gpt2-medium-arabic-poetry"

model = AutoModelForCausalLM.from_pretrained(model_name).to("cuda")

tokenizer = AutoTokenizer.from_pretrained(model_name)

prompt = "للوهلة الأولى قرأت في عينيه"

input_ids = tokenizer.encode(prompt, return_tensors="pt")

samples = model.generate(

input_ids.to("cuda"),

do_sample=True,

early_stopping=True,

max_length=32,

min_length=16,

num_return_sequences=3,

pad_token_id=50256,

repetition_penalty=1.5,

top_k=32,

top_p=0.95,

)

for sample in samples:

print(tokenizer.decode(sample.tolist()))

print("--")

```

Here's the output:

```

للوهلة الأولى قرأت في عينيه عن تلك النسم لم تذكر شيءا فلربما نامت علي كتفيها العصافير وتناثرت اوراق التوت عليها وغابت الوردة من

--

للوهلة الأولى قرأت في عينيه اية نشوة من ناره وهي تنظر الي المستقبل بعيون خلاقة ورسمت خطوطه العريضة علي جبينك العاري رسمت الخطوط الحمر فوق شعرك

--

للوهلة الأولى قرأت في عينيه كل ما كان وما سيكون غدا اذا لم تكن امراة ستكبر كثيرا علي الورق الابيض او لا تري مثلا خطوطا رفيعة فوق صفحة الماء

--

```

|

DebateLabKIT/cript-large

|

DebateLabKIT

| 2021-05-21T15:31:48Z | 7 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"en",

"arxiv:2009.07185",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

tags:

- gpt2

---

# CRiPT Model Large (Critical Thinking Intermediarily Pretrained Transformer)

Large version of the trained model (`SYL01-2020-10-24-72K/gpt2-large-train03-72K`) presented in the paper "Critical Thinking for Language Models" (Betz, Voigt and Richardson 2020). See also:

* [blog entry](https://debatelab.github.io/journal/critical-thinking-language-models.html)

* [GitHub repo](https://github.com/debatelab/aacorpus)

* [paper](https://arxiv.org/pdf/2009.07185)

|

ceostroff/harry-potter-gpt2-fanfiction

|

ceostroff

| 2021-05-21T14:51:47Z | 10 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"jax",

"gpt2",

"text-generation",

"harry-potter",

"en",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language:

- en

tags:

- harry-potter

license: mit

---

# Harry Potter Fanfiction Generator

This is a pre-trained GPT-2 generative text model that allows you to generate your own Harry Potter fanfiction, trained off of the top 100 rated fanficition stories. We intend for this to be used for individual fun and experimentation and not as a commercial product.

|

bigjoedata/rockbot355M

|

bigjoedata

| 2021-05-21T14:17:25Z | 6 | 1 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

# 🎸 🥁 Rockbot 🎤 🎧

A [GPT-2](https://openai.com/blog/better-language-models/) based lyrics generator fine-tuned on the writing styles of 16000 songs by 270 artists across MANY genres (not just rock).

**Instructions:** Type in a fake song title, pick an artist, click "Generate".

Most language models are imprecise and Rockbot is no exception. You may see NSFW lyrics unexpectedly. I have made no attempts to censor. Generated lyrics may be repetitive and/or incoherent at times, but hopefully you'll encounter something interesting or memorable.

Oh, and generation is resource intense and can be slow. I set governors on song length to keep generation time somewhat reasonable. You may adjust song length and other parameters on the left or check out [Github](https://github.com/bigjoedata/rockbot) to spin up your own Rockbot.

Just have fun.

[Demo](https://share.streamlit.io/bigjoedata/rockbot/main/src/main.py) Adjust settings to increase speed

[Github](https://github.com/bigjoedata/rockbot)

[GPT-2 124M version Model page on Hugging Face](https://huggingface.co/bigjoedata/rockbot)

[DistilGPT2 version Model page on Hugging Face](https://huggingface.co/bigjoedata/rockbot-distilgpt2/) This is leaner with the tradeoff being that the lyrics are more simplistic.

🎹 🪘 🎷 🎺 🪗 🪕 🎻

## Background

With the shutdown of [Google Play Music](https://en.wikipedia.org/wiki/Google_Play_Music) I used Google's takeout function to gather the metadata from artists I've listened to over the past several years. I wanted to take advantage of this bounty to build something fun. I scraped the top 50 lyrics for artists I'd listened to at least once from [Genius](https://genius.com/), then fine tuned [GPT-2's](https://openai.com/blog/better-language-models/) 124M token model using the [AITextGen](https://github.com/minimaxir/aitextgen) framework after considerable post-processing. For more on generation, see [here.](https://huggingface.co/blog/how-to-generate)

### Full Tech Stack

[Google Play Music](https://en.wikipedia.org/wiki/Google_Play_Music) (R.I.P.).

[Python](https://www.python.org/).

[Streamlit](https://www.streamlit.io/).

[GPT-2](https://openai.com/blog/better-language-models/).

[AITextGen](https://github.com/minimaxir/aitextgen).

[Pandas](https://pandas.pydata.org/).

[LyricsGenius](https://lyricsgenius.readthedocs.io/en/master/).

[Google Colab](https://colab.research.google.com/) (GPU based Training).

[Knime](https://www.knime.com/) (data cleaning).

## How to Use The Model

Please refer to [AITextGen](https://github.com/minimaxir/aitextgen) for much better documentation.

### Training Parameters Used

ai.train("lyrics.txt",

line_by_line=False,

from_cache=False,

num_steps=10000,

generate_every=2000,

save_every=2000,

save_gdrive=False,

learning_rate=1e-3,

batch_size=3,

eos_token="<|endoftext|>",

#fp16=True

)

### To Use

Generate With Prompt (Use Title Case):

Song Name

BY

Artist Name

|

Dongjae/mrc2reader

|

Dongjae

| 2021-05-21T13:25:57Z | 14 | 0 |

transformers

|

[

"transformers",

"pytorch",

"xlm-roberta",

"question-answering",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2022-03-02T23:29:04Z |

The Reader model is for Korean Question Answering

The backbone model is deepset/xlm-roberta-large-squad2.

It is a finetuned model with KorQuAD-v1 dataset.

As a result of verification using KorQuAD evaluation dataset, it showed approximately 87% and 92% respectively for the EM score and F1 score.

Thank you

|

anonymous-german-nlp/german-gpt2

|

anonymous-german-nlp

| 2021-05-21T13:20:42Z | 338 | 1 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"jax",

"gpt2",

"text-generation",

"de",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: de

widget:

- text: "Heute ist sehr schönes Wetter in"

license: mit

---

# German GPT-2 model

**Note**: This model was de-anonymized and now lives at:

https://huggingface.co/dbmdz/german-gpt2

Please use the new model name instead!

|

aliosm/ComVE-gpt2

|

aliosm

| 2021-05-21T13:19:25Z | 7 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"exbert",

"commonsense",

"semeval2020",

"comve",

"en",

"dataset:ComVE",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: "en"

tags:

- exbert

- commonsense

- semeval2020

- comve

license: "mit"

datasets:

- ComVE

metrics:

- bleu

widget:

- text: "Chicken can swim in water. <|continue|>"

---

# ComVE-gpt2

## Model description

Finetuned model on Commonsense Validation and Explanation (ComVE) dataset introduced in [SemEval2020 Task4](https://competitions.codalab.org/competitions/21080) using a causal language modeling (CLM) objective.

The model is able to generate a reason why a given natural language statement is against commonsense.

## Intended uses & limitations

You can use the raw model for text generation to generate reasons why natural language statements are against commonsense.

#### How to use

You can use this model directly to generate reasons why the given statement is against commonsense using [`generate.sh`](https://github.com/AliOsm/SemEval2020-Task4-ComVE/tree/master/TaskC-Generation) script.

*Note:* make sure that you are using version `2.4.1` of `transformers` package. Newer versions has some issue in text generation and the model repeats the last token generated again and again.

#### Limitations and bias

The model biased to negate the entered sentence usually instead of producing a factual reason.

## Training data

The model is initialized from the [gpt2](https://github.com/huggingface/transformers/blob/master/model_cards/gpt2-README.md) model and finetuned using [ComVE](https://github.com/wangcunxiang/SemEval2020-Task4-Commonsense-Validation-and-Explanation) dataset which contains 10K against commonsense sentences, each of them is paired with three reference reasons.

## Training procedure

Each natural language statement that against commonsense is concatenated with its reference reason with `<|continue|>` as a separator, then the model finetuned using CLM objective.

The model trained on Nvidia Tesla P100 GPU from Google Colab platform with 5e-5 learning rate, 5 epochs, 128 maximum sequence length and 64 batch size.

<center>

<img src="https://i.imgur.com/xKbrwBC.png">

</center>

## Eval results

The model achieved 14.0547/13.6534 BLEU scores on SemEval2020 Task4: Commonsense Validation and Explanation development and testing dataset.

### BibTeX entry and citation info

```bibtex

@article{fadel2020justers,

title={JUSTers at SemEval-2020 Task 4: Evaluating Transformer Models Against Commonsense Validation and Explanation},

author={Fadel, Ali and Al-Ayyoub, Mahmoud and Cambria, Erik},

year={2020}

}

```

<a href="https://huggingface.co/exbert/?model=aliosm/ComVE-gpt2">

<img width="300px" src="https://cdn-media.huggingface.co/exbert/button.png">

</a>

|

aliosm/ComVE-gpt2-medium

|

aliosm

| 2021-05-21T13:17:55Z | 8 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"feature-extraction",

"exbert",

"commonsense",

"semeval2020",

"comve",

"en",

"dataset:ComVE",

"license:mit",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

feature-extraction

| 2022-03-02T23:29:05Z |

---

language: "en"

tags:

- gpt2

- exbert

- commonsense

- semeval2020

- comve

license: "mit"

datasets:

- ComVE

metrics:

- bleu

widget:

- text: "Chicken can swim in water. <|continue|>"

---

# ComVE-gpt2-medium

## Model description

Finetuned model on Commonsense Validation and Explanation (ComVE) dataset introduced in [SemEval2020 Task4](https://competitions.codalab.org/competitions/21080) using a causal language modeling (CLM) objective.

The model is able to generate a reason why a given natural language statement is against commonsense.

## Intended uses & limitations

You can use the raw model for text generation to generate reasons why natural language statements are against commonsense.

#### How to use

You can use this model directly to generate reasons why the given statement is against commonsense using [`generate.sh`](https://github.com/AliOsm/SemEval2020-Task4-ComVE/tree/master/TaskC-Generation) script.

*Note:* make sure that you are using version `2.4.1` of `transformers` package. Newer versions has some issue in text generation and the model repeats the last token generated again and again.

#### Limitations and bias

The model biased to negate the entered sentence usually instead of producing a factual reason.

## Training data

The model is initialized from the [gpt2-medium](https://github.com/huggingface/transformers/blob/master/model_cards/gpt2-README.md) model and finetuned using [ComVE](https://github.com/wangcunxiang/SemEval2020-Task4-Commonsense-Validation-and-Explanation) dataset which contains 10K against commonsense sentences, each of them is paired with three reference reasons.

## Training procedure

Each natural language statement that against commonsense is concatenated with its reference reason with `<|continue|>` as a separator, then the model finetuned using CLM objective.

The model trained on Nvidia Tesla P100 GPU from Google Colab platform with 5e-5 learning rate, 5 epochs, 128 maximum sequence length and 64 batch size.

<center>

<img src="https://i.imgur.com/xKbrwBC.png">

</center>

## Eval results

The model achieved fifth place with 16.7153/16.1187 BLEU scores and third place with 1.94 Human Evaluation score on SemEval2020 Task4: Commonsense Validation and Explanation development and testing dataset.

These are some examples generated by the model:

| Against Commonsense Statement | Generated Reason |

|:-----------------------------------------------------:|:--------------------------------------------:|

| Chicken can swim in water. | Chicken can't swim. |

| shoes can fly | Shoes are not able to fly. |

| Chocolate can be used to make a coffee pot | Chocolate is not used to make coffee pots. |

| you can also buy tickets online with an identity card | You can't buy tickets with an identity card. |

| a ball is square and can roll | A ball is round and cannot roll. |

| You can use detergent to dye your hair. | Detergent is used to wash clothes. |

| you can eat mercury | mercury is poisonous |

| A gardener can follow a suspect | gardener is not a police officer |

| cars can float in the ocean just like a boat | Cars are too heavy to float in the ocean. |

| I am going to work so I can lose money. | Working is not a way to lose money. |

### BibTeX entry and citation info

```bibtex

@article{fadel2020justers,

title={JUSTers at SemEval-2020 Task 4: Evaluating Transformer Models Against Commonsense Validation and Explanation},

author={Fadel, Ali and Al-Ayyoub, Mahmoud and Cambria, Erik},

year={2020}

}

```

<a href="https://huggingface.co/exbert/?model=aliosm/ComVE-gpt2-medium">

<img width="300px" src="https://cdn-media.huggingface.co/exbert/button.png">

</a>

|

aliosm/ComVE-gpt2-large

|

aliosm

| 2021-05-21T13:12:02Z | 13 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"exbert",

"commonsense",

"semeval2020",

"comve",

"en",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: "en"

tags:

- gpt2

- exbert

- commonsense

- semeval2020

- comve

license: "mit"

datasets:

- https://github.com/wangcunxiang/SemEval2020-Task4-Commonsense-Validation-and-Explanation

metrics:

- bleu

widget:

- text: "Chicken can swim in water. <|continue|>"

---

# ComVE-gpt2-large

## Model description

Finetuned model on Commonsense Validation and Explanation (ComVE) dataset introduced in [SemEval2020 Task4](https://competitions.codalab.org/competitions/21080) using a causal language modeling (CLM) objective.

The model is able to generate a reason why a given natural language statement is against commonsense.

## Intended uses & limitations

You can use the raw model for text generation to generate reasons why natural language statements are against commonsense.

#### How to use

You can use this model directly to generate reasons why the given statement is against commonsense using [`generate.sh`](https://github.com/AliOsm/SemEval2020-Task4-ComVE/tree/master/TaskC-Generation) script.

*Note:* make sure that you are using version `2.4.1` of `transformers` package. Newer versions has some issue in text generation and the model repeats the last token generated again and again.

#### Limitations and bias

The model biased to negate the entered sentence usually instead of producing a factual reason.

## Training data

The model is initialized from the [gpt2-large](https://github.com/huggingface/transformers/blob/master/model_cards/gpt2-README.md) model and finetuned using [ComVE](https://github.com/wangcunxiang/SemEval2020-Task4-Commonsense-Validation-and-Explanation) dataset which contains 10K against commonsense sentences, each of them is paired with three reference reasons.

## Training procedure

Each natural language statement that against commonsense is concatenated with its reference reason with `<|conteniue|>` as a separator, then the model finetuned using CLM objective.

The model trained on Nvidia Tesla P100 GPU from Google Colab platform with 5e-5 learning rate, 5 epochs, 128 maximum sequence length and 64 batch size.

<center>

<img src="https://i.imgur.com/xKbrwBC.png">

</center>

## Eval results

The model achieved 16.5110/15.9299 BLEU scores on SemEval2020 Task4: Commonsense Validation and Explanation development and testing dataset.

### BibTeX entry and citation info

```bibtex

@article{fadel2020justers,

title={JUSTers at SemEval-2020 Task 4: Evaluating Transformer Models Against Commonsense Validation and Explanation},

author={Fadel, Ali and Al-Ayyoub, Mahmoud and Cambria, Erik},

year={2020}

}

```

<a href="https://huggingface.co/exbert/?model=aliosm/ComVE-gpt2-large">

<img width="300px" src="https://cdn-media.huggingface.co/exbert/button.png">

</a>

|

aliosm/ComVE-distilgpt2

|

aliosm

| 2021-05-21T13:07:30Z | 13 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"exbert",

"commonsense",

"semeval2020",

"comve",

"en",

"dataset:ComVE",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: "en"

tags:

- exbert

- commonsense

- semeval2020

- comve

license: "mit"

datasets:

- ComVE

metrics:

- bleu

widget:

- text: "Chicken can swim in water. <|continue|>"

---

# ComVE-distilgpt2

## Model description

Finetuned model on Commonsense Validation and Explanation (ComVE) dataset introduced in [SemEval2020 Task4](https://competitions.codalab.org/competitions/21080) using a causal language modeling (CLM) objective.

The model is able to generate a reason why a given natural language statement is against commonsense.

## Intended uses & limitations

You can use the raw model for text generation to generate reasons why natural language statements are against commonsense.

#### How to use

You can use this model directly to generate reasons why the given statement is against commonsense using [`generate.sh`](https://github.com/AliOsm/SemEval2020-Task4-ComVE/tree/master/TaskC-Generation) script.

*Note:* make sure that you are using version `2.4.1` of `transformers` package. Newer versions has some issue in text generation and the model repeats the last token generated again and again.

#### Limitations and bias

The model biased to negate the entered sentence usually instead of producing a factual reason.

## Training data

The model is initialized from the [distilgpt2](https://github.com/huggingface/transformers/blob/master/model_cards/distilgpt2-README.md) model and finetuned using [ComVE](https://github.com/wangcunxiang/SemEval2020-Task4-Commonsense-Validation-and-Explanation) dataset which contains 10K against commonsense sentences, each of them is paired with three reference reasons.

## Training procedure

Each natural language statement that against commonsense is concatenated with its reference reason with `<|continue|>` as a separator, then the model finetuned using CLM objective.

The model trained on Nvidia Tesla P100 GPU from Google Colab platform with 5e-5 learning rate, 15 epochs, 128 maximum sequence length and 64 batch size.

<center>

<img src="https://i.imgur.com/xKbrwBC.png">

</center>

## Eval results

The model achieved 13.7582/13.8026 BLEU scores on SemEval2020 Task4: Commonsense Validation and Explanation development and testing dataset.

### BibTeX entry and citation info

```bibtex

@article{fadel2020justers,

title={JUSTers at SemEval-2020 Task 4: Evaluating Transformer Models Against Commonsense Validation and Explanation},

author={Fadel, Ali and Al-Ayyoub, Mahmoud and Cambria, Erik},

year={2020}

}

```

<a href="https://huggingface.co/exbert/?model=aliosm/ComVE-distilgpt2">

<img width="300px" src="https://cdn-media.huggingface.co/exbert/button.png">

</a>

|

ainize/gpt2-spongebob-script-large

|

ainize

| 2021-05-21T12:18:42Z | 7 | 1 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

### Model information

Fine tuning data: https://www.kaggle.com/mikhailgaerlan/spongebob-squarepants-completed-transcripts

License: CC-BY-SA

Base model: gpt-2 large

Epoch: 50

Train runtime: 14723.0716 secs

Loss: 0.0268

API page: [Ainize](https://ainize.ai/fpem123/GPT2-Spongebob?branch=master)

Demo page: [End-point](https://master-gpt2-spongebob-fpem123.endpoint.ainize.ai/)

### ===Teachable NLP=== ###

To train a GPT-2 model, write code and require GPU resources, but can easily fine-tune and get an API to use the model here for free.

Teachable NLP: [Teachable NLP](https://ainize.ai/teachable-nlp)

Tutorial: [Tutorial](https://forum.ainetwork.ai/t/teachable-nlp-how-to-use-teachable-nlp/65?utm_source=community&utm_medium=huggingface&utm_campaign=model&utm_content=teachable%20nlp)

|

ainize/gpt2-rnm-with-spongebob

|

ainize

| 2021-05-21T12:09:02Z | 9 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

### Model information

Fine tuning data 1: https://www.kaggle.com/andradaolteanu/rickmorty-scripts

Fine tuning data 2: https://www.kaggle.com/mikhailgaerlan/spongebob-squarepants-completed-transcripts

Base model: e-tony/gpt2-rnm

Epoch: 2

Train runtime: 790.0612 secs

Loss: 2.8569

API page: [Ainize](https://ainize.ai/fpem123/GPT2-Rick-N-Morty-with-SpongeBob?branch=master)

Demo page: [End-point](https://master-gpt2-rick-n-morty-with-sponge-bob-fpem123.endpoint.ainize.ai/)

### ===Teachable NLP=== ###

To train a GPT-2 model, write code and require GPU resources, but can easily fine-tune and get an API to use the model here for free.

Teachable NLP: [Teachable NLP](https://ainize.ai/teachable-nlp)

Tutorial: [Tutorial](https://forum.ainetwork.ai/t/teachable-nlp-how-to-use-teachable-nlp/65?utm_source=community&utm_medium=huggingface&utm_campaign=model&utm_content=teachable%20nlp)

|

SIC98/GPT2-python-code-generator

|

SIC98

| 2021-05-21T11:13:58Z | 17 | 9 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:04Z |

Github

- https://github.com/SIC98/GPT2-python-code-generator

|

HooshvareLab/gpt2-fa-comment

|

HooshvareLab

| 2021-05-21T10:47:25Z | 30 | 2 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"jax",

"gpt2",

"text-generation",

"fa",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:04Z |

---

language: fa

license: apache-2.0

widget:

- text: "<s>نمونه دیدگاه هم خوب هم بد به طور کلی <sep>"

- text: "<s>نمونه دیدگاه خیلی منفی از نظر کیفیت و طعم <sep>"

- text: "<s>نمونه دیدگاه خوب از نظر بازی و کارگردانی <sep>"

- text: "<s>نمونه دیدگاه خیلی خوب از نظر بازی و صحنه و داستان <sep>"

- text: "<s>نمونه دیدگاه خیلی منفی از نظر ارزش خرید و طعم و کیفیت <sep>"

---

# Persian Comment Generator

The model can generate comments based on your aspects, and the model was fine-tuned on [persiannlp/parsinlu](https://github.com/persiannlp/parsinlu). Currently, the model only supports aspects in the food and movie scope. You can see the whole aspects in the following section.

## Comments Aspects

```text

<s>نمونه دیدگاه هم خوب هم بد به طور کلی <sep>

<s>نمونه دیدگاه خوب به طور کلی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر طعم <sep>

<s>نمونه دیدگاه خیلی منفی از نظر طعم و کیفیت <sep>

<s>نمونه دیدگاه خوب از نظر ارزش غذایی و ارزش خرید <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر طعم و بسته بندی <sep>

<s>نمونه دیدگاه خوب از نظر کیفیت <sep>

<s>نمونه دیدگاه خیلی خوب از نظر طعم و کیفیت <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر کیفیت و ارزش خرید <sep>

<s>نمونه دیدگاه خیلی منفی از نظر کیفیت <sep>

<s>نمونه دیدگاه منفی از نظر کیفیت <sep>

<s>نمونه دیدگاه خوب از نظر طعم <sep>

<s>نمونه دیدگاه خیلی خوب به طور کلی <sep>

<s>نمونه دیدگاه خوب از نظر بسته بندی <sep>

<s>نمونه دیدگاه منفی از نظر کیفیت و طعم <sep>

<s>نمونه دیدگاه خیلی منفی از نظر ارسال و طعم <sep>

<s>نمونه دیدگاه خیلی منفی از نظر کیفیت و طعم <sep>

<s>نمونه دیدگاه منفی به طور کلی <sep>

<s>نمونه دیدگاه خوب از نظر ارزش خرید <sep>

<s>نمونه دیدگاه خوب از نظر کیفیت و بسته بندی و طعم <sep>

<s>نمونه دیدگاه خیلی منفی از نظر ارزش خرید و کیفیت <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر طعم و ارزش خرید <sep>

<s>نمونه دیدگاه خیلی خوب از نظر طعم و ارزش خرید <sep>

<s>نمونه دیدگاه منفی از نظر ارسال <sep>

<s>نمونه دیدگاه منفی از نظر طعم <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر ارزش خرید و طعم <sep>

<s>نمونه دیدگاه خیلی منفی از نظر طعم و ارزش خرید <sep>

<s>نمونه دیدگاه نظری ندارم به طور کلی <sep>

<s>نمونه دیدگاه خیلی منفی از نظر طعم <sep>

<s>نمونه دیدگاه خیلی منفی به طور کلی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر بسته بندی <sep>

<s>نمونه دیدگاه خیلی منفی از نظر ارزش خرید و کیفیت و طعم <sep>

<s>نمونه دیدگاه خیلی خوب از نظر ارزش خرید <sep>

<s>نمونه دیدگاه منفی از نظر کیفیت و ارزش خرید <sep>

<s>نمونه دیدگاه خیلی منفی از نظر کیفیت و بسته بندی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر کیفیت <sep>

<s>نمونه دیدگاه منفی از نظر طعم و کیفیت <sep>

<s>نمونه دیدگاه خوب از نظر طعم و کیفیت و ارزش خرید <sep>

<s>نمونه دیدگاه خیلی منفی از نظر ارسال <sep>

<s>نمونه دیدگاه خیلی منفی از نظر ارزش خرید و طعم <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر طعم <sep>

<s>نمونه دیدگاه خیلی خوب از نظر بسته بندی و طعم <sep>

<s>نمونه دیدگاه خیلی خوب از نظر ارزش خرید و کیفیت و بسته بندی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر بسته بندی و طعم و ارزش خرید <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر کیفیت و طعم <sep>

<s>نمونه دیدگاه خیلی خوب از نظر طعم و بسته بندی <sep>

<s>نمونه دیدگاه خیلی منفی از نظر طعم و کیفیت و بسته بندی <sep>

<s>نمونه دیدگاه خوب از نظر ارزش خرید و بسته بندی و کیفیت <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر طعم و کیفیت <sep>

<s>نمونه دیدگاه خیلی خوب از نظر بسته بندی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر ارزش خرید و کیفیت <sep>

<s>نمونه دیدگاه خیلی خوب از نظر کیفیت و ارزش خرید و طعم <sep>

<s>نمونه دیدگاه خیلی خوب از نظر ارزش خرید و طعم <sep>

<s>نمونه دیدگاه خیلی منفی از نظر کیفیت و بسته بندی و ارسال <sep>

<s>نمونه دیدگاه خوب از نظر کیفیت و ارزش خرید <sep>

<s>نمونه دیدگاه خیلی منفی از نظر کیفیت و ارزش غذایی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر کیفیت و ارزش خرید <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر کیفیت <sep>

<s>نمونه دیدگاه منفی از نظر بسته بندی <sep>

<s>نمونه دیدگاه خوب از نظر طعم و کیفیت <sep>

<s>نمونه دیدگاه خوب از نظر کیفیت و ارزش غذایی <sep>

<s>نمونه دیدگاه خیلی منفی از نظر کیفیت و ارزش خرید <sep>

<s>نمونه دیدگاه خوب از نظر طعم و کیفیت و بسته بندی <sep>

<s>نمونه دیدگاه خیلی منفی از نظر ارزش خرید <sep>

<s>نمونه دیدگاه منفی از نظر ارسال و کیفیت <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر ارزش خرید <sep>

<s>نمونه دیدگاه خیلی منفی از نظر بسته بندی <sep>

<s>نمونه دیدگاه خیلی منفی از نظر کیفیت و بسته بندی و ارزش خرید <sep>

<s>نمونه دیدگاه خوب از نظر طعم و ارزش غذایی <sep>

<s>نمونه دیدگاه منفی از نظر ارزش خرید <sep>

<s>نمونه دیدگاه خیلی خوب از نظر کیفیت و طعم <sep>

<s>نمونه دیدگاه خوب از نظر کیفیت و بسته بندی <sep>

<s>نمونه دیدگاه خیلی منفی از نظر بسته بندی و طعم <sep>

<s>نمونه دیدگاه خیلی منفی از نظر طعم و ارزش غذایی <sep>

<s>نمونه دیدگاه خوب از نظر کیفیت و طعم <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر طعم و ارسال <sep>

<s>نمونه دیدگاه خیلی خوب از نظر ارزش غذایی <sep>

<s>نمونه دیدگاه خوب از نظر ارزش خرید و کیفیت <sep>

<s>نمونه دیدگاه خوب از نظر ارزش غذایی <sep>

<s>نمونه دیدگاه خوب از نظر طعم و ارزش خرید <sep>

<s>نمونه دیدگاه منفی از نظر طعم و ارزش خرید <sep>

<s>نمونه دیدگاه منفی از نظر ارزش خرید و کیفیت <sep>

<s>نمونه دیدگاه خوب از نظر کیفیت و ارزش خرید و طعم <sep>

<s>نمونه دیدگاه خیلی خوب از نظر بسته بندی و ارسال و طعم و ارزش خرید <sep>

<s>نمونه دیدگاه خوب از نظر کیفیت و طعم و ارزش خرید <sep>

<s>نمونه دیدگاه خوب از نظر کیفیت و بسته بندی و ارزش خرید <sep>

<s>نمونه دیدگاه خیلی خوب از نظر بسته بندی و کیفیت و ارزش خرید <sep>

<s>نمونه دیدگاه منفی از نظر ارزش خرید و طعم <sep>

<s>نمونه دیدگاه خیلی منفی از نظر طعم و بسته بندی <sep>

<s>نمونه دیدگاه خیلی منفی از نظر طعم و کیفیت و ارزش خرید <sep>

<s>نمونه دیدگاه منفی از نظر بسته بندی و کیفیت و طعم <sep>

<s>نمونه دیدگاه خوب از نظر ارسال <sep>

<s>نمونه دیدگاه خیلی خوب از نظر کیفیت و بسته بندی و ارزش غذایی و ارزش خرید <sep>

<s>نمونه دیدگاه خیلی خوب از نظر ارزش غذایی و کیفیت <sep>

<s>نمونه دیدگاه خیلی خوب از نظر کیفیت و طعم و ارزش خرید <sep>

<s>نمونه دیدگاه خوب از نظر طعم و ارسال <sep>

<s>نمونه دیدگاه خیلی خوب از نظر طعم و کیفیت و ارزش خرید <sep>

<s>نمونه دیدگاه خوب از نظر بسته بندی و ارزش خرید <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر ارزش غذایی و طعم <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر کیفیت و ارزش خرید و طعم <sep>

<s>نمونه دیدگاه خیلی منفی از نظر ارزش غذایی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر ارزش خرید و کیفیت <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر ارزش غذایی و ارزش خرید <sep>

<s>نمونه دیدگاه منفی از نظر طعم و ارزش غذایی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر کیفیت و ارسال <sep>

<s>نمونه دیدگاه خوب از نظر ارزش خرید و طعم <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر ارزش غذایی و بسته بندی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر طعم و ارزش غذایی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر طعم و کیفیت و ارسال <sep>

<s>نمونه دیدگاه خیلی خوب از نظر کیفیت و بسته بندی و طعم و ارزش خرید <sep>

<s>نمونه دیدگاه خیلی خوب از نظر طعم و ارزش غذایی <sep>

<s>نمونه دیدگاه خوب از نظر بسته بندی و طعم و کیفیت <sep>

<s>نمونه دیدگاه خیلی خوب از نظر ارزش خرید و ارزش غذایی <sep>

<s>نمونه دیدگاه خوب از نظر ارسال و طعم <sep>

<s>نمونه دیدگاه خوب از نظر ارزش خرید و ارسال <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر ارزش غذایی و کیفیت <sep>

<s>نمونه دیدگاه خوب از نظر ارزش خرید و بسته بندی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر کیفیت و طعم و بسته بندی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر ارزش خرید و طعم و کیفیت <sep>

<s>نمونه دیدگاه خیلی منفی از نظر بسته بندی و کیفیت <sep>

<s>نمونه دیدگاه خیلی خوب از نظر ارزش خرید و کیفیت و طعم <sep>

<s>نمونه دیدگاه خیلی منفی از نظر طعم و ارزش خرید و کیفیت <sep>

<s>نمونه دیدگاه منفی از نظر بسته بندی و کیفیت و ارزش خرید <sep>

<s>نمونه دیدگاه خیلی منفی از نظر طعم و کیفیت و ارزش خرید و بسته بندی <sep>

<s>نمونه دیدگاه خوب از نظر ارزش غذایی و ارسال <sep>

<s>نمونه دیدگاه خوب از نظر کیفیت و طعم و ارزش خرید و ارسال <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر ارسال و طعم <sep>

<s>نمونه دیدگاه خیلی منفی از نظر ارزش خرید و بسته بندی و طعم <sep>

<s>نمونه دیدگاه خیلی خوب از نظر ارسال و بسته بندی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر طعم و ارزش خرید و ارسال <sep>

<s>نمونه دیدگاه خیلی منفی از نظر کیفیت و ارزش خرید و طعم <sep>

<s>نمونه دیدگاه خوب از نظر بسته بندی و کیفیت <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر بسته بندی و کیفیت <sep>

<s>نمونه دیدگاه خوب از نظر ارزش خرید و بسته بندی و ارسال <sep>

<s>نمونه دیدگاه خیلی منفی از نظر بسته بندی و طعم و ارزش خرید <sep>

<s>نمونه دیدگاه نظری ندارم از نظر بسته بندی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر کیفیت و بسته بندی و طعم <sep>

<s>نمونه دیدگاه خوب از نظر طعم و بسته بندی <sep>

<s>نمونه دیدگاه خیلی منفی از نظر طعم و ارزش خرید و بسته بندی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر ارزش خرید و بسته بندی <sep>

<s>نمونه دیدگاه خوب از نظر ارزش خرید و ارزش غذایی <sep>

<s>نمونه دیدگاه منفی از نظر طعم و بسته بندی <sep>

<s>نمونه دیدگاه منفی از نظر کیفیت و بسته بندی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر کیفیت و ارزش غذایی و بسته بندی <sep>

<s>نمونه دیدگاه خوب از نظر ارسال و بسته بندی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر ارسال <sep>

<s>نمونه دیدگاه نظری ندارم از نظر طعم <sep>

<s>نمونه دیدگاه خیلی خوب از نظر کیفیت و بسته بندی <sep>

<s>نمونه دیدگاه منفی از نظر ارزش غذایی <sep>

<s>نمونه دیدگاه خوب از نظر بسته بندی و طعم <sep>

<s>نمونه دیدگاه خیلی منفی از نظر ارسال و کیفیت <sep>

<s>نمونه دیدگاه خیلی خوب از نظر طعم و کیفیت و بسته بندی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر طعم و کیفیت و بسته بندی و ارزش غذایی <sep>

<s>نمونه دیدگاه خوب از نظر طعم و بسته بندی و ارزش خرید <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر کیفیت و ارسال <sep>

<s>نمونه دیدگاه خیلی خوب از نظر طعم و کیفیت و ارزش غذایی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر کیفیت و طعم و ارزش غذایی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر کیفیت و ارسال و ارزش خرید <sep>

<s>نمونه دیدگاه نظری ندارم از نظر ارزش غذایی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر ارسال و ارزش خرید و کیفیت <sep>

<s>نمونه دیدگاه خیلی خوب از نظر بسته بندی و طعم و ارزش خرید <sep>

<s>نمونه دیدگاه خیلی خوب از نظر کیفیت و ارسال و بسته بندی <sep>

<s>نمونه دیدگاه منفی از نظر بسته بندی و طعم و کیفیت <sep>

<s>نمونه دیدگاه خیلی خوب از نظر بسته بندی و ارسال <sep>

<s>نمونه دیدگاه خیلی خوب از نظر ارسال و کیفیت <sep>

<s>نمونه دیدگاه خوب از نظر کیفیت و ارسال <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر ارزش خرید و ارزش غذایی <sep>

<s>نمونه دیدگاه خوب از نظر ارزش غذایی و طعم <sep>

<s>نمونه دیدگاه خیلی خوب از نظر ارزش خرید و ارزش غذایی و طعم <sep>

<s>نمونه دیدگاه خیلی خوب از نظر ارسال و بسته بندی و کیفیت <sep>

<s>نمونه دیدگاه منفی از نظر بسته بندی و طعم <sep>

<s>نمونه دیدگاه منفی از نظر بسته بندی و ارزش غذایی <sep>

<s>نمونه دیدگاه منفی از نظر طعم و کیفیت و ارزش خرید <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر بسته بندی و طعم <sep>

<s>نمونه دیدگاه خیلی خوب از نظر طعم و ارزش غذایی و ارزش خرید <sep>

<s>نمونه دیدگاه خیلی خوب از نظر ارزش غذایی و ارزش خرید <sep>

<s>نمونه دیدگاه خیلی خوب از نظر ارزش خرید و طعم و بسته بندی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر کیفیت و بسته بندی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر ارزش خرید و کیفیت و طعم <sep>

<s>نمونه دیدگاه منفی از نظر ارزش خرید و کیفیت و طعم <sep>

<s>نمونه دیدگاه منفی از نظر کیفیت و طعم و ارزش غذایی <sep>

<s>نمونه دیدگاه خیلی منفی از نظر ارسال و کیفیت و طعم <sep>

<s>نمونه دیدگاه خیلی خوب از نظر ارزش غذایی و طعم <sep>

<s>نمونه دیدگاه خیلی خوب از نظر طعم و بسته بندی و ارسال <sep>

<s>نمونه دیدگاه خیلی منفی از نظر کیفیت و بسته بندی و طعم <sep>

<s>نمونه دیدگاه خیلی خوب از نظر ارزش غذایی و طعم و کیفیت <sep>

<s>نمونه دیدگاه خیلی منفی از نظر ارزش غذایی و کیفیت <sep>

<s>نمونه دیدگاه منفی از نظر ارزش خرید و طعم و کیفیت <sep>

<s>نمونه دیدگاه خیلی منفی از نظر کیفیت و طعم و بسته بندی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر ارسال و ارزش خرید <sep>

<s>نمونه دیدگاه خیلی منفی از نظر ارزش خرید و طعم و کیفیت <sep>

<s>نمونه دیدگاه خیلی منفی از نظر طعم و ارسال <sep>

<s>نمونه دیدگاه منفی از نظر موسیقی و بازی <sep>

<s>نمونه دیدگاه منفی از نظر داستان <sep>

<s>نمونه دیدگاه خیلی خوب از نظر صدا <sep>

<s>نمونه دیدگاه خیلی منفی از نظر داستان <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر داستان و فیلمبرداری و کارگردانی و بازی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر بازی <sep>

<s>نمونه دیدگاه منفی از نظر داستان و بازی <sep>

<s>نمونه دیدگاه منفی از نظر بازی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر داستان و کارگردانی و بازی <sep>

<s>نمونه دیدگاه خیلی منفی از نظر داستان و بازی <sep>

<s>نمونه دیدگاه خوب از نظر بازی <sep>

<s>نمونه دیدگاه خیلی منفی از نظر بازی و داستان و کارگردانی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر بازی <sep>

<s>نمونه دیدگاه خوب از نظر بازی و داستان <sep>

<s>نمونه دیدگاه خوب از نظر داستان و بازی <sep>

<s>نمونه دیدگاه خوب از نظر داستان <sep>

<s>نمونه دیدگاه خیلی خوب از نظر داستان <sep>

<s>نمونه دیدگاه خیلی خوب از نظر داستان و بازی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر بازی و داستان <sep>

<s>نمونه دیدگاه خیلی منفی از نظر داستان و کارگردانی و فیلمبرداری <sep>

<s>نمونه دیدگاه خیلی منفی از نظر بازی <sep>

<s>نمونه دیدگاه خیلی منفی از نظر کارگردانی <sep>

<s>نمونه دیدگاه منفی از نظر کارگردانی و داستان <sep>

<s>نمونه دیدگاه خیلی خوب از نظر کارگردانی و بازی <sep>

<s>نمونه دیدگاه خوب از نظر کارگردانی و بازی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر صحنه و کارگردانی <sep>

<s>نمونه دیدگاه منفی از نظر بازی و کارگردانی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر بازی و داستان و کارگردانی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر کارگردانی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر فیلمبرداری <sep>

<s>نمونه دیدگاه خیلی خوب از نظر بازی و کارگردانی و فیلمبرداری و داستان <sep>

<s>نمونه دیدگاه خیلی خوب از نظر کارگردانی و بازی و موسیقی <sep>

<s>نمونه دیدگاه خوب از نظر صحنه و بازی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر بازی و موسیقی و کارگردانی <sep>

<s>نمونه دیدگاه خوب از نظر داستان و کارگردانی <sep>

<s>نمونه دیدگاه خوب از نظر بازی و کارگردانی <sep>

<s>نمونه دیدگاه خیلی منفی از نظر بازی و کارگردانی <sep>

<s>نمونه دیدگاه منفی از نظر کارگردانی و موسیقی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر بازی و داستان <sep>

<s>نمونه دیدگاه خوب از نظر کارگردانی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر بازی و کارگردانی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر کارگردانی و داستان <sep>

<s>نمونه دیدگاه خیلی منفی از نظر داستان و کارگردانی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر داستان و کارگردانی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر داستان <sep>

<s>نمونه دیدگاه خوب از نظر بازی و داستان و موسیقی و کارگردانی و فیلمبرداری <sep>

<s>نمونه دیدگاه خیلی منفی از نظر داستان و بازی و کارگردانی <sep>

<s>نمونه دیدگاه خیلی منفی از نظر بازی و داستان <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر داستان و بازی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر داستان و بازی و کارگردانی <sep>

<s>نمونه دیدگاه منفی از نظر بازی و داستان <sep>

<s>نمونه دیدگاه خوب از نظر فیلمبرداری و صحنه و موسیقی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر داستان و کارگردانی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر داستان و کارگردانی و بازی <sep>

<s>نمونه دیدگاه نظری ندارم از نظر بازی <sep>

<s>نمونه دیدگاه منفی از نظر داستان و کارگردانی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر داستان و بازی و صحنه <sep>

<s>نمونه دیدگاه خوب از نظر کارگردانی و داستان و بازی و فیلمبرداری <sep>

<s>نمونه دیدگاه خوب از نظر بازی و صحنه و داستان <sep>

<s>نمونه دیدگاه خیلی خوب از نظر بازی و صحنه و داستان <sep>

<s>نمونه دیدگاه خیلی خوب از نظر بازی و موسیقی و فیلمبرداری <sep>

<s>نمونه دیدگاه خیلی خوب از نظر کارگردانی و صحنه <sep>

<s>نمونه دیدگاه خیلی خوب از نظر فیلمبرداری و صحنه و داستان و کارگردانی <sep>

<s>نمونه دیدگاه منفی از نظر کارگردانی و بازی <sep>

<s>نمونه دیدگاه منفی از نظر کارگردانی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر داستان و فیلمبرداری <sep>

<s>نمونه دیدگاه خیلی خوب از نظر کارگردانی و بازی و داستان <sep>

<s>نمونه دیدگاه خیلی خوب از نظر فیلمبرداری و بازی و داستان <sep>

<s>نمونه دیدگاه خیلی خوب از نظر کارگردانی و بازی و داستان و صحنه <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر موسیقی و کارگردانی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر کارگردانی و داستان <sep>

<s>نمونه دیدگاه خیلی خوب از نظر موسیقی و صحنه <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر صحنه و فیلمبرداری و داستان و بازی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر بازی و داستان و موسیقی و فیلمبرداری <sep>

<s>نمونه دیدگاه خیلی خوب از نظر بازی و فیلمبرداری <sep>

<s>نمونه دیدگاه خیلی منفی از نظر کارگردانی و صدا و صحنه و داستان <sep>

<s>نمونه دیدگاه خوب از نظر داستان و کارگردانی و بازی <sep>

<s>نمونه دیدگاه منفی از نظر داستان و بازی و کارگردانی <sep>

<s>نمونه دیدگاه خوب از نظر داستان و بازی و موسیقی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر بازی و کارگردانی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر کارگردانی <sep>

<s>نمونه دیدگاه خیلی منفی از نظر کارگردانی و بازی و صحنه <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر کارگردانی و بازی <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر صحنه و فیلمبرداری و داستان <sep>

<s>نمونه دیدگاه خوب از نظر موسیقی و داستان <sep>

<s>نمونه دیدگاه منفی از نظر موسیقی و بازی و داستان <sep>

<s>نمونه دیدگاه خیلی خوب از نظر صدا و بازی <sep>

<s>نمونه دیدگاه خیلی خوب از نظر بازی و صحنه و فیلمبرداری <sep>

<s>نمونه دیدگاه خیلی منفی از نظر بازی و فیلمبرداری و داستان و کارگردانی <sep>

<s>نمونه دیدگاه خیلی منفی از نظر صحنه <sep>

<s>نمونه دیدگاه منفی از نظر داستان و صحنه <sep>

<s>نمونه دیدگاه منفی از نظر بازی و صحنه و صدا <sep>

<s>نمونه دیدگاه خیلی منفی از نظر فیلمبرداری و صدا <sep>

<s>نمونه دیدگاه خیلی خوب از نظر موسیقی <sep>

<s>نمونه دیدگاه خوب از نظر بازی و کارگردانی و داستان <sep>

<s>نمونه دیدگاه خیلی خوب از نظر بازی و فیلمبرداری و موسیقی و کارگردانی و داستان <sep>

<s>نمونه دیدگاه هم خوب هم بد از نظر فیلمبرداری و داستان و بازی <sep>

<s>نمونه دیدگاه منفی از نظر صحنه و فیلمبرداری و داستان <sep>

<s>نمونه دیدگاه خیلی خوب از نظر بازی و کارگردانی و داستان <sep>

```

## Questions?

Post a Github issue on the [ParsGPT2 Issues](https://github.com/hooshvare/parsgpt/issues) repo.

|

HScomcom/gpt2-fairytales

|

HScomcom

| 2021-05-21T10:16:43Z | 11 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:04Z |

### Model information

Fine tuning data: https://www.kaggle.com/cuddlefish/fairy-tales

License: CC0: Public Domain

Base model: gpt-2 large

Epoch: 30

Train runtime: 17861.6048 secs

Loss: 0.0412

API page: [Ainize](https://ainize.ai/fpem123/GPT2-FairyTales?branch=master)

Demo page: [End-point](https://master-gpt2-fairy-tales-fpem123.endpoint.ainize.ai/)

### ===Teachable NLP=== ###

To train a GPT-2 model, write code and require GPU resources, but can easily fine-tune and get an API to use the model here for free.

Teachable NLP: [Teachable NLP](https://ainize.ai/teachable-nlp)

Tutorial: [Tutorial](https://forum.ainetwork.ai/t/teachable-nlp-how-to-use-teachable-nlp/65?utm_source=community&utm_medium=huggingface&utm_campaign=model&utm_content=teachable%20nlp)

And my other fairytale model: [showcase](https://forum.ainetwork.ai/t/teachable-nlp-gpt-2-fairy-tales/68)

|

HScomcom/gpt2-MyLittlePony

|

HScomcom

| 2021-05-21T10:09:36Z | 12 | 1 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:04Z |

The model that generates the My little pony script

Fine tuning data: [Kaggle](https://www.kaggle.com/liury123/my-little-pony-transcript?select=clean_dialog.csv)

API page: [Ainize](https://ainize.ai/fpem123/GPT2-MyLittlePony)

Demo page: [End point](https://master-gpt2-my-little-pony-fpem123.endpoint.ainize.ai/)

### Model information

Base model: gpt-2 large

Epoch: 30

Train runtime: 4943.9641 secs

Loss: 0.0291

###===Teachable NLP===

To train a GPT-2 model, write code and require GPU resources, but can easily fine-tune and get an API to use the model here for free.

Teachable NLP: [Teachable NLP](https://ainize.ai/teachable-nlp)

Tutorial: [Tutorial](https://forum.ainetwork.ai/t/teachable-nlp-how-to-use-teachable-nlp/65?utm_source=community&utm_medium=huggingface&utm_campaign=model&utm_content=teachable%20nlp)

|

Ferch423/gpt2-small-portuguese-wikipediabio

|

Ferch423

| 2021-05-21T09:42:53Z | 20 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"pt",

"wikipedia",

"finetuning",

"dataset:wikipedia",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:04Z |

---

language: "pt"

tags:

- pt

- wikipedia

- gpt2

- finetuning

datasets:

- wikipedia

widget:

- "André Um"

- "Maria do Santos"

- "Roberto Carlos"

licence: "mit"

---

# GPT2-SMALL-PORTUGUESE-WIKIPEDIABIO

This is a finetuned model version of gpt2-small-portuguese(https://huggingface.co/pierreguillou/gpt2-small-portuguese) by pierreguillou.

It was trained on a person abstract dataset extracted from DBPEDIA (over 100000 people's abstracts). The model is intended as a simple and fun experiment for generating texts abstracts based on ordinary people's names.

|

lg/ghpy_40k

|

lg

| 2021-05-20T23:37:47Z | 3 | 0 |

transformers

|

[

"transformers",

"pytorch",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z |

# This model is probably not what you're looking for.

|

urduhack/roberta-urdu-small

|

urduhack

| 2021-05-20T22:52:23Z | 884 | 8 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"roberta",

"fill-mask",

"roberta-urdu-small",

"urdu",

"ur",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-03-02T23:29:05Z |

---

language: ur

thumbnail: https://raw.githubusercontent.com/urduhack/urduhack/master/docs/_static/urduhack.png

tags:

- roberta-urdu-small

- urdu

- transformers

license: mit

---

## roberta-urdu-small

[](https://github.com/urduhack/urduhack/blob/master/LICENSE)

### Overview

**Language model:** roberta-urdu-small

**Model size:** 125M

**Language:** Urdu

**Training data:** News data from urdu news resources in Pakistan

### About roberta-urdu-small

roberta-urdu-small is a language model for urdu language.

```

from transformers import pipeline

fill_mask = pipeline("fill-mask", model="urduhack/roberta-urdu-small", tokenizer="urduhack/roberta-urdu-small")

```

## Training procedure

roberta-urdu-small was trained on urdu news corpus. Training data was normalized using normalization module from

urduhack to eliminate characters from other languages like arabic.

### About Urduhack