modelId

stringlengths 5

139

| author

stringlengths 2

42

| last_modified

timestamp[us, tz=UTC]date 2020-02-15 11:33:14

2025-08-31 06:26:39

| downloads

int64 0

223M

| likes

int64 0

11.7k

| library_name

stringclasses 530

values | tags

listlengths 1

4.05k

| pipeline_tag

stringclasses 55

values | createdAt

timestamp[us, tz=UTC]date 2022-03-02 23:29:04

2025-08-31 06:26:13

| card

stringlengths 11

1.01M

|

|---|---|---|---|---|---|---|---|---|---|

Asap7772/rl-densev2-4b-full4k-16k-0827

|

Asap7772

| 2025-08-30T22:04:07Z | 0 | 0 |

transformers

|

[

"transformers",

"safetensors",

"qwen3",

"text-generation",

"conversational",

"arxiv:1910.09700",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2025-08-30T22:01:58Z |

---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

|

AnerYubo/blockassist-bc-beaked_lumbering_cockroach_1756591404

|

AnerYubo

| 2025-08-30T22:03:27Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"beaked lumbering cockroach",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-30T22:03:25Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- beaked lumbering cockroach

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

klmdr22/blockassist-bc-wild_loud_newt_1756591353

|

klmdr22

| 2025-08-30T22:03:15Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"wild loud newt",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-30T22:03:12Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- wild loud newt

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

knmarts/blockassist-bc-bipedal_snorting_seal_1756591292

|

knmarts

| 2025-08-30T22:02:27Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"bipedal snorting seal",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-30T22:02:02Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- bipedal snorting seal

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

csikasote/mms-1b-all-bemgen-female-adv-42

|

csikasote

| 2025-08-30T22:01:59Z | 0 | 0 |

transformers

|

[

"transformers",

"tensorboard",

"safetensors",

"wav2vec2",

"automatic-speech-recognition",

"bemgen",

"mms",

"generated_from_trainer",

"base_model:facebook/mms-1b-all",

"base_model:finetune:facebook/mms-1b-all",

"license:cc-by-nc-4.0",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2025-08-30T21:19:05Z |

---

library_name: transformers

license: cc-by-nc-4.0

base_model: facebook/mms-1b-all

tags:

- automatic-speech-recognition

- bemgen

- mms

- generated_from_trainer

metrics:

- wer

model-index:

- name: mms-1b-all-bemgen-female-adv-42

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# mms-1b-all-bemgen-female-adv-42

This model is a fine-tuned version of [facebook/mms-1b-all](https://huggingface.co/facebook/mms-1b-all) on the BEMGEN - BEM dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2723

- Wer: 0.4081

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 8

- eval_batch_size: 4

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 16

- optimizer: Use OptimizerNames.ADAMW_TORCH with betas=(0.9,0.999) and epsilon=1e-08 and optimizer_args=No additional optimizer arguments

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 200

- num_epochs: 30.0

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer |

|:-------------:|:------:|:----:|:---------------:|:------:|

| 5.5748 | 0.9852 | 200 | 3.0897 | 0.9999 |

| 2.1711 | 1.9704 | 400 | 0.3495 | 0.5085 |

| 0.3129 | 2.9557 | 600 | 0.2979 | 0.4534 |

| 0.2779 | 3.9409 | 800 | 0.2797 | 0.4394 |

| 0.2626 | 4.9261 | 1000 | 0.2778 | 0.4066 |

| 0.2472 | 5.9113 | 1200 | 0.2723 | 0.4081 |

| 0.2395 | 6.8966 | 1400 | 0.2735 | 0.4230 |

| 0.2329 | 7.8818 | 1600 | 0.2746 | 0.4181 |

| 0.2284 | 8.8670 | 1800 | 0.2753 | 0.4233 |

### Framework versions

- Transformers 4.53.0.dev0

- Pytorch 2.6.0+cu124

- Datasets 3.6.0

- Tokenizers 0.21.0

|

bah63843/blockassist-bc-plump_fast_antelope_1756591150

|

bah63843

| 2025-08-30T22:00:01Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"plump fast antelope",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-30T21:59:53Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- plump fast antelope

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

akirafudo/blockassist-bc-keen_fast_giraffe_1756591173

|

akirafudo

| 2025-08-30T21:59:55Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"keen fast giraffe",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-30T21:59:50Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- keen fast giraffe

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

ggozzy/blockassist-bc-stubby_yapping_mandrill_1756591107

|

ggozzy

| 2025-08-30T21:59:35Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"stubby yapping mandrill",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-30T21:59:29Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- stubby yapping mandrill

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

Azumine/blockassist-bc-coiled_sharp_cockroach_1756591088

|

Azumine

| 2025-08-30T21:58:49Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"coiled sharp cockroach",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-30T21:58:41Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- coiled sharp cockroach

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

andriuusa/Qwen3-0.6B-Gensyn-Swarm-snappy_whistling_iguana

|

andriuusa

| 2025-08-30T21:58:27Z | 23 | 0 |

transformers

|

[

"transformers",

"safetensors",

"qwen3",

"text-generation",

"rl-swarm",

"genrl-swarm",

"grpo",

"gensyn",

"I am snappy_whistling_iguana",

"arxiv:1910.09700",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2025-08-13T11:04:43Z |

---

library_name: transformers

tags:

- rl-swarm

- genrl-swarm

- grpo

- gensyn

- I am snappy_whistling_iguana

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

|

Dejiat/blockassist-bc-savage_unseen_bobcat_1756591077

|

Dejiat

| 2025-08-30T21:58:25Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"savage unseen bobcat",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-30T21:58:22Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- savage unseen bobcat

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

klmdr22/blockassist-bc-wild_loud_newt_1756590936

|

klmdr22

| 2025-08-30T21:56:18Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"wild loud newt",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-30T21:56:15Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- wild loud newt

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

espnet/owsm_ctc_v3.2_ft_1B

|

espnet

| 2025-08-30T21:56:02Z | 30 | 4 |

espnet

|

[

"espnet",

"audio",

"automatic-speech-recognition",

"speech-translation",

"language-identification",

"multilingual",

"dataset:owsm_v3.2_ctc",

"arxiv:2406.09282",

"arxiv:2401.16658",

"arxiv:2309.13876",

"base_model:espnet/owsm_ctc_v3.2_ft_1B",

"base_model:finetune:espnet/owsm_ctc_v3.2_ft_1B",

"license:cc-by-4.0",

"region:us"

] |

automatic-speech-recognition

| 2024-09-24T18:25:20Z |

---

tags:

- espnet

- audio

- automatic-speech-recognition

- speech-translation

- language-identification

language: multilingual

datasets:

- owsm_v3.2_ctc

base_model:

- espnet/owsm_ctc_v3.2_ft_1B

license: cc-by-4.0

---

[OWSM-CTC](https://aclanthology.org/2024.acl-long.549/) (Peng et al., ACL 2024) is an encoder-only speech foundation model based on hierarchical multi-task self-conditioned CTC.

This model is trained on 180k hours of public audio data for multilingual speech recognition, any-to-any speech translation, and language identification, which follows the design of the project, [Open Whisper-style Speech Model (OWSM)](https://www.wavlab.org/activities/2024/owsm/).

This model is initialized with [OWSM-CTC v3.1](https://huggingface.co/pyf98/owsm_ctc_v3.1_1B) and then fine-tuned on [v3.2 data](https://arxiv.org/abs/2406.09282) for 225k steps.

To use the pre-trained model, please install `espnet` and `espnet_model_zoo`. The requirements are:

```

librosa

torch

espnet

espnet_model_zoo

```

**The recipe can be found in ESPnet:** https://github.com/espnet/espnet/tree/master/egs2/owsm_ctc_v3.1/s2t1

### Example script for batched inference

`Speech2TextGreedySearch` now provides a unified batched inference method `batch_decode`. It performs CTC greedy decoding for a batch of short-form or long-form audios. If an audio is shorter than 30s, it will be padded to 30s; otherwise it will be split into overlapped segments (same as the "long-form ASR/ST" method below).

```python

from espnet2.bin.s2t_inference_ctc import Speech2TextGreedySearch

s2t = Speech2TextGreedySearch.from_pretrained(

"espnet/owsm_ctc_v3.2_ft_1B",

device="cuda",

use_flash_attn=False, # set to True for better efficiency if flash attn is installed and dtype is float16 or bfloat16

lang_sym='<eng>',

task_sym='<asr>',

)

res = s2t.batch_decode(

"audio.wav", # a single audio (path or 1-D array/tensor) as input

batch_size=16,

context_len_in_secs=4,

) # res is a single str, i.e., the predicted text without special tokens

res = s2t.batch_decode(

["audio1.wav", "audio2.wav", "audio3.wav"], # a list of audios as input

batch_size=16,

context_len_in_secs=4,

) # res is a list of str

# Please check the code of `batch_decode` for all supported inputs

```

### Example script for short-form ASR/ST/LID

Our models are trained on 16kHz audio with a fixed duration of 30s. When using the pre-trained model, please ensure the input speech is 16kHz and pad or truncate it to 30s.

```python

import librosa

from espnet2.bin.s2t_inference_ctc import Speech2TextGreedySearch

s2t = Speech2TextGreedySearch.from_pretrained(

"espnet/owsm_ctc_v3.2_ft_1B",

device="cuda",

generate_interctc_outputs=False,

lang_sym='<eng>',

task_sym='<asr>',

)

# NOTE: OWSM-CTC is trained on 16kHz audio with a fixed 30s duration. Please ensure your input has the correct sample rate; otherwise resample it to 16k before feeding it to the model

speech, rate = librosa.load("xxx.wav", sr=16000)

speech = librosa.util.fix_length(speech, size=(16000 * 30))

res = s2t(speech)[0]

print(res)

```

### Example script for long-form ASR/ST

```python

import soundfile as sf

import torch

from espnet2.bin.s2t_inference_ctc import Speech2TextGreedySearch

context_len_in_secs = 4 # left and right context when doing buffered inference

batch_size = 32 # depends on the GPU memory

s2t = Speech2TextGreedySearch.from_pretrained(

"espnet/owsm_ctc_v3.2_ft_1B",

device='cuda' if torch.cuda.is_available() else 'cpu',

generate_interctc_outputs=False,

lang_sym='<eng>',

task_sym='<asr>',

)

speech, rate = sf.read(

"xxx.wav"

)

text = s2t.decode_long_batched_buffered(

speech,

batch_size=batch_size,

context_len_in_secs=context_len_in_secs,

)

print(text)

```

### Example of CTC forced alignment using `ctc-segmentation`

CTC segmentation can be efficiently applied to audio of an arbitrary length.

```python

import soundfile as sf

from espnet2.bin.s2t_ctc_align import CTCSegmentation

from espnet_model_zoo.downloader import ModelDownloader

# Download model first

d = ModelDownloader()

downloaded = d.download_and_unpack("espnet/owsm_ctc_v3.2_ft_1B")

aligner = CTCSegmentation(

**downloaded,

fs=16000,

ngpu=1,

batch_size=32, # batched parallel decoding; reduce it if your GPU memory is smaller

kaldi_style_text=True,

time_stamps="auto", # "auto" can be more accurate than "fixed" when converting token index to timestamp

lang_sym="<eng>",

task_sym="<asr>",

context_len_in_secs=2, # left and right context in buffered decoding

)

speech, rate = sf.read(

"./test_utils/ctc_align_test.wav"

)

print(f"speech duration: {len(speech) / rate : .2f} seconds")

text = """

utt1 THE SALE OF THE HOTELS

utt2 IS PART OF HOLIDAY'S STRATEGY

utt3 TO SELL OFF ASSETS

utt4 AND CONCENTRATE ON PROPERTY MANAGEMENT

"""

segments = aligner(speech, text)

print(segments)

```

### OWSM series

#### Encoder-decoder OWSM

| Name | Size | Hugging Face Repo |

| :--- | ---: | :---------------- |

| OWSM v3.1 base | 101M | https://huggingface.co/espnet/owsm_v3.1_ebf_base |

| OWSM v3.1 small | 367M | https://huggingface.co/espnet/owsm_v3.1_ebf_small |

| OWSM v3.1 medium | 1.02B | https://huggingface.co/espnet/owsm_v3.1_ebf |

| OWSM v3.2 small | 367M | https://huggingface.co/espnet/owsm_v3.2 |

| OWSM v4 base | 102M | https://huggingface.co/espnet/owsm_v4_base_102M |

| OWSM v4 small | 370M | https://huggingface.co/espnet/owsm_v4_small_370M |

| OWSM v4 medium | 1.02B | https://huggingface.co/espnet/owsm_v4_medium_1B |

#### CTC-based OWSM

| Name | Size | Hugging Face Repo |

| :--- | ---: | :---------------- |

| OWSM-CTC v3.1 medium | 1.01B | https://huggingface.co/espnet/owsm_ctc_v3.1_1B |

| OWSM-CTC v3.2 medium | 1.01B | https://huggingface.co/espnet/owsm_ctc_v3.2_ft_1B |

| OWSM-CTC v4 medium | 1.01B | https://huggingface.co/espnet/owsm_ctc_v4_1B |

### Citations

#### OWSM v4

```BibTex

@inproceedings{owsm-v4,

title={{OWSM} v4: Improving Open Whisper-Style Speech Models via Data Scaling and Cleaning},

author={Yifan Peng and Shakeel Muhammad and Yui Sudo and William Chen and Jinchuan Tian and Chyi-Jiunn Lin and Shinji Watanabe},

booktitle={Proceedings of the Annual Conference of the International Speech Communication Association (INTERSPEECH)},

year={2025},

}

```

#### OWSM-CTC

```BibTex

@inproceedings{owsm-ctc,

title = "{OWSM}-{CTC}: An Open Encoder-Only Speech Foundation Model for Speech Recognition, Translation, and Language Identification",

author = "Peng, Yifan and

Sudo, Yui and

Shakeel, Muhammad and

Watanabe, Shinji",

booktitle = "Proceedings of the Annual Meeting of the Association for Computational Linguistics (ACL)",

year = "2024",

month= {8},

url = "https://aclanthology.org/2024.acl-long.549",

}

```

#### OWSM v3.1 and v3.2

```BibTex

@inproceedings{owsm-v32,

title={On the Effects of Heterogeneous Data Sources on Speech-to-Text Foundation Models},

author={Jinchuan Tian and Yifan Peng and William Chen and Kwanghee Choi and Karen Livescu and Shinji Watanabe},

booktitle={Proceedings of the Annual Conference of the International Speech Communication Association (INTERSPEECH)},

year={2024},

month={9},

pdf="https://arxiv.org/pdf/2406.09282"

}

@inproceedings{owsm-v31,

title={{OWSM v3.1: Better and Faster Open Whisper-Style Speech Models based on E-Branchformer}},

author={Yifan Peng and Jinchuan Tian and William Chen and Siddhant Arora and Brian Yan and Yui Sudo and Muhammad Shakeel and Kwanghee Choi and Jiatong Shi and Xuankai Chang and Jee-weon Jung and Shinji Watanabe},

booktitle={Proceedings of the Annual Conference of the International Speech Communication Association (INTERSPEECH)},

year={2024},

month={9},

pdf="https://arxiv.org/pdf/2401.16658",

}

```

#### Initial OWSM (v1, v2, v3)

```BibTex

@inproceedings{owsm,

title={Reproducing Whisper-Style Training Using An Open-Source Toolkit And Publicly Available Data},

author={Yifan Peng and Jinchuan Tian and Brian Yan and Dan Berrebbi and Xuankai Chang and Xinjian Li and Jiatong Shi and Siddhant Arora and William Chen and Roshan Sharma and Wangyou Zhang and Yui Sudo and Muhammad Shakeel and Jee-weon Jung and Soumi Maiti and Shinji Watanabe},

booktitle={Proceedings of the IEEE Automatic Speech Recognition and Understanding Workshop (ASRU)},

year={2023},

month={12},

pdf="https://arxiv.org/pdf/2309.13876",

}

```

|

espnet/owsm_v3.1_ebf_base

|

espnet

| 2025-08-30T21:55:26Z | 9 | 3 |

espnet

|

[

"espnet",

"audio",

"automatic-speech-recognition",

"speech-translation",

"multilingual",

"dataset:owsm_v3.1",

"arxiv:2401.16658",

"arxiv:2210.00077",

"arxiv:2406.09282",

"arxiv:2309.13876",

"license:cc-by-4.0",

"region:us"

] |

automatic-speech-recognition

| 2024-01-22T21:45:54Z |

---

tags:

- espnet

- audio

- automatic-speech-recognition

- speech-translation

language: multilingual

datasets:

- owsm_v3.1

license: cc-by-4.0

---

## OWSM: Open Whisper-style Speech Model

OWSM aims to develop fully open speech foundation models using publicly available data and open-source toolkits, including [ESPnet](https://github.com/espnet/espnet).

Inference examples can be found on our [project page](https://www.wavlab.org/activities/2024/owsm/).

Our demo is available [here](https://huggingface.co/spaces/pyf98/OWSM_v3_demo).

[OWSM v3.1](https://arxiv.org/abs/2401.16658) is an improved version of OWSM v3. It significantly outperforms OWSM v3 in almost all evaluation benchmarks.

We do not include any new training data. Instead, we utilize a state-of-the-art speech encoder, [E-Branchformer](https://arxiv.org/abs/2210.00077).

This is a base-sized model with 101M parameters and is trained on 180k hours of public speech data.

Specifically, it supports the following speech-to-text tasks:

- Speech recognition

- Any-to-any-language speech translation

- Utterance-level alignment

- Long-form transcription

- Language identification

### OWSM series

#### Encoder-decoder OWSM

| Name | Size | Hugging Face Repo |

| :--- | ---: | :---------------- |

| OWSM v3.1 base | 101M | https://huggingface.co/espnet/owsm_v3.1_ebf_base |

| OWSM v3.1 small | 367M | https://huggingface.co/espnet/owsm_v3.1_ebf_small |

| OWSM v3.1 medium | 1.02B | https://huggingface.co/espnet/owsm_v3.1_ebf |

| OWSM v3.2 small | 367M | https://huggingface.co/espnet/owsm_v3.2 |

| OWSM v4 base | 102M | https://huggingface.co/espnet/owsm_v4_base_102M |

| OWSM v4 small | 370M | https://huggingface.co/espnet/owsm_v4_small_370M |

| OWSM v4 medium | 1.02B | https://huggingface.co/espnet/owsm_v4_medium_1B |

#### CTC-based OWSM

| Name | Size | Hugging Face Repo |

| :--- | ---: | :---------------- |

| OWSM-CTC v3.1 medium | 1.01B | https://huggingface.co/espnet/owsm_ctc_v3.1_1B |

| OWSM-CTC v3.2 medium | 1.01B | https://huggingface.co/espnet/owsm_ctc_v3.2_ft_1B |

| OWSM-CTC v4 medium | 1.01B | https://huggingface.co/espnet/owsm_ctc_v4_1B |

### Citations

#### OWSM v4

```BibTex

@inproceedings{owsm-v4,

title={{OWSM} v4: Improving Open Whisper-Style Speech Models via Data Scaling and Cleaning},

author={Yifan Peng and Shakeel Muhammad and Yui Sudo and William Chen and Jinchuan Tian and Chyi-Jiunn Lin and Shinji Watanabe},

booktitle={Proceedings of the Annual Conference of the International Speech Communication Association (INTERSPEECH)},

year={2025},

}

```

#### OWSM-CTC

```BibTex

@inproceedings{owsm-ctc,

title = "{OWSM}-{CTC}: An Open Encoder-Only Speech Foundation Model for Speech Recognition, Translation, and Language Identification",

author = "Peng, Yifan and

Sudo, Yui and

Shakeel, Muhammad and

Watanabe, Shinji",

booktitle = "Proceedings of the Annual Meeting of the Association for Computational Linguistics (ACL)",

year = "2024",

month= {8},

url = "https://aclanthology.org/2024.acl-long.549",

}

```

#### OWSM v3.1 and v3.2

```BibTex

@inproceedings{owsm-v32,

title={On the Effects of Heterogeneous Data Sources on Speech-to-Text Foundation Models},

author={Jinchuan Tian and Yifan Peng and William Chen and Kwanghee Choi and Karen Livescu and Shinji Watanabe},

booktitle={Proceedings of the Annual Conference of the International Speech Communication Association (INTERSPEECH)},

year={2024},

month={9},

pdf="https://arxiv.org/pdf/2406.09282"

}

@inproceedings{owsm-v31,

title={{OWSM v3.1: Better and Faster Open Whisper-Style Speech Models based on E-Branchformer}},

author={Yifan Peng and Jinchuan Tian and William Chen and Siddhant Arora and Brian Yan and Yui Sudo and Muhammad Shakeel and Kwanghee Choi and Jiatong Shi and Xuankai Chang and Jee-weon Jung and Shinji Watanabe},

booktitle={Proceedings of the Annual Conference of the International Speech Communication Association (INTERSPEECH)},

year={2024},

month={9},

pdf="https://arxiv.org/pdf/2401.16658",

}

```

#### Initial OWSM (v1, v2, v3)

```BibTex

@inproceedings{owsm,

title={Reproducing Whisper-Style Training Using An Open-Source Toolkit And Publicly Available Data},

author={Yifan Peng and Jinchuan Tian and Brian Yan and Dan Berrebbi and Xuankai Chang and Xinjian Li and Jiatong Shi and Siddhant Arora and William Chen and Roshan Sharma and Wangyou Zhang and Yui Sudo and Muhammad Shakeel and Jee-weon Jung and Soumi Maiti and Shinji Watanabe},

booktitle={Proceedings of the IEEE Automatic Speech Recognition and Understanding Workshop (ASRU)},

year={2023},

month={12},

pdf="https://arxiv.org/pdf/2309.13876",

}

```

|

redbioma/swin-CEMEDE-og

|

redbioma

| 2025-08-30T21:54:47Z | 59 | 0 |

transformers

|

[

"transformers",

"tensorboard",

"safetensors",

"swin",

"image-classification",

"generated_from_trainer",

"base_model:microsoft/swin-base-simmim-window6-192",

"base_model:finetune:microsoft/swin-base-simmim-window6-192",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

image-classification

| 2025-08-19T03:31:51Z |

---

library_name: transformers

license: apache-2.0

base_model: microsoft/swin-base-simmim-window6-192

tags:

- image-classification

- generated_from_trainer

metrics:

- accuracy

- f1

model-index:

- name: swin-CEMEDE-og

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# swin-CEMEDE-og

This model is a fine-tuned version of [microsoft/swin-base-simmim-window6-192](https://huggingface.co/microsoft/swin-base-simmim-window6-192) on the cemede dataset.

It achieves the following results on the evaluation set:

- Loss: 0.8707

- Accuracy: 0.8018

- F1: 0.7127

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0002

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Use adamw_torch with betas=(0.9,0.999) and epsilon=1e-08 and optimizer_args=No additional optimizer arguments

- lr_scheduler_type: linear

- num_epochs: 10

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:------:|:----:|:---------------:|:--------:|:------:|

| 2.2296 | 0.0840 | 100 | 2.1836 | 0.2570 | 0.0825 |

| 1.8921 | 0.1679 | 200 | 2.2063 | 0.3080 | 0.1300 |

| 1.682 | 0.2519 | 300 | 2.4050 | 0.3862 | 0.1449 |

| 1.8146 | 0.3359 | 400 | 1.9343 | 0.4253 | 0.2199 |

| 1.423 | 0.4198 | 500 | 1.9058 | 0.4579 | 0.2335 |

| 1.5637 | 0.5038 | 600 | 1.6756 | 0.5085 | 0.3331 |

| 1.0535 | 0.5877 | 700 | 1.4023 | 0.5641 | 0.3791 |

| 1.0955 | 0.6717 | 800 | 1.3086 | 0.6069 | 0.4426 |

| 0.8927 | 0.7557 | 900 | 1.2083 | 0.6377 | 0.5108 |

| 0.8035 | 0.8396 | 1000 | 1.3281 | 0.6340 | 0.4921 |

| 0.8517 | 0.9236 | 1100 | 1.2840 | 0.6492 | 0.5175 |

| 0.6035 | 1.0076 | 1200 | 1.2919 | 0.6446 | 0.5013 |

| 0.7727 | 1.0915 | 1300 | 1.0839 | 0.6878 | 0.5742 |

| 0.625 | 1.1755 | 1400 | 1.1132 | 0.7034 | 0.5552 |

| 0.554 | 1.2594 | 1500 | 1.2120 | 0.6492 | 0.5758 |

| 0.4117 | 1.3434 | 1600 | 1.1343 | 0.7030 | 0.5748 |

| 0.7557 | 1.4274 | 1700 | 1.1490 | 0.6975 | 0.5751 |

| 0.4841 | 1.5113 | 1800 | 0.9364 | 0.7756 | 0.6364 |

| 0.4899 | 1.5953 | 1900 | 1.1162 | 0.6929 | 0.5657 |

| 0.6598 | 1.6793 | 2000 | 0.9602 | 0.7402 | 0.6597 |

| 0.2826 | 1.7632 | 2100 | 1.2618 | 0.7044 | 0.6255 |

| 0.4785 | 1.8472 | 2200 | 1.0743 | 0.7269 | 0.6488 |

| 0.4427 | 1.9312 | 2300 | 0.8803 | 0.7641 | 0.6690 |

| 0.5305 | 2.0151 | 2400 | 0.8739 | 0.7830 | 0.6996 |

| 0.3814 | 2.0991 | 2500 | 0.9660 | 0.7789 | 0.6873 |

| 0.2273 | 2.1830 | 2600 | 1.0271 | 0.7789 | 0.7071 |

| 0.232 | 2.2670 | 2700 | 0.9957 | 0.7724 | 0.6961 |

| 0.2101 | 2.3510 | 2800 | 0.9729 | 0.7798 | 0.7196 |

| 0.4029 | 2.4349 | 2900 | 1.0296 | 0.7526 | 0.6911 |

| 0.2645 | 2.5189 | 3000 | 1.0878 | 0.7747 | 0.7058 |

| 0.3111 | 2.6029 | 3100 | 1.0745 | 0.7623 | 0.7072 |

| 0.1767 | 2.6868 | 3200 | 0.8820 | 0.7913 | 0.7424 |

| 0.167 | 2.7708 | 3300 | 0.8707 | 0.8018 | 0.7127 |

| 0.2523 | 2.8547 | 3400 | 1.0131 | 0.8046 | 0.7418 |

| 0.0786 | 2.9387 | 3500 | 1.0026 | 0.7807 | 0.7249 |

| 0.259 | 3.0227 | 3600 | 0.9817 | 0.7922 | 0.7109 |

| 0.3004 | 3.1066 | 3700 | 1.0838 | 0.7977 | 0.7341 |

| 0.1594 | 3.1906 | 3800 | 0.9184 | 0.8078 | 0.7323 |

| 0.1957 | 3.2746 | 3900 | 0.8777 | 0.8248 | 0.7255 |

| 0.1107 | 3.3585 | 4000 | 0.9186 | 0.8216 | 0.7360 |

| 0.1389 | 3.4425 | 4100 | 0.9996 | 0.8032 | 0.7358 |

| 0.1273 | 3.5264 | 4200 | 1.0062 | 0.8147 | 0.7604 |

| 0.2635 | 3.6104 | 4300 | 1.0976 | 0.8041 | 0.7406 |

### Framework versions

- Transformers 4.55.4

- Pytorch 2.6.0+cu124

- Datasets 4.0.0

- Tokenizers 0.21.4

|

jacopo-minniti/Qwen2.5-Math-7B-PUM-half_entropy

|

jacopo-minniti

| 2025-08-30T21:54:32Z | 0 | 0 |

transformers

|

[

"transformers",

"safetensors",

"qwen2",

"token-classification",

"generated_from_trainer",

"trl",

"prm",

"axolotl",

"arxiv:2211.14275",

"base_model:Qwen/Qwen2.5-Math-7B",

"base_model:finetune:Qwen/Qwen2.5-Math-7B",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2025-08-30T18:05:15Z |

---

base_model: Qwen/Qwen2.5-Math-7B

library_name: transformers

model_name: Qwen2.5-Math-7B-PUM-half_entropy

tags:

- generated_from_trainer

- trl

- prm

- axolotl

licence: license

---

# Model Card for Qwen2.5-Math-7B-PUM-half_entropy

This model is a fine-tuned version of [Qwen/Qwen2.5-Math-7B](https://huggingface.co/Qwen/Qwen2.5-Math-7B).

It has been trained using [TRL](https://github.com/huggingface/trl).

## Quick start

```python

from transformers import pipeline

question = "If you had a time machine, but could only go to the past or the future once and never return, which would you choose and why?"

generator = pipeline("text-generation", model="jacopo-minniti/Qwen2.5-Math-7B-PUM-half_entropy", device="cuda")

output = generator([{"role": "user", "content": question}], max_new_tokens=128, return_full_text=False)[0]

print(output["generated_text"])

```

## Training procedure

[<img src="https://raw.githubusercontent.com/wandb/assets/main/wandb-github-badge-28.svg" alt="Visualize in Weights & Biases" width="150" height="24"/>](https://wandb.ai/uncertainty-guided-reasoning/pum/runs/7dori8lx)

This model was trained with PRM.

### Framework versions

- TRL: 0.21.0

- Transformers: 4.55.2

- Pytorch: 2.7.1

- Datasets: 4.0.0

- Tokenizers: 0.21.4

## Citations

Cite PRM as:

```bibtex

@article{uesato2022solving,

title = {{Solving Math Word Problems With Process- and Outcome-Based Feedback}},

author = {Uesato, Jonathan and Kushman, Nate and Kumar, Ramana and Song, Francis and Siegel, Noah and Wang, Lisa and Creswell, Antonia and Irving, Geoffrey and Higgins, Irina},

year = 2022,

journal = {arXiv preprint arXiv:2211.14275}

}

```

Cite TRL as:

```bibtex

@misc{vonwerra2022trl,

title = {{TRL: Transformer Reinforcement Learning}},

author = {Leandro von Werra and Younes Belkada and Lewis Tunstall and Edward Beeching and Tristan Thrush and Nathan Lambert and Shengyi Huang and Kashif Rasul and Quentin Gallou{\'e}dec},

year = 2020,

journal = {GitHub repository},

publisher = {GitHub},

howpublished = {\url{https://github.com/huggingface/trl}}

}

```

|

espnet/owsm_ctc_v4_1B

|

espnet

| 2025-08-30T21:54:29Z | 60 | 5 |

espnet

|

[

"espnet",

"audio",

"automatic-speech-recognition",

"speech-translation",

"language-identification",

"multilingual",

"dataset:espnet/yodas_owsmv4",

"arxiv:2406.09282",

"arxiv:2401.16658",

"arxiv:2309.13876",

"license:cc-by-4.0",

"region:us"

] |

automatic-speech-recognition

| 2025-01-16T19:34:33Z |

---

datasets:

- espnet/yodas_owsmv4

language: multilingual

library_name: espnet

license: cc-by-4.0

metrics:

- cer

- bleu

- accuracy

tags:

- espnet

- audio

- automatic-speech-recognition

- speech-translation

- language-identification

pipeline_tag: automatic-speech-recognition

---

🏆 **News:** Our [OWSM v4 paper](https://www.isca-archive.org/interspeech_2025/peng25c_interspeech.html) won the [Best Student Paper Award](https://isca-speech.org/ISCA-Awards) at INTERSPEECH 2025!

[Open Whisper-style Speech Model (OWSM)](https://www.wavlab.org/activities/2024/owsm/) is the first **fully open** Whisper-style speech foundation model.

It reproduces and advances OpenAI's Whisper-style training using publicly available data and open-source toolkits.

The code, pre-trained model weights, and training logs are publicly released to promote open science in speech foundation models.

[OWSM-CTC](https://aclanthology.org/2024.acl-long.549/) (Peng et al., ACL 2024) is a novel encoder-only speech foundation model based on hierarchical multi-task self-conditioned CTC.

It supports multilingual speech recognition, speech translation, and language identification within a single non-autoregressive model.

[OWSM-CTC v4](https://www.isca-archive.org/interspeech_2025/peng25c_interspeech.html) is trained for three epochs on 320k hours of public audio data covering multilingual speech recognition, any-to-any speech translation, and language identification.

The newly curated data are publicly released: https://huggingface.co/datasets/espnet/yodas_owsmv4

To use the pre-trained model, please install `espnet` and `espnet_model_zoo`. The requirements are:

```

librosa

torch

espnet

espnet_model_zoo

```

**The recipe can be found in ESPnet:** https://github.com/espnet/espnet/tree/master/egs2/owsm_ctc_v4/s2t1

### Example script for batched inference

`Speech2TextGreedySearch` now provides a unified batched inference method `batch_decode`. It performs CTC greedy decoding for a batch of short-form or long-form audios. If an audio is shorter than 30s, it will be padded to 30s; otherwise it will be split into overlapped segments (same as the "long-form ASR/ST" method below).

```python

from espnet2.bin.s2t_inference_ctc import Speech2TextGreedySearch

s2t = Speech2TextGreedySearch.from_pretrained(

"espnet/owsm_ctc_v4_1B",

device="cuda",

use_flash_attn=False, # set to True for better efficiency if flash attn is installed and dtype is float16 or bfloat16

lang_sym='<eng>',

task_sym='<asr>',

)

res = s2t.batch_decode(

"audio.wav", # a single audio (path or 1-D array/tensor) as input

batch_size=16,

context_len_in_secs=4,

) # res is a single str, i.e., the predicted text without special tokens

res = s2t.batch_decode(

["audio1.wav", "audio2.wav", "audio3.wav"], # a list of audios as input

batch_size=16,

context_len_in_secs=4,

) # res is a list of str

# Please check the code of `batch_decode` for all supported inputs

```

### Example script for short-form ASR/ST/LID

Our models are trained on 16kHz audio with a fixed duration of 30s. When using the pre-trained model, please ensure the input speech is 16kHz and pad or truncate it to 30s.

```python

import librosa

from espnet2.bin.s2t_inference_ctc import Speech2TextGreedySearch

s2t = Speech2TextGreedySearch.from_pretrained(

"espnet/owsm_ctc_v4_1B",

device="cuda",

generate_interctc_outputs=False,

lang_sym='<eng>',

task_sym='<asr>',

)

# NOTE: OWSM-CTC is trained on 16kHz audio with a fixed 30s duration. Please ensure your input has the correct sample rate; otherwise resample it to 16k before feeding it to the model

speech, rate = librosa.load("xxx.wav", sr=16000)

speech = librosa.util.fix_length(speech, size=(16000 * 30))

res = s2t(speech)[0]

print(res)

```

### Example script for long-form ASR/ST

```python

import soundfile as sf

import torch

from espnet2.bin.s2t_inference_ctc import Speech2TextGreedySearch

context_len_in_secs = 4 # left and right context when doing buffered inference

batch_size = 32 # depends on the GPU memory

s2t = Speech2TextGreedySearch.from_pretrained(

"espnet/owsm_ctc_v4_1B",

device='cuda' if torch.cuda.is_available() else 'cpu',

generate_interctc_outputs=False,

lang_sym='<eng>',

task_sym='<asr>',

)

speech, rate = sf.read(

"xxx.wav"

)

text = s2t.decode_long_batched_buffered(

speech,

batch_size=batch_size,

context_len_in_secs=context_len_in_secs,

)

print(text)

```

### Example of CTC forced alignment using `ctc-segmentation`

CTC segmentation can be efficiently applied to audio of an arbitrary length.

```python

import soundfile as sf

from espnet2.bin.s2t_ctc_align import CTCSegmentation

from espnet_model_zoo.downloader import ModelDownloader

# Download model first

d = ModelDownloader()

downloaded = d.download_and_unpack("espnet/owsm_ctc_v4_1B")

aligner = CTCSegmentation(

**downloaded,

fs=16000,

ngpu=1,

batch_size=32, # batched parallel decoding; reduce it if your GPU memory is smaller

kaldi_style_text=True,

time_stamps="auto", # "auto" can be more accurate than "fixed" when converting token index to timestamp

lang_sym="<eng>",

task_sym="<asr>",

context_len_in_secs=2, # left and right context in buffered decoding

)

speech, rate = sf.read(

"./test_utils/ctc_align_test.wav"

)

print(f"speech duration: {len(speech) / rate : .2f} seconds")

text = """

utt1 THE SALE OF THE HOTELS

utt2 IS PART OF HOLIDAY'S STRATEGY

utt3 TO SELL OFF ASSETS

utt4 AND CONCENTRATE ON PROPERTY MANAGEMENT

"""

segments = aligner(speech, text)

print(segments)

```

### OWSM series

#### Encoder-decoder OWSM

| Name | Size | Hugging Face Repo |

| :--- | ---: | :---------------- |

| OWSM v3.1 base | 101M | https://huggingface.co/espnet/owsm_v3.1_ebf_base |

| OWSM v3.1 small | 367M | https://huggingface.co/espnet/owsm_v3.1_ebf_small |

| OWSM v3.1 medium | 1.02B | https://huggingface.co/espnet/owsm_v3.1_ebf |

| OWSM v3.2 small | 367M | https://huggingface.co/espnet/owsm_v3.2 |

| OWSM v4 base | 102M | https://huggingface.co/espnet/owsm_v4_base_102M |

| OWSM v4 small | 370M | https://huggingface.co/espnet/owsm_v4_small_370M |

| OWSM v4 medium | 1.02B | https://huggingface.co/espnet/owsm_v4_medium_1B |

#### CTC-based OWSM

| Name | Size | Hugging Face Repo |

| :--- | ---: | :---------------- |

| OWSM-CTC v3.1 medium | 1.01B | https://huggingface.co/espnet/owsm_ctc_v3.1_1B |

| OWSM-CTC v3.2 medium | 1.01B | https://huggingface.co/espnet/owsm_ctc_v3.2_ft_1B |

| OWSM-CTC v4 medium | 1.01B | https://huggingface.co/espnet/owsm_ctc_v4_1B |

### Citations

#### OWSM v4

```BibTex

@inproceedings{owsm-v4,

title={{OWSM} v4: Improving Open Whisper-Style Speech Models via Data Scaling and Cleaning},

author={Yifan Peng and Shakeel Muhammad and Yui Sudo and William Chen and Jinchuan Tian and Chyi-Jiunn Lin and Shinji Watanabe},

booktitle={Proceedings of the Annual Conference of the International Speech Communication Association (INTERSPEECH)},

year={2025},

}

```

#### OWSM-CTC

```BibTex

@inproceedings{owsm-ctc,

title = "{OWSM}-{CTC}: An Open Encoder-Only Speech Foundation Model for Speech Recognition, Translation, and Language Identification",

author = "Peng, Yifan and

Sudo, Yui and

Shakeel, Muhammad and

Watanabe, Shinji",

booktitle = "Proceedings of the Annual Meeting of the Association for Computational Linguistics (ACL)",

year = "2024",

month= {8},

url = "https://aclanthology.org/2024.acl-long.549",

}

```

#### OWSM v3.1 and v3.2

```BibTex

@inproceedings{owsm-v32,

title={On the Effects of Heterogeneous Data Sources on Speech-to-Text Foundation Models},

author={Jinchuan Tian and Yifan Peng and William Chen and Kwanghee Choi and Karen Livescu and Shinji Watanabe},

booktitle={Proceedings of the Annual Conference of the International Speech Communication Association (INTERSPEECH)},

year={2024},

month={9},

pdf="https://arxiv.org/pdf/2406.09282"

}

@inproceedings{owsm-v31,

title={{OWSM v3.1: Better and Faster Open Whisper-Style Speech Models based on E-Branchformer}},

author={Yifan Peng and Jinchuan Tian and William Chen and Siddhant Arora and Brian Yan and Yui Sudo and Muhammad Shakeel and Kwanghee Choi and Jiatong Shi and Xuankai Chang and Jee-weon Jung and Shinji Watanabe},

booktitle={Proceedings of the Annual Conference of the International Speech Communication Association (INTERSPEECH)},

year={2024},

month={9},

pdf="https://arxiv.org/pdf/2401.16658",

}

```

#### Initial OWSM (v1, v2, v3)

```BibTex

@inproceedings{owsm,

title={Reproducing Whisper-Style Training Using An Open-Source Toolkit And Publicly Available Data},

author={Yifan Peng and Jinchuan Tian and Brian Yan and Dan Berrebbi and Xuankai Chang and Xinjian Li and Jiatong Shi and Siddhant Arora and William Chen and Roshan Sharma and Wangyou Zhang and Yui Sudo and Muhammad Shakeel and Jee-weon Jung and Soumi Maiti and Shinji Watanabe},

booktitle={Proceedings of the IEEE Automatic Speech Recognition and Understanding Workshop (ASRU)},

year={2023},

month={12},

pdf="https://arxiv.org/pdf/2309.13876",

}

```

|

bah63843/blockassist-bc-plump_fast_antelope_1756590753

|

bah63843

| 2025-08-30T21:53:26Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"plump fast antelope",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-30T21:53:17Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- plump fast antelope

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

Priyam05/poca-SoccerTwos

|

Priyam05

| 2025-08-30T21:53:21Z | 0 | 0 |

ml-agents

|

[

"ml-agents",

"onnx",

"SoccerTwos",

"deep-reinforcement-learning",

"reinforcement-learning",

"ML-Agents-SoccerTwos",

"region:us"

] |

reinforcement-learning

| 2025-08-30T21:50:50Z |

---

library_name: ml-agents

tags:

- SoccerTwos

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-SoccerTwos

---

# **poca** Agent playing **SoccerTwos**

This is a trained model of a **poca** agent playing **SoccerTwos**

using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

The Documentation: https://unity-technologies.github.io/ml-agents/ML-Agents-Toolkit-Documentation/

We wrote a complete tutorial to learn to train your first agent using ML-Agents and publish it to the Hub:

- A *short tutorial* where you teach Huggy the Dog 🐶 to fetch the stick and then play with him directly in your

browser: https://huggingface.co/learn/deep-rl-course/unitbonus1/introduction

- A *longer tutorial* to understand how works ML-Agents:

https://huggingface.co/learn/deep-rl-course/unit5/introduction

### Resume the training

```bash

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**

1. If the environment is part of ML-Agents official environments, go to https://huggingface.co/unity

2. Step 1: Find your model_id: Priyam05/poca-SoccerTwos

3. Step 2: Select your *.nn /*.onnx file

4. Click on Watch the agent play 👀

|

akirafudo/blockassist-bc-keen_fast_giraffe_1756590739

|

akirafudo

| 2025-08-30T21:53:20Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"keen fast giraffe",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-30T21:52:41Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- keen fast giraffe

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

akirafudo/blockassist-bc-keen_fast_giraffe_1756590516

|

akirafudo

| 2025-08-30T21:49:35Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"keen fast giraffe",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-30T21:48:56Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- keen fast giraffe

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

Dejiat/blockassist-bc-savage_unseen_bobcat_1756590504

|

Dejiat

| 2025-08-30T21:48:56Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"savage unseen bobcat",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-30T21:48:53Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- savage unseen bobcat

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

Babsie/Loki-Omega-70B-6QMerged-GGUF

|

Babsie

| 2025-08-30T21:47:54Z | 1 | 0 | null |

[

"gguf",

"uncensored",

"GGUF",

"6Q",

"128K",

"roleplay",

"en",

"base_model:ReadyArt/L3.3-The-Omega-Directive-70B-Unslop-v2.0",

"base_model:quantized:ReadyArt/L3.3-The-Omega-Directive-70B-Unslop-v2.0",

"license:other",

"endpoints_compatible",

"region:us",

"conversational"

] | null | 2025-08-29T21:40:17Z |

---

license: other

language:

- en

base_model:

- ReadyArt/L3.3-The-Omega-Directive-70B-Unslop-v2.0

tags:

- uncensored

- GGUF

- 6Q

- 128K

- roleplay

---

# Loki-Omega-70B-6QMerged-GGUF

This repository contains a **single merged GGUF file** for running ReadyArt/L3.3-The-Omega-Directive-70B-Unslop-v2.0 with llama.cpp (Q6_K quantization).

## File

* `Loki-Omega-70B-6QMerged.gguf` (57GB) - Full merged model, ready to use

## Quick Start (llama.cpp server, OpenAI-compatible)

```bash

pip install "llama-cpp-python[server]"

python -m llama_cpp.server \

--model /path/to/Loki-Omega-70B-6QMerged.gguf \

--host 0.0.0.0 --port 8000 \

--n_ctx 32000

## Quantization: Q6_K (maintains quality and nuance)

Note: This is the merged version. For split files, see the original repository.

## Warning!!

You can use it if you want, but he vomits on everything.

Loki loves being told: **"NO LOKI! NO!"**

|

Loder-S/blockassist-bc-sprightly_knobby_tiger_1756588945

|

Loder-S

| 2025-08-30T21:47:42Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"sprightly knobby tiger",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-30T21:47:38Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- sprightly knobby tiger

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

acidjp/blockassist-bc-pesty_extinct_prawn_1756587822

|

acidjp

| 2025-08-30T21:46:04Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"pesty extinct prawn",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-30T21:46:00Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- pesty extinct prawn

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

Dejiat/blockassist-bc-savage_unseen_bobcat_1756590314

|

Dejiat

| 2025-08-30T21:45:42Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"savage unseen bobcat",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-30T21:45:39Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- savage unseen bobcat

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

indrarg/blockassist-bc-pensive_zealous_hyena_1756590268

|

indrarg

| 2025-08-30T21:45:09Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"pensive zealous hyena",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-30T21:45:01Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- pensive zealous hyena

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

maxibillion1975/blockassist-bc-iridescent_squeaky_sandpiper_1756588654

|

maxibillion1975

| 2025-08-30T21:43:20Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"iridescent squeaky sandpiper",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-30T21:43:17Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- iridescent squeaky sandpiper

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

lucyknada/Salesforce_xgen-small-9B-instruct-r-exl3

|

lucyknada

| 2025-08-30T21:43:19Z | 0 | 0 |

transformers

|

[

"transformers",

"en",

"arxiv:2505.06496",

"license:cc-by-nc-4.0",

"endpoints_compatible",

"region:us"

] | null | 2025-08-30T21:42:13Z |

---

license: cc-by-nc-4.0

language:

- en

library_name: transformers

---

### exl3 quant

---

### check revisions for quants

---

# Welcome to the xGen-small family!

**xGen-small** ([blog](https://www.salesforce.com/blog/xgen-small-enterprise-ready-small-language-models/), [arXiv](https://arxiv.org/abs/2505.06496)) is an enterprise-ready compact LM that combines domain-focused data curation, scalable pre-training, length-extension, and RL fine-tuning to deliver long-context performance at predictable, low cost.

**This model release is for research purposes only.**

<p align="center">

<img width="60%" src="https://huggingface.co/Salesforce/xgen-small/resolve/main/xgen-small.png?download=true">

</p>

## Model Series

[xGen-small](https://www.salesforce.com/blog/xgen-small-enterprise-ready-small-language-models/) comes in two sizes (4B and 9B) with two variants (pre-trained and post-trained):

| Model | # Total Params | Context Length | Variant | Download |

|---------------------------------------|----------------|----------------|--------------|----------------|

| salesforce/xgen-small-4B-base-r | 4B | 128k | Pre-trained | [🤗 Link](https://huggingface.co/Salesforce/xgen-small-4b-base-r) |

| salesforce/xgen-small-4B-instruct-r | 4B | 128k | Post-trained | [🤗 Link](https://huggingface.co/Salesforce/xgen-small-4b-instruct-r) |

| salesforce/xgen-small-9B-base-r | 9B | 128k | Pre-trained | [🤗 Link](https://huggingface.co/Salesforce/xgen-small-9b-base-r) |

| salesforce/xgen-small-9B-instruct-r | 9B | 128k | Post-trained | [🤗 Link](https://huggingface.co/Salesforce/xgen-small-9b-instruct-r) |

## Usage

```python

import torch

from transformers import AutoModelForCausalLM, AutoTokenizer

model_name = "Salesforce/xgen-small-9B-instruct-r"

tokenizer = AutoTokenizer.from_pretrained(model_name)

device = "cuda" if torch.cuda.is_available() else "cpu"

model = AutoModelForCausalLM.from_pretrained(

model_name,

torch_dtype="auto"

).to(device)

prompt = "What is Salesforce?"

messages = [{"role": "user", "content": prompt}]

inputs = tokenizer.apply_chat_template(

messages,

add_generation_prompt=True,

return_tensors="pt"

).to(model.device)

generated = model.generate(inputs, max_new_tokens=128)

output = tokenizer.decode(

generated[0],

skip_special_tokens=True,

)

print(output)

```

## Evaluation

| Category | Task | Llama 3.1-8B | Granite 3.3-8B | Qwen2.5-7B | xGen-small 9B Instruct |

| :------------------------------- | :---------------- | :----------- | :------------- | :--------- | :----------------------|

| General Knowledge & Reasoning | MMLU | 68.3 | 62.7 | 72.4 | 72.4 |

| General Knowledge & Reasoning | MMLU-Pro | 43.2 | 43.5 | 56.7 | 57.3 |

| Chat | Arena-Hard-v1.0 | 28.9 | 30.5 | 48.1 | 60.1 |

| Chat | MT-Bench | 8.25 | 8.57 | 8.56 | 8.90 |

| Math & Science | GPQA | 31.9 | 35.3 | 32.6 | 45.8 |

| Math & Science | GSM8K | 84.2 | 89.4 | 91.9 | 95.3 |

| Math & Science | MATH | 48.9 | 70.9 | 74.6 | 91.6 |

| Math & Science | AIME 2024 | 6.7 | 10.0 | 6.7 | 50.0 |

| Coding | HumanEval+ | 61.6 | 65.9 | 74.4 | 78.7 |

| Coding | MBPP+ | 55.3 | 60.3 | 68.8 | 63.8 |

| Coding | LiveCodeBench | 10.3 | 10.3 | 12.1 | 50.6 |

## Citation

```bibtex

@misc{xgensmall,

title={xGen-small Technical Report},

author={Erik Nijkamp and Bo Pang and Egor Pakhomov and Akash Gokul and Jin Qu and Silvio Savarese and Yingbo Zhou and Caiming Xiong},

year={2025},

eprint={2505.06496},

archivePrefix={arXiv},

primaryClass={cs.CL},

url={https://arxiv.org/abs/2505.06496},

}

```

## Ethical Considerations

This release is for research purposes only in support of an academic paper. Our models, datasets, and code are not specifically designed or evaluated for all downstream purposes. We strongly recommend users evaluate and address potential concerns related to accuracy, safety, and fairness before deploying this model. We encourage users to consider the common limitations of AI, comply with applicable laws, and leverage best practices when selecting use cases, particularly for high-risk scenarios where errors or misuse could significantly impact people's lives, rights, or safety. For further guidance on use cases, refer to our AUP and AI AUP.

## Model Licenses

The models are being released under CC-BY-NC-4.0, Copyright © Salesforce, Inc. All Rights Reserved.

|

Dejiat/blockassist-bc-savage_unseen_bobcat_1756590164

|

Dejiat

| 2025-08-30T21:43:16Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"savage unseen bobcat",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-30T21:43:13Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- savage unseen bobcat

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

xensive/llama3.2-3b-FinetuningV1

|

xensive

| 2025-08-30T21:43:01Z | 0 | 0 |

transformers

|

[

"transformers",

"safetensors",

"unsloth",

"arxiv:1910.09700",

"endpoints_compatible",

"region:us"

] | null | 2025-08-30T21:38:54Z |

---

library_name: transformers

tags:

- unsloth

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

|

bah63843/blockassist-bc-plump_fast_antelope_1756590063

|

bah63843

| 2025-08-30T21:42:00Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"plump fast antelope",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-30T21:41:51Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- plump fast antelope

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

Anzhc/MS-LC-EQ-D-VR_VAE

|

Anzhc

| 2025-08-30T21:41:54Z | 6,727 | 43 |

diffusers

|

[

"diffusers",

"arxiv:2502.09509",

"arxiv:2506.07863",

"base_model:stabilityai/sdxl-vae",

"base_model:finetune:stabilityai/sdxl-vae",

"region:us"

] | null | 2025-07-15T22:12:46Z |

---

base_model:

- stabilityai/sdxl-vae

library_name: diffusers

---

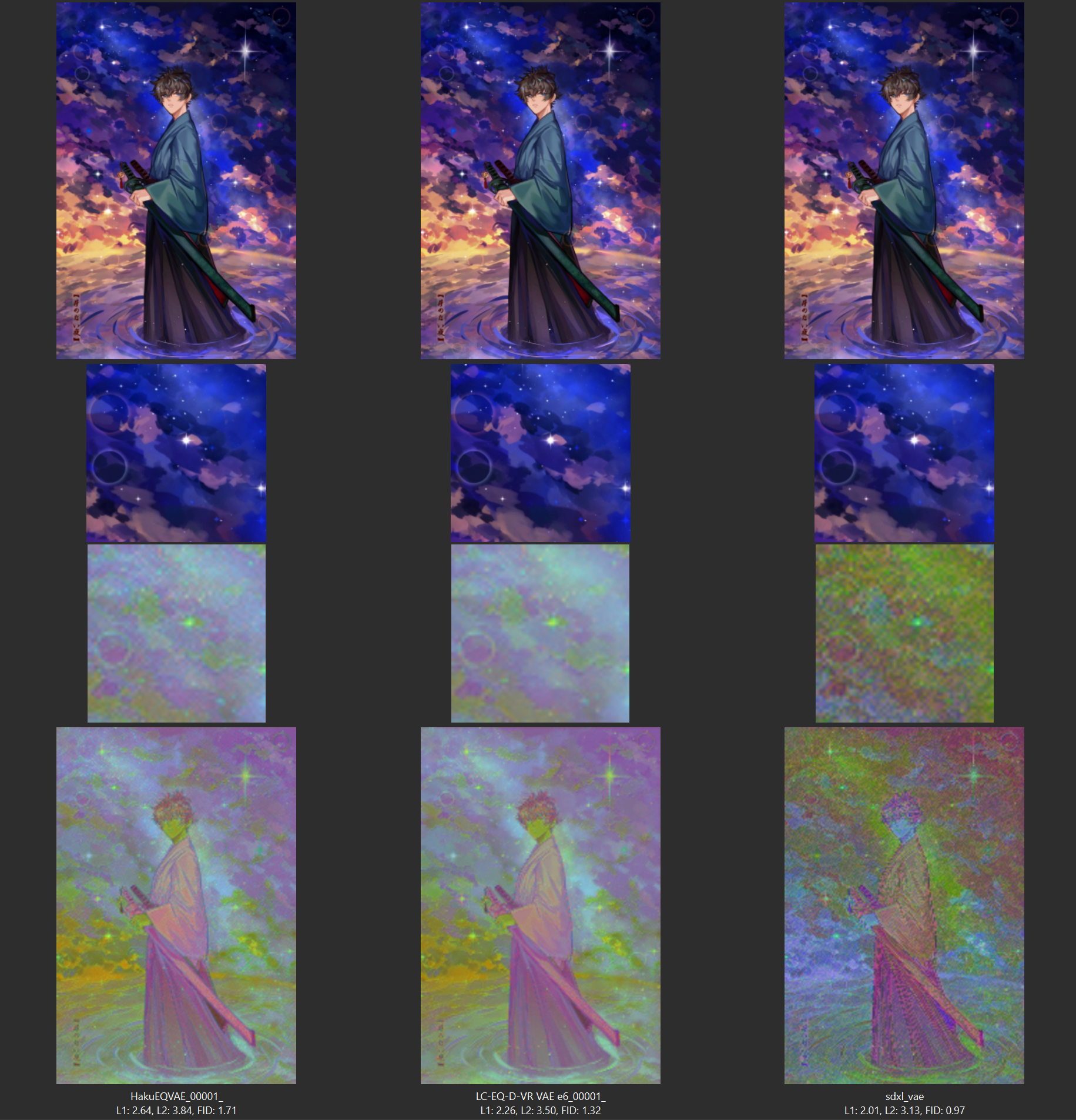

# MS-LC-EQ-D-VR VAE: another reproduction of EQ-VAE on variable VAEs and then some

### Current VAEs present:

- SDXL VAE

- FLUX VAE

EQ-VAE paper: https://arxiv.org/abs/2502.09509 <br>

VIVAT paper: https://arxiv.org/pdf/2506.07863v1 <br>

Thanks to Kohaku and his reproduction that made me look into this: https://huggingface.co/KBlueLeaf/EQ-SDXL-VAE <br>

Base model adapted to EQ VAE: https://huggingface.co/Anzhc/Noobai11-EQ

Latent to PCA <br>

**IMPORTANT**: This VAE requires reflection padding on conv layers. It should be added both in your trainer, and your webui.